The 11th edition of EuroDIG, the pan-European Internet governance event, is taking place on 19-20 June 2019 in The Hague. You can expect sessions on technical and operational issues, cybersecurity, access and literacy, human rights, media and content, innovation and more. RIPE NCC staff at the event will be live-blogging key moments. Come back to this page for regular updates on the issues, arguments and ideas discussed over the course of the meeting.

21 June 2019, 9:00 CET

Day 2: It's a Wrap!

It's been two very full days of workshops, panels, plenaries and discussion - with a little fun on the beach thrown in for good measure (did we mention there were ice cream cones at the social event on Wednesday night?)

The discussions that took place involved stakeholders from business, industry, government, civil society, law enforcement, academia and inter-governmental organisations, and covered topics as varied as artificial intelligence, human rights, DNS over HTTPS, cybersecurity, national sovereignty and climate change. Whew!

We hope you've found this blog useful and would love to hear your thoughts - please leave a comment below.

Oh, and we'll be doing this all over again next year at EuroDIG 2020...in Trieste!

- Suzanne

20 June 2019, 17:00 CET

Day 2: A Chain is as Strong as Its Weakest Link

How should we prepare to face tomorrow’s cybersecurity challenges? How can regulation address rapidly changing technology? These hefty issues with no clear-cut answers kept us busy during a cybersecurity debate just before lunch.

One of the problems panelists kept bringing up was the lack of (economic or other) incentives to address security breaches when they don’t have a direct impact on the individual consumer. People perceive their personal risk as low, and that perception does not match reality.

Think of a webcam getting recruited into a bot army: the consumer wouldn’t feel responsible, is unlikely to experience the effects and might never find out about it. Another example is the disclosure of cyber breaches. Companies are reluctant to reveal that their systems and databases have been breached, possibly because they fear a fine, damage to their reputation, or a loss of trust in their products or services.

Yet this lack of sharing impedes the company's (and everyone else’s) ability to learn from mistakes, stops users from taking action to protect themselves, and ultimately hampers the ability to bring criminals to justice and prevent them from carrying out similar attacks in the future.

RIPE NCC's Marco Hogewoning calls for educating and equipping institutions to enforce existing rules and regulations

So how do we change the incentives? How do we shift the focus from an outward security mindset (protecting the good guys from the bad guys) to an inward security mindset (realising that you, as a good guy, might be a bad actor through lack of care or knowledge)? Tougher fines don’t seem to be the right answer.

While the room agreed that cyber attacks cannot be totally avoided, online hygiene was singled out as paramount for substantially reducing the risk. A chain is only as strong as its weakest link. Society needs to realise that the current system is not prepared for the challenges of the information age and discuss a fundamental reform of the system.

Finally, there was an interesting discussion on whether we should regulate before things go wrong, or once they do. On the one hand, we don’t want to live in a world where a plane manufacturer waits for two planes to crash before installing an update. On the other hand, big disasters are usually a consequence of several things going wrong at the same time, so increasing online literacy and establishing a system of checks and balances might be a better answer.

- Gergana

20 June 2019, 17:00 CET

Day 2: When it Comes to Combatting Online Harms, There’s No One-Size-Fits-All Approach

This session on tackling online harms, which included the RIPE NCC’s own Chris Buckridge as one of its panelists, highlighted how many different aspects are involved in such a broad-reaching issue.

An interesting concept that came up during this and several other sessions was that of duty of care, which the UK government is building into its own approach to combatting online harms. Specifically, it is looking to existing health and safety legislation as a starting point. One question drew an analogy between the duty of care needed to safeguard the public at a community swimming pool and that required at a private hotel pool. In response, it was pointed out that proportionate measures would indeed need to vary across situations and audiences (adults vs. children, for example).

Other concepts that came up included the need for better digital literacy so that users can protect themselves against online harms. Another participant cautioned that we should be careful not to position online users only as potential victims, but also as potential bad actors, and that education should aim to prevent this as well.

Another contribution – one that came up in several sessions throughout the conference – was that existing human rights frameworks have a role to play in protecting users online.

- Suzanne

20 June 2019, 16:30 CET

Day 2: Has the Era of Self-Regulation Ended?

A lot of interesting questions were raised during the session on whether regulation can play a role in ICT innovation:

- How much can industry be relied on to self-regulate?

- How do we build in best practices by design in the early stages of ICT development?

- Do emerging technologies such as IoT need a new regulatory framework, or are existing ones able to tackle the challenges they pose?

During an event like EuroDIG, which is focused on dialogue (it’s in the title, after all) rather than decision-making, a lot of sessions tend to raise a plethora of questions and spark interesting discussion without necessarily offering up a lot of “answers”. This is understandable: the concepts being discussed are complex and nuanced, and the whole purpose of EuroDIG is to give everyone a voice. This session in particular is set to continue at the upcoming Internet Governance Forum in Berlin in November, so stay tuned.

- Suzanne

20 June 2019, 11:00 CET

Day 1: Is GDPR Living Up To Its Promise?

Has this new legal instrument solved all the issues it was intended to address?

In this session, participants discussed how GDPR applies to different technologies and communities, the challenges that various stakeholders have been facing in the year since its implementation, and what needs to be done to further ensure it lives up to its full potential.

Although the regulation was meant to ensure maximum harmonisation, there is clearly still legal uncertainty with regard to its implementation and interpretation. In addition, more training is required to raise awareness among individuals, particularly vulnerable groups in society, of their rights and the protection this law can offer.

With GDPR becoming an international standard and more and more countries aiming to adopt their own equivalent data protection laws, it is paramount to achieve greater consistency across the EU member states, both in how the law is implemented and interpreted and in the enforcement approach followed by the data protection authorities.

Clearly, there's a long way ahead.

- Maria

20 June 2019, 7:30 CET

Day 1: Is Consolidation a Bad Thing?

An interesting discussion spun out of the observation that the Internet is seeing more and more consolidation. Starting from some observations made by ISOC and some of the thinking going on in the IETF and IAB, the question on the table was what the Internet technical community can do to mitigate any negative effects from consolidation.

While there was relatively quick consensus that the answer is “not much”, the bigger question might be whether it is actually up to the technical community to find a solution. As one participant pointed out using the example of messaging, the problem is more often being unable to connect to a platform than a lack of (open) standards. In other words, many of the observed problems are political rather than technical in nature.

From there we jumped into the regulatory space and the application of competition law to curb the power of certain large market players. One of the things that became obvious is that, to a certain extent, current legislation might not be fully compatible with today’s reality. The regulators mentioned that this has led them to investigate whether they need more ex ante tools. Such measures would enable them to tell a particular company to open up a certain platform or interface before any actual damage is done.

There was also a clear request from the regulator to the technical community for help, especially in pointing out when something is not a technical problem. This shows that there is indeed a need for better cooperation and coordination between stakeholders.

Asked whether they saw consolidation as a problem, one participant made the interesting point that this is a matter of ethics: not all consolidation is inherently bad, and it is the underlying motives, such as customer lock-in or increasing profit, that actually make it a bad thing.

As this is an emerging topic, we expect the debate to continue, not only at the IGF but also in venues such as the IETF and the upcoming IAB workshop.

- Marco

19 June 2019, 18:30 CET

Day 1: Technology Respecting Human Rights

The need to address human rights in the online world has taken centre stage on many platforms, and EuroDIG was no exception. So how do we do it effectively?

Panelists acknowledged the need to find a balance between, on the one hand, protecting personal data and respecting privacy and, on the other hand, developing artificial intelligence through big data (and not losing competitive advantage to countries in the rest of the world with fewer protections).

The panel agreed that regulation can't stop bad actors, but that shouldn’t stop us from addressing the issue of human rights in technology

The panelists referred to the existing frameworks protecting human rights offline and argued that those rights should be protected in the same way online. Yet, inexplicably, this is often not the case. The example of the Irish referendum on abortion came up: the government did not regulate online advertisements the same way it regulated offline advertisements. Tech companies voluntarily stepped in. Google decided not to publish advertisements after a certain time, and Facebook decided not to accept advertisements from sources abroad (a restriction that was still easy to circumvent).

Businesses, governments and users all have a piece of the puzzle and share part of the responsibility. To this end, a gap that urgently needs addressing is raising awareness and educating users about their rights, while empowering them to act.

- Gergana

19 June 2019, 14:30 CET

Day 1: Code Makers vs. Law Makers

How do you balance national laws with conflicting global Internet principles? Who gets to make the final decision when the needs of security and freedom of information clash? And how do you enter into dialogue with different stakeholders when they speak different (technical) languages?

The session on the intersection between public policy and technical standards covered all of these topics, with a focus on the role played by code makers vs. law makers. Peter Koch of DENIC explained how, in the early days of the Internet, the code makers developed it with nearly total freedom before regulation ever came into play, and how standards development has evolved over time as the Internet has become an ever more powerful technology.

The RIPE NCC's Chris Buckridge led the panel discussion

Although examples were given where the courts may ultimately need to decide between clashing principles (such as the US Supreme Court ruling on the New York Times' right to publish the Pentagon Papers, a classic example of security vs. freedom of information), the general consensus was that policymakers, the technical community and civil society all need to engage in open dialogue, learn more about the complex issues they’re engaged in and the implications of their work, and build a relationship of trust.

After all, standards are useless if they’re overruled by regulation, and regulation is useless if it isn’t recognised and adopted by the technical community.

- Suzanne

19 June 2019, 13:00 CET

Day 1: GDPR: “Enforcement takes time, but enforcement is coming”

It takes effort to gain privacy. Since the General Data Protection Regulation (GDPR) came into effect last year, data protection authorities throughout the EU have been busy trying to enforce the wide-reaching regulation. Data protection authorities have received 280,000 cases, about 144,000 of which were complaints brought by individuals, resulting in fines totalling more than €56 million.

Some of those high-profile cases include France’s data protection authority fining Google €50 million, and the Portuguese authority fining a hospital over a lack of biomedical data protection.

There has been a trend towards more privacy-friendly browsers, ad blockers and gradually improving cookie walls that are more in line with GDPR. However, some consent mechanisms are still not compliant with GDPR, while others highlight the unintended ways in which some organisations have sought to comply with the regulation. One example is the Washington Post making its content free if you agree to its terms, resulting in the concept of “privacy for the rich”.

In the view of the session’s presenters, however, GDPR has generally been positive in forcing organisations to review their data and how it is stored and processed, although they noted how burdensome this job has been for smaller enterprises. They also noted that the data protection authorities need to balance freedom of information (which they’re often also responsible for) and privacy protection.

As usual when it comes to technological regulation, the issue is complex. For more information on what’s happened since the regulation’s implementation, check out this report by the European Data Protection Board.

19 June 2019, 13:00 CET

Day 1: Internet Governance Needs Efficiency

Listening to Mariya Gabriel, the European Commissioner for Digital Economy and Society, is always inspiring to me: here is a politician praising the multistakeholder approach instead of hogging power for government. However, it was not all flowers and rainbows, as she was very critical of the current model’s lack of efficiency. She is concerned about the insufficient participation and engagement of some stakeholder groups, especially government and business, as well as the insufficient funding for IG events and their undefined agenda. More concretely, she called for:

- A strategic, multi-year programme with the goal to agree on shared principles and norms

- Strengthening the national and regional IG initiatives in terms of capacity building, awareness raising and especially the elaboration of shared issues

- Involving Internet innovators in the policy debate, so they can offer practical solutions to policy issues

Mariya Gabriel, the European Commissioner for Digital Economy and Society, calls for more efficiency in the Internet governance multistakeholder model

Closing her talk, Commissioner Gabriel stressed that policies can be made innovation-friendly without losing their legal certainty, and she underscored the importance of including and cooperating with developing countries, especially the Western Balkans, which are geographically and politically close to the EU.

- Gergana

19 June 2019, 11:30 CET

Day 1: EuroDIG Takes the Dutch Approach with "Polderen"

You can’t start a conference in the Netherlands without introducing participants to the country’s most common governance model: “polderen”. Rooted in the country’s long battle against rising water levels, it describes the age-old tradition of finding consensus among all stakeholders on a crucial issue, namely the water level between dikes. Over time, this principle has been used in many governance debates, whether about social security or corporate governance. The advantage of such a model is that the consensus is adopted voluntarily, as it benefits everyone.

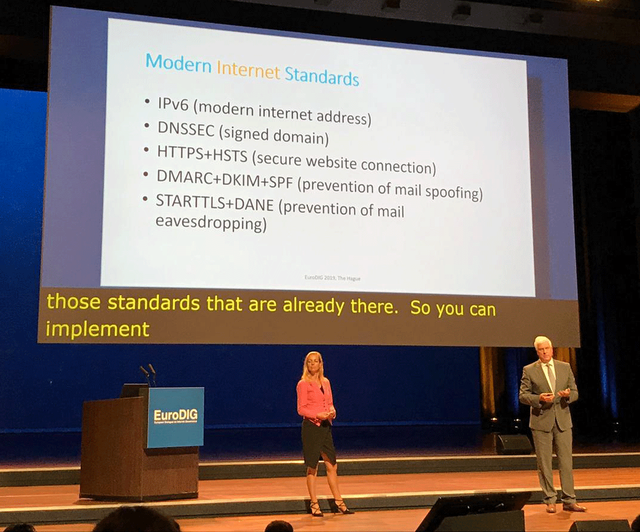

More recently, this same model has been applied to the Dutch Internet. Rather than seeking regulation, the Platform Internetstandaarden (of which the RIPE NCC is a member) brings together a number of stakeholders to reach a common goal: get Dutch Internet users, including the government, to apply modern Internet standards such as IPv6, DNSSEC, HTTPS, TLS, DKIM and DMARC. This is done the “Dutch way”, by talking to the people involved and not only explaining the benefits, but listening to them as well and taking note of what is holding them back.

IPv6 was (literally) centre stage during EuroDIG's opening session

Because feedback indicated that people wanted a benchmark, the platform took it upon itself to create a test bed that provides transparency and allows people to test their own domains. The result is a web portal at http://internet.nl that lets you test your website, your Internet connection and your email, and see how many of these modern standards are properly configured and active for your domain.

Give it a try yourself. Over the years we have seen a number of organisations in both the public and private sectors make some great strides in deploying these standards, with the help of the platform’s expertise and a little motivation. It seems that after all these years, even when applied to a totally different issue, the Dutch approach continues to work.

- Marco

19 June 2019, 10:30 CET

Day 0: To AI or Not to AI…

Never let it be said that Internet governance doesn’t have a sense of irony…

During the preparatory meeting for EuroDIG 2019, which took place in January of this year, I made a comment regarding the number of EuroDIG proposals related to artificial intelligence (AI). My comment was (to paraphrase): AI is not the Internet - do we really want to spend a significant portion of a two-day Internet governance discussion talking about something only partially related to governance of the Internet? Particularly when that topic (AI) has so many of its own deep and unresolved governance issues?

So, of course, on Day 0 of EuroDIG I found myself moderating an hour-long discussion of AI. Alongside my co-moderator from the Dutch Ministry of Economic Affairs and Climate Policy, we led a group of around 25 people in a freewheeling exploration of what place AI has in Internet governance discussions.

While the outcomes were hardly surprising, they are worth noting:

- There are aspects of AI governance that potentially intersect with Internet governance - AI instances often obtain information via the Internet, or communicate with other AI instances via the Internet. But should the risks inherent in those activities be managed via Internet governance, or via governance of AI development?

- AI is often identified as (part of) the solution to Internet governance challenges, particularly when those challenges are characterised by significant amounts of data, so there is a need to understand the technical or policy challenges that such solutions may entail.

- While many other issues intersect with Internet governance (trade, environment), AI is notable in that it doesn’t have a separate, clearly identifiable venue for governance and standardisation, increasing the temptation to dig into AI governance questions within Internet governance discussions.

- That lack of a central AI discussion venue has meant that many of the standardisation or governance efforts relating to AI (and there have been a lot!) have come from government bodies (up to and including the UN). Is there a need for (or even the possibility of) exporting the multistakeholder Internet governance approach to the AI ecosystem?

It’s clear that, in many ways, this is more of a discussion about Internet governance than about AI - which is appropriate, given that the majority of participants in Internet governance discussions are not AI experts. While the overlaps and intersections are unavoidable, careful consideration of what constitutes “Internet governance” is important to how effective events like EuroDIG can be.

- Chris

18 June 2019, 18:35 CET

Day 0: Ethical Considerations for IoT

During this session, which took place the day before the official start of EuroDIG, we discussed what ethical considerations are important for the development, deployment and use of IoT, what prerequisites are important from a security perspective and what issues might become relevant in the future.

Frederick Donck, ISOC, commented that cost-saving incentives for manufacturers mean a lot of devices reach the market without sufficient security and privacy, and that consumers need help to exert pressure on manufacturers. For those of you who want to delve deeper, take a look at ISOC’s report focusing on five key issues: security, privacy, interoperability and standards, regulation, and emerging economies and development.

Jonathan Cave, Alan Turing Institute, raised several points. First, the mechanisms we have for finding new ways of governing ourselves might not function so well in the IoT world. Second, he questioned frameworks built on informed consent and user choice, doubting how much real choice consumers have and to what extent that choice is informed. He argued that users shouldn't be expected to take responsibility for something inherently complex, and that expecting them to do so can be a tactic manufacturers use to shift responsibility to the consumer. (This immediately makes me think about yesterday’s ridiculed, and quickly deleted, tweet by a TV manufacturer encouraging its customers to regularly check their devices for viruses.)

Unsurprisingly, proposals to introduce certification for IoT devices came up. Some worried that certification would produce out-of-control bureaucracy that would keep SMEs out of the game. Arthur van der Wees, founding member of AIOTI, suggested that certification should strike a balance between the purpose or use of the device (lower security for non-essential devices), the cost of implementation (some measures can be quite expensive) and impact (toys may seem innocuous, for example, but can have a big impact on our children).

Maarten Botterman, IGF Dynamic Coalition on IoT, suggested we need meaningful transparency, clear accountability and real choice, and cautioned that although there are diverging ethics in the world, we still need to converge on the frameworks we build before the technology advances so far that it is too late.

- Gergana
