Olivier Crepin-Leblond of the Internet Society's England chapter announces the results of a tool the chapter designed and implemented: an “IPv6 crawler” that crawls through the DNS to detect IPv6-compliant servers. The results are interesting and can be viewed on the “IPv6 Matrix”, which shows IPv6 penetration on geographical maps.

Note: This article has been submitted by Olivier Crepin-Leblond.

Introduction

During the INET London conference, I received feedback that some European Internet Exchange Points had noticed slightly higher than average UDP traffic on their networks. Although I cannot be certain, I suspect this may be caused by our IPv6 Matrix Crawler, which we run pro bono to track the spread of IPv6 content.

I presented some of the results at the conference, which the Internet Society hosted in London on 29 September 2010, and would also like to describe the project here on RIPE Labs.

Background

The Internet Society's England chapter received a Community Grants Programme award in November 2009 for the design and implementation of an “IPv6 crawler”. The tool crawls through the DNS at preset intervals to detect IPv6-enabled DNS servers, web servers, SMTP mail servers and NTP servers, among others.
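The crawler itself was written by the Nile University team and its code is not shown here. As a rough illustration only, the DNS side of such a check might look like the following sketch: for each domain it asks the system resolver for AAAA (IPv6) records on a few candidate host names. The function names `system_aaaa` and `ipv6_hosts`, the choice of candidate prefixes (the bare domain and `www.`), and the use of Python's standard `socket.getaddrinfo` are all assumptions. Finding mail (MX) and name server (NS) hosts would need a DNS library, which this sketch leaves out.

```python
import socket
from typing import Callable, Dict, Iterable, List

# Hypothetical resolver type: takes a host name, returns its IPv6 addresses.
Resolver = Callable[[str], List[str]]


def system_aaaa(host: str) -> List[str]:
    """Return the IPv6 addresses the system resolver finds for host (empty if none)."""
    try:
        infos = socket.getaddrinfo(host, None, socket.AF_INET6, socket.SOCK_STREAM)
    except (OSError, UnicodeError):
        # No AAAA record, resolution failure, or an invalid host name.
        return []
    # For AF_INET6, the sockaddr element is (address, port, flowinfo, scope_id).
    return sorted({info[4][0] for info in infos})


def ipv6_hosts(domain: str,
               resolve: Resolver = system_aaaa,
               prefixes: Iterable[str] = ("", "www.")) -> Dict[str, List[str]]:
    """Map each candidate host under domain to its IPv6 addresses, skipping IPv4-only names."""
    found: Dict[str, List[str]] = {}
    for prefix in prefixes:
        host = prefix + domain
        addrs = resolve(host)
        if addrs:
            found[host] = addrs
    return found
```

Passing the resolver in as a parameter keeps the classification logic testable without network access; called with the default, `ipv6_hosts("example.org")` queries live DNS.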

The search for project partners led to cooperation with Nile University in Egypt. Professors and their research assistants, who wanted to learn more about IPv6, took on the task of writing the software the project required.

In the meantime, the London partners built and installed two servers and a router at Telehouse East, one of the UK's best-connected facilities. One server was set up as the crawler and the other as a web server and data store; both were connected to the Internet backbone over dual-stack IPv4/IPv6.

Running the IPv6 Crawler

The list of domains tested periodically consisted of 980,000 domains served by nearly 5.6 million hosts (the list was based on the world's one million most popular websites, as ranked by Alexa).

The results were surprising: they showed how few major websites support dual-stack IPv4/IPv6. Without IPv6-reachable content, it is no wonder that the volume of IPv6 traffic on the Internet is still so low! The results were saved in text files, and SQL databases were created for easy querying.

Results

The IPv6 Matrix website shows an example front end, which queries the databases and displays the results using the Google Charts APIs. We plan to document the SQL database structure so that other projects can access and retrieve data directly from the databases.
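Because the database structure has not been documented yet, the table in this sketch is purely hypothetical: one row per tested host, with its TLD, the service tested, and whether it was reachable over IPv6. With a layout like that, the per-TLD penetration figures a front end would chart reduce to a single `GROUP BY` query. The sketch uses Python's built-in `sqlite3` module and a few toy rows on reserved example domains, not real crawler data.

```python
import sqlite3
from typing import List, Tuple

# Hypothetical schema: NOT the IPv6 Matrix's actual (undocumented) structure.
SCHEMA = """
CREATE TABLE host_results (
    host     TEXT NOT NULL,
    tld      TEXT NOT NULL,
    service  TEXT NOT NULL,      -- e.g. 'http', 'smtp', 'dns', 'ntp'
    has_ipv6 INTEGER NOT NULL    -- 1 if reachable over IPv6, else 0
)
"""


def make_demo_db() -> sqlite3.Connection:
    """Build an in-memory database holding a few toy rows (illustrative, not real data)."""
    conn = sqlite3.connect(":memory:")
    conn.execute(SCHEMA)
    conn.executemany(
        "INSERT INTO host_results VALUES (?, ?, ?, ?)",
        [
            ("www.example.com", "com", "http", 1),
            ("mail.example.com", "com", "smtp", 0),
            ("ns1.example.com", "com", "dns", 1),
            ("www.example.net", "net", "http", 0),
            ("ns1.example.net", "net", "dns", 0),
        ],
    )
    return conn


def ipv6_penetration_by_tld(conn: sqlite3.Connection) -> List[Tuple[str, int, int, float]]:
    """Return (tld, hosts, ipv6_hosts, percent_ipv6) per TLD, highest share first."""
    return conn.execute(
        """
        SELECT tld,
               COUNT(*)                         AS hosts,
               SUM(has_ipv6)                    AS ipv6_hosts,
               100.0 * SUM(has_ipv6) / COUNT(*) AS percent_ipv6
        FROM host_results
        GROUP BY tld
        ORDER BY percent_ipv6 DESC, tld
        """
    ).fetchall()


if __name__ == "__main__":
    for row in ipv6_penetration_by_tld(make_demo_db()):
        print(row)
```

Keeping one row per host and aggregating at query time means the same table can also answer per-service or per-region questions simply by changing the `GROUP BY` column.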

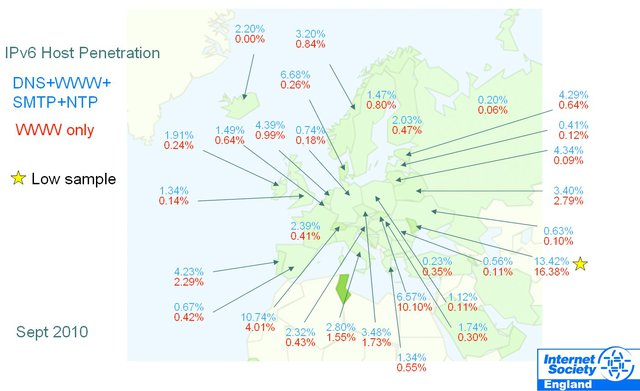

Figure 1: IPv6 Host Penetration in Europe

Currently, a full scan of all TLDs in the database takes just over a month, and the results are stored for future analysis. We hope to see a growth trend in IPv6 within a few months. In the long term, the collected data will let researchers find out exactly how major new technologies, such as the migration to IPv6, spread across the Internet. This will also help identify the early and late adopters of new technology.
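Taking the figures above at face value gives a feel for how gently the crawler works through its list. This back-of-the-envelope sketch assumes that "just over a month" means 31 days and that hosts are tested at an even pace; both are assumptions, since the actual scheduling is not described.

```python
DOMAINS = 980_000      # domains in the test list (from the article)
HOSTS = 5_600_000      # "nearly 5.6 million hosts" (from the article)
SCAN_DAYS = 31         # assumption: "just over a month"


def scan_rates(domains: int, hosts: int, days: float) -> dict:
    """Average workload of one full scan, assuming an even pace throughout."""
    seconds = days * 24 * 60 * 60
    return {
        "hosts_per_domain": hosts / domains,
        "domains_per_day": domains / days,
        "hosts_per_second": hosts / seconds,
    }


if __name__ == "__main__":
    for name, value in scan_rates(DOMAINS, HOSTS, SCAN_DAYS).items():
        print(f"{name}: {value:,.2f}")
```

Under these assumptions a scan averages about 5.7 hosts per domain, roughly 31,600 domains a day, and around two hosts per second.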

In the short term, the maps (see an example in Figure 2 below) give an at-a-glance snapshot of real-world IPv6 penetration, and detailed results are also available, which users can narrow down with configurable filters.

At INET in London, I presented further results for Europe and Asia as of September 2010 (see the IPv6 Matrix Project slides).

One final note, before you ask: we received nine enquiries (out of 980,000 domains scanned) from operators whose firewalls had erroneously flagged some kind of port attack during the scanning process.

These are the only ports which the Crawler tests connectivity to:

- Port 25 (SMTP)

- Port 53 (DNS)

- Port 80 (HTTP)

- Port 443 (HTTPS)

- Port 123 (NTP)

Each test generates an insignificant amount of traffic, on the order of a few bytes. Let me make this clear: we are not performing port scans.
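The article does not say exactly how each service is tested. A minimal sketch, assuming a plain TCP connection attempt for the TCP services and a single NTP client request for port 123, shows why the traffic per test is tiny: a TCP check is one handshake, and an NTP client request is a 48-byte UDP datagram. The names `PROBES`, `tcp_probe`, `ntp_request` and `ntp_probe`, the per-port transport choices and the timeout are all hypothetical. DNS on port 53 is probed over TCP here for simplicity, although DNS queries usually travel over UDP.

```python
import socket
from typing import Optional

# Hypothetical mapping of the five tested ports to the transport used to probe them.
PROBES = {
    25: "tcp",   # SMTP
    53: "tcp",   # DNS (usually UDP in practice; TCP used here for simplicity)
    80: "tcp",   # HTTP
    443: "tcp",  # HTTPS
    123: "udp",  # NTP
}


def tcp_probe(host: str, port: int, family: int = socket.AF_INET6,
              timeout: float = 3.0) -> bool:
    """Return True if a TCP handshake to (host, port) completes; the socket is closed at once."""
    try:
        with socket.socket(family, socket.SOCK_STREAM) as s:
            s.settimeout(timeout)
            s.connect((host, port))
            return True
    except OSError:  # refused, unreachable, timed out, or address family unsupported
        return False


def ntp_request() -> bytes:
    """48-byte NTP client request: LI=0, version 3, mode 3 (client), all other fields zero."""
    return bytes([0x1B]) + bytes(47)


def ntp_probe(host: str, family: int = socket.AF_INET6,
              timeout: float = 3.0) -> Optional[bytes]:
    """Send one NTP request to UDP port 123; return the reply, or None if none arrives."""
    try:
        with socket.socket(family, socket.SOCK_DGRAM) as s:
            s.settimeout(timeout)
            s.sendto(ntp_request(), (host, 123))
            reply, _ = s.recvfrom(512)
            return reply
    except OSError:
        return None
```

Each probe touches a single well-known port once and closes immediately, which is quite different from a port scan sweeping many ports on the same host.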

Next Steps

At this early alpha (or beta) stage, we are looking forward to any feedback regarding the data we collect. Please also point out any bugs you encounter, so that the development team can fix them.

On accuracy, we found that results are less reliable for TLD samples of fewer than 900 domains; larger TLD samples increase accuracy. We therefore invite any registry whose zone sample contains fewer than 1,000 domains to contact us, if interested, to provide a wider sample of input domains for the zone it is responsible for.
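One standard way to see why small samples are less reliable is the binomial standard error of an observed proportion, SE = sqrt(p(1 - p) / n), which shrinks as the sample size n grows. This is only an illustration, not the method used to reach the finding above: it treats a TLD's domains as a simple random sample, which a popularity-based list is not, and the 5% penetration value is hypothetical.

```python
import math


def standard_error(p: float, n: int) -> float:
    """Binomial standard error of a proportion p observed over n sampled domains."""
    if n <= 0:
        raise ValueError("sample size must be positive")
    return math.sqrt(p * (1.0 - p) / n)


if __name__ == "__main__":
    p = 0.05  # hypothetical share of domains found IPv6-ready
    for n in (100, 900, 10_000):
        se = standard_error(p, n)
        print(f"n={n:>6}: {p:.0%} +/- {1.96 * se:.1%} (approx. 95% interval)")
```

At that hypothetical 5% share, the approximate 95% margin narrows from about 4.3 percentage points with 100 domains to about 1.4 with 900 and 0.4 with 10,000. The normal approximation is itself rough when n times p is small.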

Comments

Anonymous

Note that it would have been useful to mention similar tools, such as:

- Hurricane Electric: http://bgp.he.net/ipv6-progress-report.cgi

- DNSwitness: http://www.ripe.net/ripe/meetings/ripe-57/presentations/Bortzmeyer-_A_versatile_platform_for_DNS_metrics_with_its_application_to_IPv6_.Glve.pdf

Anonymous

Thanks for pointing these out, Stéphane. Would it be useful to describe these in more detail on RIPE Labs?

Mirjam