The 12th annual meeting of the Internet Governance Forum (IGF) runs from 17 to 21 December 2017 in Geneva, and the RIPE NCC will be liveblogging key moments from the event. Check back on this page from Sunday afternoon for regular updates on the issues, arguments and ideas from RIPE NCC staff and RIPE community members.

22 December 2017, 12:00 UTC

Day 5 (After the Event): Addressing the Content Side of IPv6

Hi, it’s Ragnar again. One of my goals in attending the IGF was to try to understand why IPv6 adoption is taking so long, from the policymaker’s point of view. As an engineer who knows that IPv6 is relatively easy to implement from a technical perspective, I often wonder why there is so little growth in IPv6 traffic.

Over the years, we’ve seen that a number of companies and governments have implemented IPv6, and these stakeholders have been very important for its progress. But if we try to analyse why they have invested the time and energy into deploying IPv6, in most cases it really boils down to a kind of idealism on the part of the persons who made the decision. And whether or not these idealists are located in the correct layer of management has ultimately dictated whether an IPv6 implementation has been carried out in a certain company or region. More recently, adoption of IPv6 has also been driven by policymakers and regulators that understand the importance of a timely rollout.

However, in the meetings and sessions I attended at the IGF, the discussions were mostly focused on the End User and the service provider. It seems that most people are under the impression that if ISPs would just enable IPv6, all our problems would be solved. But is that the whole story?

What people often forget is that IPv6 adoption is an end-to-end strategy. You need to enable End User equipment, the home routers, the central networking equipment, all transport networks, data centers and content servers. From my point of view, there were very few who addressed the other half of the equation – content providers. According to Cisco’s 6labs website, as of 29 September 2017, only 33% of the Alexa Top 500 websites were IPv6 enabled. The estimated IPv6 traffic volume is currently around 50%.
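As a rough illustration of how a figure like "33% of sites are IPv6 enabled" can be measured, here is a minimal Python sketch. It assumes that "IPv6 enabled" simply means the hostname resolves to at least one IPv6 address; I don't know how 6labs measures it, and a real survey would also test whether the site is actually reachable over IPv6. The function names are my own.

```python
import socket


def has_ipv6_address(hostname, resolve=socket.getaddrinfo):
    """Return True if `hostname` resolves to at least one IPv6 address.

    `resolve` defaults to the system resolver; it is a parameter only so
    the check can be exercised without network access.
    """
    try:
        results = resolve(hostname, 443,
                          family=socket.AF_INET6,
                          type=socket.SOCK_STREAM)
    except socket.gaierror:
        # The resolver found no IPv6 address for this name.
        return False
    return len(results) > 0


def ipv6_share(hostnames, resolve=socket.getaddrinfo):
    """Percentage of `hostnames` that have at least one IPv6 address."""
    if not hostnames:
        return 0.0
    enabled = sum(1 for name in hostnames if has_ipv6_address(name, resolve))
    return 100.0 * enabled / len(hostnames)
```

Calling `ipv6_share` with a list of site names gives the percentage that publish an IPv6 address, which is only a lower bar than "works over IPv6".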

As Ronny Vanningh from Proximus mentioned in his presentation at a session on the impact of CGN, we must also remember to focus on content and content providers. If there is no IPv6 content, there is no need for IPv6 on the End User’s side. In Norway, 36.6% of the Alexa Top 100 websites are IPv6 enabled, but many of the larger content providers still do not support IPv6, including most government sites. So, for Norwegian users with IPv6 enabled, there is still a lot of local traffic going over IPv4.

For many years, people have been looking for a clear business case or “killer app” for IPv6. More recently, one business case has been emerging, especially for ISPs that don’t have an abundance of IPv4 address space. For these ISPs, CGN or any other transition technology will drive up the cost for Internet services, unless they do IPv6. However, considering the points discussed earlier, turning on IPv6 will not solve the CGN problem. An ISP still needs to forward IPv4 packets, which are around 50% of the ISP's traffic, and the ISP needs to bear that cost. The only solution for this is to move more traffic over to IPv6 – but there is little the ISP can do about this until the content is there. So how can we get content providers to switch on IPv6?

Europol and national police authorities are also looking at the CGN problem, which is increasingly interfering with their criminal investigations. They rely on logging by both the ISP and the content provider to solve these cases, but this has become more difficult now that CGNs are involved. Europol is engaging with the Internet community to look for ways to reduce the need for CGNs.

However, Europol’s message is also one-sided. They want ISPs to turn on IPv6 for End Users so they can more easily identify the perpetrator in criminal cases. At the IGF session, they showed an example of someone trying to sell an AK-47 assault rifle on a used gun website. They were not able to identify the seller because the seller was behind a CGN. They were under the impression that if the seller had been on IPv6, they would have found him more easily. But they are also missing the point – an IPv6 session has two sides – the End User and the website. If the website is not on IPv6, how would they be able to identify the End User? That person would still be hidden behind a CGN.
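The two-sided nature of a session can be put in a few lines of Python. This is only a sketch of the reasoning above, assuming a dual-stack End User whose IPv4 connectivity goes through a CGN; the function name and the returned labels are my own.

```python
def session_path(user_has_ipv6: bool, site_has_ipv6: bool) -> str:
    """Describe which path a session takes.

    Assumes the End User's IPv4 connectivity always goes through a CGN,
    as in the scenario discussed in this post.
    """
    if user_has_ipv6 and site_has_ipv6:
        # Both ends speak IPv6, so the website sees the user's own address.
        return "IPv6 end to end"
    # If either side lacks IPv6, the session falls back to IPv4, and the
    # website only sees the shared public address of the CGN.
    return "IPv4 via CGN"
```

With `session_path(True, False)`, an IPv6-enabled user visiting an IPv4-only website, the result is still "IPv4 via CGN", which is exactly the case in the Europol example.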

My motivation for this blog post is to raise awareness that we need to address the content side as well. My message to both the technical community and governance community is that while addressing the ISP side is important, just as much focus needs to be given to content providers and data centres. For IPv6 to work, the whole value chain needs to make the transition.

- Ragnar Anfinsen

21 December 2017, 10:00 UTC

Day 4: Security is Always Excessive... Until it's Not Enough!

Recent attacks have led governments to conclude that they need to increase cybersecurity, and fast. In a blog post from yesterday, you can read more about the rapid establishment of CERTs. Today’s session on multistakeholder collaboration in cybersecurity response gave an overview of what different countries are doing.

South Korea established KISA (the Korea Internet and Security Agency) in 2004. The agency maintains the Korean number space and the .kr ccTLD, runs the CERT, and provides a host of other activities such as promoting safe Internet use to citizens, helping telcos detect threats on the web and capacity building. The speaker underscored the importance of cooperating with other countries on cybersecurity issues and explained that when doing so, it is very important to keep in mind that their regulatory environment and way of working is different.

Rwanda started its cybersecurity development in 2009, with the help of none other than South Korea’s KISA. The government worked with KISA to establish a national cybersecurity strategy and build capabilities to protect critical infrastructure. A few years later, they established a CERT and defined the public key infrastructure. Admitting their inexperience, they made training their personnel a priority. Now well established, Rwanda is actively cooperating with neighbouring countries and organising and participating in regional cybersecurity drills.

The private sector owns the majority of Internet infrastructure and no cybersecurity strategy would be complete without their involvement. Microsoft reminded attendees at the session that they started working on cybersecurity in the early 2000s and have plenty of experience to offer. They urged countries to come to an agreement to enable a global approach to counter cyberattacks and establish a neutral independent attribution organisation.

- Gergana

20 December 2017, 17:30 UTC

Day 3: Artificial Intelligence in Asia: What's Similar, What's Different? Findings from our AI Workshops

When Western societies think of AI, the first things that come to mind are scenes from Terminator or Ex Machina. As this session covered, Asian societies think about AI differently.

Speakers presented some examples that articulated the Asian understanding of AI: several years ago some people in Japan held funerals for robot dogs after Sony let them die; people in Asia are curing their loneliness by having robot companions, which can become one’s replika by learning from them over time; babysitting robots are a child’s teacher, friend, and sibling all in one; psychologically vulnerable Asians feel more comfortable talking to a robot that won’t judge them. These examples show that an AI with a personality is not such an unusual concept in Asia.

Speakers then discussed some of the utopian and dystopian scenarios of AI. AI could revolutionise the education and health systems to produce tailor-made programs and treatments; training data sets could have an expiration date; AI systems could be deliberately built rather than the notion of “AI first, everything else later”; and “data socialism” might be a way to allow equal access to data sets so that future AI systems are not monopolised by a small number of players.

These discussions proved that even though the technology is the same, people are using it in different ways, which creates these different levels of interactions. In sum, it wouldn’t be wrong to say that AI is a different reality in different parts of the globe – while in Western societies it is seen as something to be afraid of, in Eastern societies it’s trusted enough to take care of babies.

- Elif

20 December 2017, 17:30 UTC

Day 3: A Fair Internet

In the afternoon of Day 3, I attended two sessions - on gender inclusion and on artificial intelligence (AI) for equity and social justice - dealing with ways to use the Internet and ICT to create a better and fairer world for everyone.

Both sessions debated whether the online world is more or less fair than the offline world. On the one hand, characteristics such as gender, race, religion and so on, might not be immediately observable in the online world, making it a somewhat less discriminatory place. On the other hand, once users are not anonymous and once these characteristics are known, discrimination and violence can spread much faster, much further and last much longer than in the offline world.

The Internet, as well as artificial intelligence, reflects the inequalities in human society. Both panels recognised that technology can either amplify or reduce these inequalities depending on how it is used.

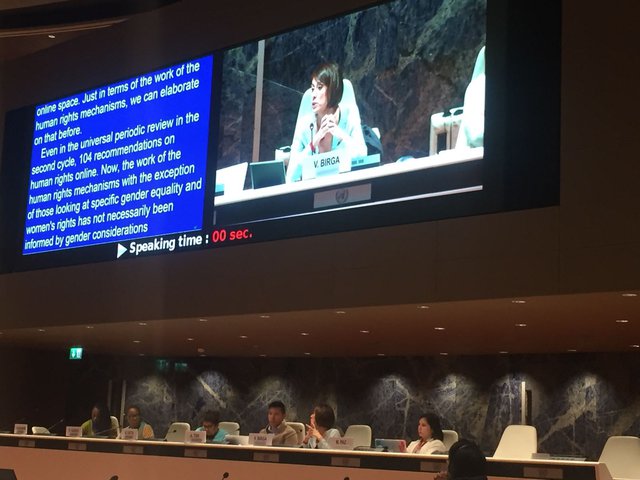

Veronica Birga from the Office of the United Nations High Commissioner for Human Rights: “The use of ICT has become critical for the realisation of certain human rights. Women should be looked as subjects of rights, rather than objects of protection.”

There are two obstacles to fair technology: biases and interests. Biases are inefficiencies that can be corrected with the right data (should there be the will for it!). Interests are deliberate inefficiencies, designed for the benefit of the technology producer – for profit, for example. Needless to say, these are more difficult to counter.

The panel on AI was optimistic that AI will help humanity solve the problem of production, but it would take concentrated willpower and determination to solve the problem of fair distribution. It also cautioned that sometimes the most efficient or easy solution is not the fairest one. The panel concluded by calling for more transparency in algorithms, so we can see how they affect our lives and we can control how they change society.

- Gergana

20 December 2017, 16:00 UTC

Day 3: We Almost Need a Miracle

Over the past year the OECD, led by Bengt Mölleryd, has been studying technology and policy measures that can increase broadband access in rural and remote areas. This was the primary driver behind the workshop I attended. The same goal is also represented in the UN’s Sustainable Development Goal 9.C, which calls to “Significantly increase access to information and communications technology and strive to provide universal and affordable access to the Internet in least developed countries by 2020”.

That was two years ago, and while the goal itself isn’t qualified, in the words of Ms Bogdan-Martin, Chief of Strategic Planning at the ITU: “We almost need a miracle”. And we indeed still have a long way to go to connect every citizen to the Internet.

Statistics from developing countries show that while many people now possess a phone, not many own a smartphone. More importantly, there is a significant gap between urban and rural areas in the number of people who own a smartphone.

Apart from general awareness, people often stated that low speed and high cost are significant barriers to adoption. Critically, they also flagged that the stability of rural connectivity was a contributing factor. After all, what use is a smartphone when the connectivity keeps dropping? Somewhat surprising was that a general lack of awareness about what the Internet is or its usefulness for day-to-day business was also cited as a reason why people are not online. As it was pointed out, price is not the only factor that influences Internet adoption and whether people can get a smartphone and get online.

It was flagged that governments can play an important role in making the Internet more useful, for instance with e-gov services that encourage citizens to go online for basic things like registering child births.

During the session it was quickly agreed that the current broadband definition of 256 kbit/s is outdated and insufficient for current applications. At the same time, it was noted that throughput is not the only benchmark - having a stable and reliable service is even more important. However, several speakers agreed that a minimum of 2 Mbit/s is needed to develop useful applications in developing markets.
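To get a feel for the difference between those two figures, here is a small Python sketch. The 5 MB download is a hypothetical example of my own rather than a number from the session, and the calculation ignores protocol overhead and the stability problems mentioned above.

```python
def transfer_seconds(size_megabytes, rate_kbit_per_s):
    """Ideal time to move `size_megabytes` at a steady `rate_kbit_per_s`.

    Uses decimal units (1 MB = 8,000,000 bits, 1 kbit = 1,000 bits) and
    ignores protocol overhead, latency and dropped connections.
    """
    bits = size_megabytes * 8 * 1000 * 1000
    return bits / (rate_kbit_per_s * 1000)


# Hypothetical 5 MB download at the two rates discussed in the session.
old_definition = transfer_seconds(5, 256)    # 156.25 seconds
suggested_floor = transfer_seconds(5, 2000)  # 20.0 seconds
```

In this idealised case the same download drops from over two and a half minutes to 20 seconds.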

A third pillar that was brought in to increase Internet adoption was the availability of content in local languages. This is an issue that’s also been discussed in the technical community for a few years now, such as with the adoption of Internationalised Domain Names and Universal Acceptance.

Summarising his study, Bengt pointed out that while demand is the principal driver for investment, policies by themselves cannot create a market. Looking back at the situation in Sweden, where demand bundling did trigger what he described as “the first wave of network building”, long term maintenance is also important and is often the cause for Swedish networks to fall into commercial hands. He also stressed that policies should not undermine commercial investment and that public networks should also be opened for the market to make use of.

What became clear in this session is that we really need the users to become aware and generate demand, which in turn forms the basis for the large capital investment required. It remains to be seen if this will be enough to facilitate universal deployment to all corners of the globe and to every citizen.

The target of 2020 will indeed require a miracle, but hopefully we can see this debate turn to practical measures that encourage and enable the next billion people to get online.

- Marco

20 December 2017, 11:30 UTC

Day 3: CERTs or Politicians

In an attempt to increase their cybersecurity readiness, states have begun to develop national cybersecurity strategies, and establish CERTs (Computer Emergency Response Teams) or CSIRTs (Computer Security Incident Response Teams). However, the relationship between CERTs and law enforcement agencies and intelligence communities remains unclear. Tensions have begun to emerge, as overly close cooperation with governments can undermine the independence of CERTs.

In the meantime, many CERTs and technical experts have established a community that negotiates and mediates across different cultural practices. They coordinate responses, organise exercises, support each other in handling incidents, have channels for real-time information sharing and encourage regional cooperation.

At the same time, the inability of the fifth UN Group of Governmental Experts on Developments in the field of Information and Telecommunications in the Context of International Security (otherwise known as the UN GGE) to reach consensus on a final report, speaks to the failure of international cybersecurity negotiations. It’s in this context that the idea of science-diplomacy arose – that in difficult political contexts, scientists and technical experts can often overcome the political difficulties and cooperate in a space where politicians fail.

Perhaps unsurprisingly, all panelists agreed that for CERTs to work effectively they should not be politicised and governments should not interfere with their work.

- Gergana

Day 2: Un-convention-al

From Day 0 through the Opening Ceremony and on into the morning of Day 2, a “Digital Geneva Convention” has been one of the buzzwords of this IGF. It’s a concept that’s been brewing for the better part of a year, since Microsoft President Brad Smith fired it into the global consciousness back in February with a keynote presentation to the RSA conference (and post on the Microsoft blog).

The concept is relatively straightforward:

“Just as the Fourth Geneva Convention has long protected civilians in times of war, we now need a Digital Geneva Convention that will commit governments to protecting civilians from nation-state attacks in times of peace. And just as the Fourth Geneva Convention recognized that the protection of civilians required the active involvement of the Red Cross, protection against nation-state cyberattacks requires the active assistance of technology companies.” (From the Microsoft blog post.)

This has been the first opportunity for the global Internet governance fraternity to really take the idea out for a spin - and in the eponymous city no less!

Overall, the tone of response to the idea has been positive, particularly from civil society groups (perhaps not surprising for an idea whose goal is to place limits and restrictions on governments’ right to target civilians). Responses from other stakeholder groups (and individuals) have been more mixed though. Some governments (including the Australian representative) pointed to the positive work already done by governments in this area, including the norms laid out in the 2015 report of the United Nations Group of Governmental Experts on Developments in the Field of Information and Telecommunications in the Context of International Security (thankfully shortened to UN GGE!). They also suggested that people be careful what they wish for, and that a lengthy treaty negotiation would not be conducive to developing practical, relevant solutions to a global cybersecurity situation that many see as urgently in need of attention.

Others from the technical community noted both that governments are often not the parties responsible for cyber attacks, meaning such a treaty would have limited effect, and that the kind of enforceability envisioned in the proposal would require much greater accuracy and reliability in attributing cyber attacks to their perpetrators. Andrew Sullivan of Dyn pointed out that much of the “expert” analysis written online about the 2016 attacks on Dyn systems (and who was responsible) was simply wrong.

For anyone chomping at the bit to be part of this debate, have no fear: the discussion of a Digital Geneva Convention is far from over…

- Chris

Day 2: How to Confuse an Engineer!

My name is Ragnar Anfinsen, CPE architect from Altibox, a Norwegian FTTH ISP. I came onto the RIPE NCC’s radar through my engagement around IPv6 implementation in Norway. First of all, let me thank the RIPE NCC for inviting me to attend this year’s IGF meeting in Geneva – here are a few of my observations.

Ingress

Where is meeting room XXVII? What is Zero-Rating? Why is an IoT device not a computer? Being an engineer at the IGF is an interesting experience, where politicians, lawyers, human rights advocates and others meet up to discuss issues around how the Internet should be governed. For me, the whole experience has been quite confusing: you enter the UNOG building trying to understand what to do and where to go. Even before I got there, I was looking at the agenda, with ten tracks of meetings in ten different rooms and overlapping schedules, and trying to decide which ones to attend, especially based on buzzwords like Zero-Rating, multistakeholders, cybersecurity, blockchain and digital dividend. Arrghhh...

Content

This IGF is not all confusion. I have learned a lot about how the people who are focused on regulation and governance think. I’ve also learned a lot about the distance between the knowledge the governance people have on technology and the knowledge the technology people have about governance.

I had one interesting discussion with a South Korean Professor of Law about net neutrality issues. He was curious to learn about the perspective of network operators on this issue. I gave him my own view, which aligns well with how we do it in Norway and in my company. The most interesting part was that he told me the South Korean government had regulated how their three major ISPs should interoperate. Basically, they had regulated that they should pay each other for the amount of traffic being sent between their networks – not the bandwidth, but rather the number of bytes.

From a technical perspective, this seems very destructive, as the smaller ISP is almost going bankrupt due to the cost of traffic. In my opinion, the regulation pushed by the South Korean government is basically inhibiting competition and promoting violation of net neutrality. Forcing an ISP to charge customers more for its service due to increased cost as a result of the regulation is really bad. Unlike in Norway, where CDNs are permitted, South Korea has deemed CDNs a violation of net neutrality – making it impossible for this ISP to reduce its transit bandwidth through the other ISPs. This is an interesting situation, where the government seems to have really misunderstood how the Internet works. Disclaimer: I do not know the background for the regulation, so I may be missing important factors.

But back to the IGF and confusion. I have heard a lot of interesting statements over the past couple of days, but the best one has to be in the Dynamic Coalition on the Internet of Things, where in the closing remarks one of the panelists stated that an IoT device is not a computer.

- Ragnar Anfinsen

Day 2: Internet of Things and Cyber Security: Will "Regulation" Save the Day?

It’s a difficult question to ask whether the IoT should be regulated, and one that raises numerous other complicated questions. This session provided a chance to try and answer these questions, with a range of participants and speakers, some of whom were in favor of regulation and others… not so much.

People who opposed IoT regulation centered their discussions around the fact that technologies and societies are not static; on the contrary, it is this very dynamic technology that undermines the effectiveness of static regulations. Also, there is a misbelief about regulations: they are perceived as a magic wand that is going to solve every problem, which has been proven to be wrong in the past. Some of the questions raised were: Who are the subjects of the regulation? What are we protecting: safety of the consumers or the network? If you choose to protect one or the other, how are you going to protect the one that is left? What is the enforceability of these regulations?

People in favor of regulation stated that regulation is important; however, it is not the only tool that governments have to make the industry meet its responsibilities. It was pointed out that principles- or standards-based approaches are inevitable, which requires all of the stakeholders to take action. When considering the fact that consumers do not care about their safety, as well as the industry not wanting to take responsibility, realistic principles and standards that are enforceable across borders could save the day if applied correctly. Also, human-centric and data-centric approaches are needed rather than sector-focused ones when structuring regulations.

Later, it was stated that there are many regulations that already apply to the IoT, like consumer law or data protection law, so it might be more practical to focus on more specific IoT-related problems. In sum, most of the participants were in agreement that IoT-related principles and standards should be tackled at a global level that brings targeted solutions to targeted subjects and industries, and are enforceable.

- Elif

Day 2: Impact of Digitisation on Politics

When thinking about how politics has changed in the past few decades, it’s impossible not to talk about the huge role the Internet has played. Digitisation has aided democracy by making it easier for people to connect, exchange ideas and gather. At the same time, informed choices underpin democracies. The rapid spread of fake news and echo-chambers is a cause of great concern for governments and leads to a lot of mistrust and fear from everyday users.

The panel agreed that there is no easy fix, but that all stakeholders have a role to contribute: media to increase the vigilance of their work, governments to provide a facilitating environment and to invest in digital literacy, the technical community to increase technical security, and citizens to critically assess the content they read.

Maria Gabriel from the European Commission stressed the importance of winning back the trust and confidence in the Internet.

Malavika Jayaram from the Digital Asia Hub spoke of algorithmic fairness: how technology reflects our flawed society and can amplify injustices if left unchecked. Yet the very same technology can also be harnessed to correct these biases. Dunja Mijatovic saw a lack of digital literacy as the main problem and shared her hope that governments invest more in education, rather than forming new agencies.

The panel concluded that digitisation is disrupting democracy as we know it, but that is not necessarily a bad thing: it gives us the opportunity to reflect and improve.

- Gergana

Day 2: Stop! Resolution Time?

When originally convened back in 2006 as part of the WSIS process, the IGF was set up as a “non-decision making forum”. This may sound a bit strange, but at the time this was a bit of a breakthrough and a necessary compromise to facilitate having this type of meeting at all. While many still see the IGF as a toothless animal, you might also call it a blessing in disguise.

Sure, on the one hand - why participate in a week-long meeting, knowing that no decisions will be made? But on the other hand, the IGF creates an open atmosphere where, removed from the burden of emerging from negotiations victorious, attendees might be a bit more open and forthcoming with their opinions. It allows room for manoeuvre around the political agenda that is set by the Capital.

Especially in the early days, this may have been a prerequisite for the multi-stakeholder dialogue to bloom, with everybody taking part in their respective role and within their own mandate, and also importantly within their own authority. The Internet is made up of a variety of systems and institutions to govern them, and we need certainty that authoritative institutions (including the RIR communities) won’t suddenly be overridden by decisions made in another forum.

The main goal of the IGF is to exchange views and ideas, to serve as input to the decision-making process elsewhere. An important pillar of our participation in the past 12 years has been capacity building: explaining who the RIRs are, how decisions are made, and encouraging people and organisations to participate in our policy development process.

But as the IGF slowly evolves over time, and recognising that many saw the lack of outcomes as a deficiency, the Multistakeholder Advisory Group (MAG) has introduced working models that allow for the production of outputs. Working ahead of the annual IGF meeting, Best Practice Forums try to collect and document best practices on a number of topics ranging from cybersecurity to IPv6 adoption.

Another form is the Dynamic Coalitions, which over time have also produced a number of “living documents” such as the Good Practices document from the DC on Internet of Things.

We now seem to have reached the next stage. The new Dynamic Coalition on Trade and the Internet has just concluded drafting a resolution, which has now been agreed as the consensus outcome of the Dynamic Coalition.

It might not sound like a big thing, but in the context of the United Nations, using a “resolution” that includes common political language such as “recommendations” is quite a big leap from the original inception of the IGF.

I’ll come back to the importance of Trade for the Internet, one of the emerging topics of this IGF, in another blog post. But now having a group of participants, under the flag of the IGF, calling on governments to apply some multistakeholder principles to trade negotiations, including consultations with experts and the people influenced by those policies, is a strong message and as such a big step for the IGF as a whole.

- Marco

Day 2: Back to the Roots, Visiting the Birthplace of the World Wide Web

The location of this year’s IGF, Geneva, is famous for a lot of things: the UN of course, the lake and its landmark “jet d’eau”. It’s also home to CERN, the European research centre on nuclear physics, which is maybe most famous for the Large Hadron Collider (LHC), a 27km particle accelerator built deep down in the bedrock.

Over the years, experiments with the LHC and all of its predecessors have yielded numerous scientific breakthroughs in the discovery of subatomic particles. As you can’t really go out and buy a particle accelerator in a shop, let alone a 27km one, all these tools have to be designed and built as part of the experiment. And throughout the years, CERN researchers have invented countless tools.

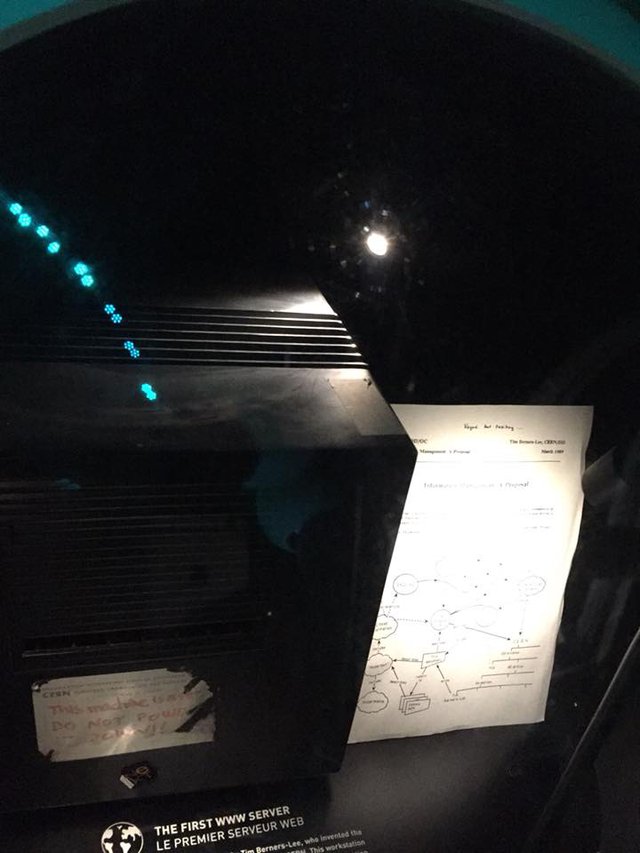

One of those inventions has turned into a tool that most of us use on a daily basis: the World Wide Web. The Hypertext Transfer Protocol (HTTP), with its ability to link from one document to others, was the idea of a CERN scientist who was looking for a more effective way to organise the information and scientific papers they were producing and referencing. Sir Tim Berners-Lee drew up some ideas and implemented some code. Little did he know at the time (1989) the sort of information revolution he had unlocked.

At the visitors’ centre, that first webserver, together with a copy of the original plan, is now displayed in its own little glass box, alongside testaments of other great discoveries such as the Higgs Boson, and rightly so. While the exact impact of the Higgs is yet unknown, we certainly can assess the change that the World Wide Web has brought, becoming almost synonymous with the Internet.

The world's first web server at CERN - see the note on the back!

I also couldn’t help noticing the first traces of Internet governance, as posted on the side of the machine is a little note warning people not to turn it off. Yes, back in 1989 the World Wide Web actually had a physical switch. We have come a long way from that, removing all the single points of failure and creating a system that to a large extent just keeps going – there is no way to switch it off.

The Internet’s Big Bang occurred here in Geneva in 1989 and created an ever-expanding digital world. Now close to 30 years later, we are back in town, discussing how to govern this world. It’s good to look back at how it all started and appreciate the value we have created. Better yet, let’s embrace all that energy and move forward, realising that trying to stop or halt that movement is likely not possible. It’s taken a lot of time and energy, but the days of that single off button are long gone. Let’s stop trying to create a new one.

- Marco

Day 1: China in a Talking Shop

OK, that was a pretty tortured title… but one of the sessions earlier this morning presented a fascinating illustration of how differently Internet governance is viewed by different stakeholder groups and in different countries. “China’s Internet Policy to Shape the Digital Future” featured numerous speakers highlighting both the growth and strength of the Chinese Internet industry, and the Chinese government’s strong belief in the need for strong regulation of the Internet.

The need to “administer all, supervise all, manage the Internet according to the law” faced some strong push-back from other participants, particularly those concerned with the limited civil society participation in Chinese governance discussions. The response to these concerns, highlighting the annual World Internet Conference (also known as Wuzhen Summit), a potential competitor with the IGF itself, was telling, with the conference described as a “multiparty” event (perhaps as opposed to “multistakeholder”?) by moderator, Ms. Bin Bin Wei, of the Cyberspace Administration of China.

An interesting take on the age-old Internet governance battles over the need for regulation… and on the growing presence of Chinese stakeholders in Internet governance discussions.

- Chris

Day 1: Artificial Intelligence and Inclusion

We’ve all been hearing the buzzword “artificial intelligence” (AI) a lot more lately. This year, the IGF also included this word in several sessions, including one with fruitful discussions called “AI & Inclusion”, which was an extension of the Global Symposium on AI & Inclusion held in November. As I had attended the symposium, I went into the session with a pretty good understanding of the current debates on AI.

The session centered around the idea of inclusion, which can be difficult because it is often very political, with people attempting to bring others over to their side. One of the big questions was what to include and how, which led to a discussion on how to increase participation from youth. After this, participants started talking about the importance of young people’s role in AI and how it is a necessity to make sure they become more skilled.

The solutions and action points stated in the session are as follows:

- AI-related issues should be looked at as an ecosystem-level problem, and all stakeholders, from individuals to states, have duties.

- AI-related work needs to be interdisciplinary to have a 360-degree view.

- To deal with the human bias problem, one should remember that there are “social solutions to technical problems and technical solutions to social problems”.

- Timing and speed matter in the race to clear the uncertainties around how research and policies impact AI, which will shape future AI practices.

The closing remarks of the session were: Do non-technical people have to learn coding, or do engineers and coders have to learn ethics? Stress the artificial in AI, not the intelligence. And even though researchers are working on AI in a timely manner, it is very different from earlier disruptive technologies in that every single one of us has to be literate in AI, whether we like it or not.

- Elif

Day 1: Seed Alliance Awards Recipients

The winners of this year's Seed Alliance awards hail from Brazil, Cuba, Cameroon, Indonesia, Rwanda and Uganda.

- Gergana

Day 1: How Multistakeholder Cooperation Can Address Internet Disruptions

An interactive panel session at the beginning of the first day of IGF 2017 focused on multistakeholder cooperation for improving the health of the Internet. Internet shutdowns result in severe social and economic costs to societies (one speaker suggested a five-day shutdown might cost as much as 3-4% of a country’s GDP). Often governments are not well informed about the consequences and aren’t aware of different routes to achieve a certain objective. The technical community’s role in educating government about what is possible was recognised.

Vint Cerf: “Data integrity, making sure the data isn’t altered, is just as important as its ability to cross borders and be accessible.”

Encryption can save lives. But encryption regulation is unclear and in some cases contradictory or impossible to follow. Panelists urged governments to put their money where their mouth is and support encryption developers. In addition, the panel agreed that governments should not keep vulnerabilities secret.

Panelists discussed that data should cross borders freely, and be protected both during transfer and at rest. The session finished with the common agreement that keeping the Internet open should be a fundamental goal for all norms, soft laws and regulations.

- Gergana

Day 0: On Identifiers: "Hey, why are you wearing a government badge?"

It's common for people at conferences to look at badges to figure out who you are or who you're working with, and the IGF is no different. However, at UN events such as the IGF, badges have another important feature: the colour on the badge designates which stakeholder group you are with. For the IGF itself, this is not really important as we are all equals, but other conferences might use this coding system to separate government, members and observers.

So, what went wrong here? Absolutely nothing, but the colour picked for “technical community” just happens to be the same as “regular” government badges - both are green. It's not a big issue and it gains us absolutely nothing extra, but this simple example shows how important it is to use identifiers which are unambiguous and unique to avoid confusion.

And we wrap up Day 0 with a discussion on exactly this: identifiers on the Internet. It's a topic that we will revisit a few more times before the week is done, for instance during tomorrow’s workshop “The Future of Internet Identifiers”, in which I will be speaking.

What we need to get clear, and I hope to contribute to this discussion, is what we expect from the different identifiers - whether they are “traditional” DNS labels or IP addresses, which identify a point in the network. The questions remain the same: what do you want the identifier to do? From there we can hopefully identify an existing system that fits these requirements. And where we can't, let’s discuss whether we can adapt an existing system to match those needs and deliver to expectations.

The Internet is changing rapidly, and some identifiers are getting scarce. We need to look at those and figure out where to go next, but maybe adopting an existing system is easier and faster than building a new one from scratch. We built from scratch 20-odd years ago with IPv6, and we have since learned how slow some changes can be.

Lastly, I am not convinced we can ever capture the multi-faceted and multi-layered world of the Internet in a single identifier system. Certainly not without also importing all of the current shortcomings. Crucial to the IGF is the Tunis Agenda, where it describes the multi-stakeholder model as “…each in their respective roles”. Maybe we need to apply this principle to the vast space of names, numbers, addresses and identifiers as well.

- Marco

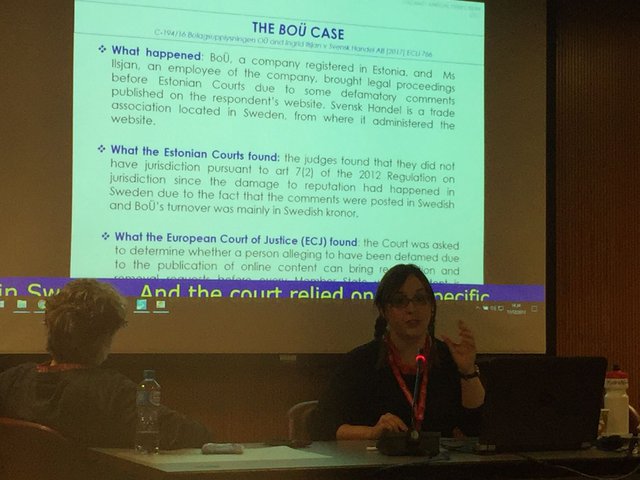

Day 0: GigaNet (continued)

The second half of the GigaNet symposium started with a presentation on the diverse uses of the concept of sovereignty – from the capacity to innovate or engage in technological development, to the control of data flows at the individual, collective and state level. The next talk focused on access-based jurisdiction, which is when countries exercise control over content that is accessible in their territory, even if this content is uploaded or hosted in another country. The presenter outlined the challenges for freedom of expression that such a jurisdictional approach poses.

Former RACI attendee, Sara Solmone from the University of East London, presenting on the dangers of access-based jurisdiction

After this, two talks looked into the process of intermediaries and other private Internet companies gaining greater authority for norm-setting in cyberspace, along with responsibility for cybersecurity and appropriate behaviour online. The next presentation discussed the recent “opportunistic turn” in encryption standardisation, which is happening in a very informal way. The speaker gave the example of the Signal protocol, which appeals to a large pool of users and is being adopted as a de facto standard.

The day ended with three talks exploring the participation of non-governmental actors in Internet governance in Brazil and China. The very low turnover rate in representative bodies is a worrying tendency, which might be due to the rules being skewed in favour of incumbents or the resources that being a member requires. On the other hand, handing over some government functions to non-state actors or civil society could be a good response to slow regulation. Despite its status as a latecomer to the field of Internet governance, China has always been trying to develop a consensus among developing countries in order to be stronger and more convincing at the international level.

- Gergana

Day 0: Auditing Socially-Relevant Algorithms

As a RIPE Fellow who is interested in algorithms and algorithmic decision-making, I found the session on “Data donation: auditing socially relevant algorithms” interesting in a lot of ways.

Algorithm Watch, the host of the session, is a non-profit initiative to shed light on algorithmic decision-making processes with social relevance. The organization collected data for a project called Datenspende that asked whether people saw the same Google search results as their colleagues, particularly during the 2017 German Bundestag election.

To do this, they designed a plug-in that was downloaded by users to detect how personalized their Google search results were through a crowdsourcing method. The plug-in searched for 16 words (e.g. Angela Merkel, Martin Schulz, CDU, SPD etc.) to see whether the results differed for users as the German Bundestag election was taking place. Before Algorithm Watch started the project, they asked Google how personalized users’ search results are, and received the ambiguous answer of “2%.”
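The comparison step behind such a data-donation project can be sketched in a few lines. This is only a minimal illustration, not the method Algorithm Watch actually used: the session did not describe the metric, so Jaccard overlap is an assumption here, and the user names and result URLs are made up.

```python
from itertools import combinations


def jaccard(a, b):
    """Share of results two users have in common (1.0 = identical sets)."""
    set_a, set_b = set(a), set(b)
    if not set_a and not set_b:
        return 1.0
    return len(set_a & set_b) / len(set_a | set_b)


def mean_pairwise_overlap(results_by_user):
    """Average Jaccard overlap across every pair of users for one search term."""
    pairs = list(combinations(results_by_user.values(), 2))
    if not pairs:
        return 1.0
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)


# Hypothetical donated results for a single search term (made-up URLs).
donated = {
    "user_berlin": ["a.example", "b.example", "c.example"],
    "user_munich": ["a.example", "b.example", "d.example"],
    "user_boston": ["a.example", "e.example", "f.example"],
}

overlap = mean_pairwise_overlap(donated)
print(f"Mean pairwise overlap: {overlap:.2f}")
```

A low overlap for a given term would suggest that different users are seeing different results. Note that this sketch ignores ranking order, which a real audit would probably want to take into account.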

Algorithm Watch found that personalization is driven almost exclusively by geographic location. While a politician’s name gave the same results in Germany and the US, a political party’s name could present different results, as the same acronym had different popular meanings in the US and Germany. Algorithm Watch also found a slightly larger difference between results for users who logged into their Google Account and those who didn’t, most likely because of the data available to Google.

The research concluded that the results people see are influenced much more by their region than by individual personalization. Algorithm Watch wanted to convey the message that, as a society, it’s important to develop methods to audit the algorithmic decision-making processes that affect us, and that by devising the right methods, it is feasible to make these processes transparent. The session shed light on why we need to understand how the algorithms we deal with daily work, and showed how we can attempt to understand them better.

- Elif

Day 0: IGF Newcomers and Youth

I started Day 0 with a capacity-building session for youth and newcomers. While these two groups aren't necessarily the same, they do share one important feature in that they are both somewhat new to the Internet and Internet Governance.

A full house at the Introductory Session for IGF Newcomers and Youth

To effectively participate in these discussions, it's important that we can all work from the same information baseline. While a detailed knowledge of all the technical aspects and protocols isn't required, understanding some of the basics of important systems like the DNS, or the institutions involved in managing the DNS and Internet numbering systems, is very important.

Knowing the limits of these systems helps to identify what is possible. Also vitally important is to know how changes are made and where to go to make them. With the IGF being a non-decision-making body, it is a great place to have a dialogue about how we can shape the future. But we should keep in mind that somewhere down the line, when it involves changes, a decision has to be made. And policy fora such as the RIRs and ICANN, and standards development organisations such as the IETF and IEEE, cannot make these decisions without input from the various stakeholders.

It was great to see the room full of young people, motivated to make change. Hopefully we'll see them soon at a meeting of their RIR or their favourite standards body.

- Marco

Day 0: GigaNet

The RIPE NCC has been actively supporting the participation of academics at our various meetings, through programs such as the RIPE Academic Cooperation Initiative and our engagement with universities. So, it's no surprise that we attended the Global Internet Governance Academic Network (GigaNet)’s annual symposium.

The agenda started with presentations on the various technological standards to support language diversity on the Internet, the interoperability challenges that a multilingual Internet is posing, the hurdles minority communities face in getting their languages recognised and obtaining internationalised domain names (IDNs), and the often quite abrasive discourse within the Generic Names Supporting Organisation (GNSO). This was followed by a discussion of the divide within civil society concerning the meaning of terms such as inclusiveness, accountability and transparency in trade policy issues. Trade and development civil society organisations (CSOs) are more cautious about corporate culture than the Internet CSOs, who favour more transparent, multistakeholder participation.

Next, we discussed the dichotomy between the top-down and bottom-up approaches to norm setting and how states, which are used to having a leading role in governance, are slowly trying to take back power in the Internet governance arena. This fed nicely into the next presentation, which addressed conflicts between governments and private corporations over control of access to online personal data.

Finishing on a positive note before lunch, we discussed how organising in groups and collectives when challenging the balance of power, rather than sending individual requests, is much more successful and has led to agencies and authorities actually changing their practices and increasing transparency and user control.

- Gergana

Day 0: Dynamic Coalition on Schools of Internet Governance

A chilly Sunday morning and the first sessions of the IGF 2017 “Day 0” are kicking off for the brave/foolhardy attendees…

One of the first sessions is dedicated to the formation of a Dynamic Coalition on Internet governance schools, an issue in which we at the RIPE NCC have quite a strong interest. A Dynamic Coalition (DC) is a formation within the IGF structure that facilitates practical work on a specific issue throughout the year (not simply at the IGF events), and the focus for this new DC would be on sharing experience from various Internet governance schools to develop best practices and common resources. See the Initial Statement for more information.

At the RIPE NCC, we’ve been supportive of Internet governance schools for the past decade, including the European Summer School on Internet Governance, the Middle East and Adjoining Countries School on Internet Governance (MEAC-SIG) and more recently the Balkan School on Internet Governance. The RIPE NCC contributes to the education of current and future Internet governance participants (and particularly provides a specific technical perspective on IP addressing issues) as part of our broader efforts to ensure that Internet governance discussions reflect the realities of the Internet architecture and industry.

It was clear from the session that the proliferation of these events is a global phenomenon, and one of the key challenges is how to contribute to so many events. One of the strategies that we at the RIPE NCC have been looking at is developing our ability to contribute remotely, either through live streaming or pre-recorded content, and making sure that the content itself is designed to take best advantage of that format. Hopefully the work we do in this area will be of benefit to others who are building Internet governance schools around the world.

- Chris

Comments 3

Daniel Karrenberg •

Who is Vint Cert? ;-)

Mirjam Kühne •

Oops... fixed :-)

Chris Buckridge •

Glad to see you're reading, Daniel! :) I'd just assumed Vint had his own Computer Emergency Response Team now!