This weekend the NANOG mailing list was abuzz about an F-root IPv6 route leak that resulted in the F-root DNS server instance located in Beijing, China, being queried from outside China. This normally doesn't happen, because this instance is advertised with the BGP community NO_EXPORT, which means it should not be visible beyond the immediately neighbouring Autonomous Systems (ASes). We looked at the DNSMON data about this event and found that 5 out of 29 IPv6-enabled DNSMON monitors saw the Beijing F-root instance. We also found there was an earlier leak on 29-30 September.

An email thread on the NANOG mailing list this weekend alerted the network operator community to a route leak of the IPv6 prefix for the F-root DNS server. This leak resulted in part of the global traffic to the F-root server being directed to a specific instance of this server in Beijing, China. On the mailing list there was a strong sentiment that this is bad, because it means that some queries to and responses from F-root will pass through the Great Firewall of China (GFC), which can rewrite queries. People are afraid that the GFC would rewrite DNS queries for clients outside China, which some refer to as exporting censorship. An analysis by Andree Toonk on his BGPMon blog has the BGP view of this event, and shows that a number of providers had their best IPv6 path to the F-root instance in China from approximately 18:00 (UTC), 1 Oct 2011 to 19:00 (UTC), 2 Oct 2011.

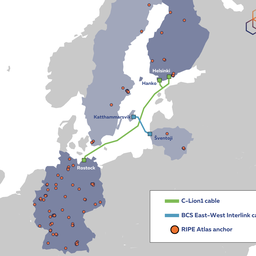

We looked at this event using DNSMON, a system run by the RIPE NCC that monitors the global DNS infrastructure by querying it at high fidelity from a network of vantage points around the world.

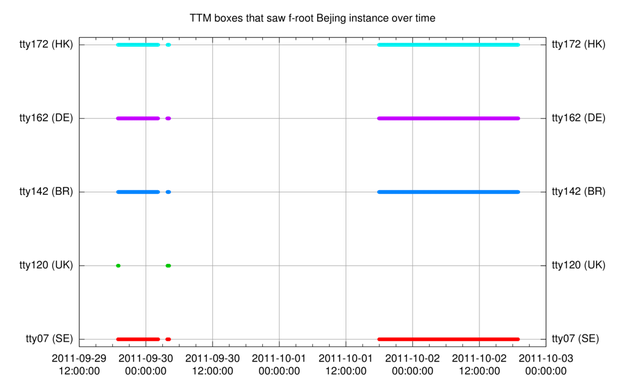

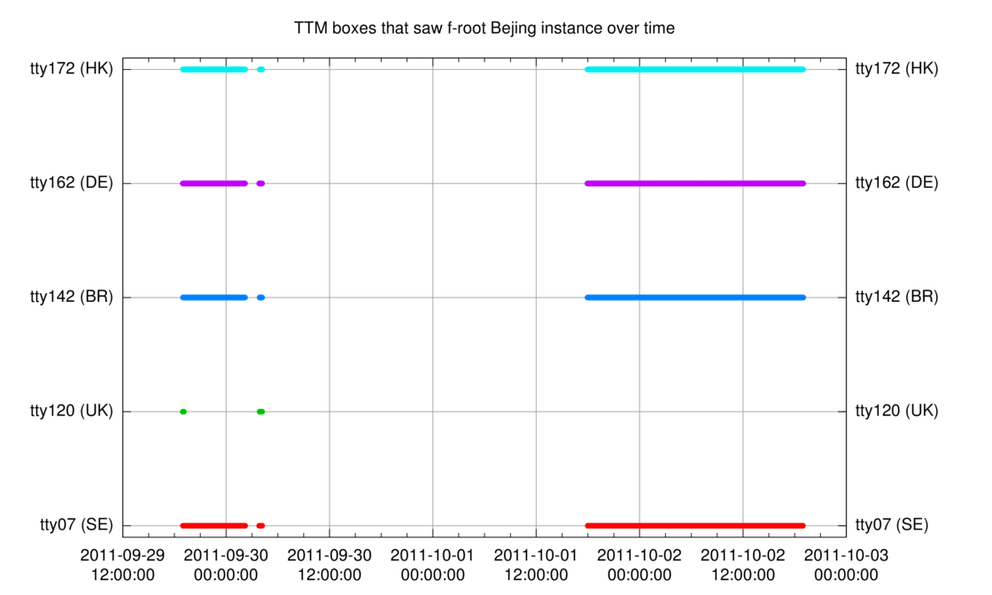

DNSMON captures the hostname of the root servers it queries by means of "magic" hostname.bind queries. These queries are done and recorded approximately every minute. We looked through the August, September and October data up until today to see if we could find the hostnames of the Beijing F-root instance (pek2a and pek2b). The results are plotted in Figure 1.
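As an illustration of what such a query looks like on the wire, here is a minimal sketch in Python that builds a TXT query for hostname.bind in the CHAOS class and extracts the first string from the answer. This is not DNSMON's actual code; the function names `build_query`, `parse_first_txt` and `query_hostname_bind` are our own for this example, and the parser assumes the server echoes the question section unchanged.

```python
import socket
import struct

TYPE_TXT = 16    # TXT record type
CLASS_CHAOS = 3  # CH class, used for the "magic" hostname.bind name


def build_query(name, qtype=TYPE_TXT, qclass=CLASS_CHAOS, query_id=0x1234):
    """Build a minimal DNS query packet (no EDNS) for the given name."""
    # Header: ID, flags (standard query), QDCOUNT=1, ANCOUNT=NSCOUNT=ARCOUNT=0
    header = struct.pack("!HHHHHH", query_id, 0x0000, 1, 0, 0, 0)
    qname = b"".join(
        bytes([len(label)]) + label.encode("ascii")
        for label in name.rstrip(".").split(".")
    ) + b"\x00"
    return header + qname + struct.pack("!HH", qtype, qclass)


def parse_first_txt(response, query_len):
    """Return the first TXT string of the first answer record.

    Assumption: the question section is echoed unchanged, so the answer
    section starts at offset query_len.
    """
    ancount = struct.unpack("!H", response[6:8])[0]
    if ancount == 0:
        raise ValueError("no answer records in response")
    pos = query_len
    # Skip the owner name: a label sequence and/or a compression pointer.
    while True:
        length = response[pos]
        if length & 0xC0 == 0xC0:  # compression pointer is two bytes long
            pos += 2
            break
        pos += 1 + length
        if length == 0:  # root label ends the name
            break
    # Fixed part of the record: TYPE, CLASS, TTL, RDLENGTH (10 bytes)
    pos += 10
    txt_len = response[pos]
    return response[pos + 1:pos + 1 + txt_len].decode("ascii", "replace")


def query_hostname_bind(server, timeout=3.0):
    """Ask one server address which instance is answering (UDP, port 53)."""
    query = build_query("hostname.bind")
    family = socket.AF_INET6 if ":" in server else socket.AF_INET
    with socket.socket(family, socket.SOCK_DGRAM) as sock:
        sock.settimeout(timeout)
        sock.sendto(query, (server, 53))
        response, _ = sock.recvfrom(4096)
    return parse_first_txt(response, len(query))
```

Calling `query_hostname_bind("2001:500:2f::f")` from a host with IPv6 connectivity should return the name of whichever F-root instance that host currently reaches. The result depends entirely on the vantage point, which is what makes the query useful for this kind of analysis.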

Out of a total of 29 IPv6-enabled DNSMON locations, five monitoring points saw the Beijing F-root instances. These five are physically located in Stockholm (SE), London (GB), Sao Paulo (BR), Hamburg (DE) and Hong Kong (HK).

Four out of five monitoring points had the same pattern of seeing the Beijing instance. The pattern consists of three separate intervals where the Beijing instance was seen. The third interval is the 1-2 October event, for which there was a good write-up on the BGPMon blog; as far as we are aware, the first two had not been previously detected. The first interval was from 18:57:51 (UTC), 29 September to 02:13:14 (UTC), 30 September. The second interval, which was very short, lasted from 03:49:55 to 04:11:24 (UTC) on 30 September.
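To make the notion of an "interval" concrete, the following sketch shows one way to turn per-minute hostname.bind samples from a single monitor into intervals during which the Beijing instance was seen. It is an illustration, not the analysis code we ran: the function name `leak_intervals`, the five-minute `max_gap` and the sample format are assumptions made for this example.

```python
from datetime import datetime, timedelta

# Instance names from the article; matching is done on the first label only.
LEAKED_INSTANCES = {"pek2a", "pek2b"}


def leak_intervals(samples, leaked=LEAKED_INSTANCES,
                   max_gap=timedelta(minutes=5)):
    """Group sightings of leaked instances into (start, end) intervals.

    samples: iterable of (datetime, hostname) tuples, sorted by time.
    Sightings more than max_gap apart start a new interval (assumed value).
    """
    intervals = []
    start = last = None
    for timestamp, hostname in samples:
        if hostname.split(".")[0] not in leaked:
            continue  # some other instance answered; not a sighting
        if start is not None and timestamp - last <= max_gap:
            last = timestamp  # extend the current interval
        else:
            if start is not None:
                intervals.append((start, last))
            start = last = timestamp  # open a new interval
    if start is not None:
        intervals.append((start, last))
    return intervals
```

The `max_gap` tolerance is a design choice: with queries roughly every minute, a few lost or differently answered samples should not split one leak into many intervals, while a gap of more than an hour and a half, as between the first and second interval above, clearly should.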

The odd one out is tt120, which saw the Beijing F-root instance for only approximately five minutes at the beginning of the first interval, whereas the other vantage points that saw this effect observed it for the whole interval. It did see the Beijing F-root instance during the full second interval. It didn't see the Beijing F-root instance at all in the third interval. We currently don't know how or why tt120 is different from the other four. If you have information you can share about this, please comment below.

We looked back as far as 1 August 2011, which is as far as we have detailed data available. Apart from what is shown in Figure 1, we didn't find these or any other DNSMON boxes seeing the Beijing F-root instance.

Conclusions

As already mentioned elsewhere, DNSSEC lets a validating resolver detect any change made to DNS data in flight between DNS servers, so a rewritten response is rejected rather than accepted. Implementing DNSSEC can therefore eliminate concerns about the GFC in this respect.

Stephane Bortzmeyer has also stated, comparing the IPv6 Internet to the IPv4 Internet: "Because the IPv6 Internet [topology currently] is much more flat, a very small change can dramatically alter the catchment of an anycast instance." The effects of route leaks in IPv6, as in this Beijing F-root case, should become less pronounced as the IPv6 network continues to mature.

The RIPE NCC could implement a monitoring system in DNSMON to warn both DNSMON hosts and root-server operators if anycast nodes that should be local show up in places where people don't want or expect them. If you think this is a good idea, please let us know.
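A rough sketch of the core check such a warning system would need is shown below. Nothing like this exists in DNSMON today; the function name `unexpected_sightings` and the shape of the `allowed` mapping are hypothetical, chosen only to illustrate the idea.

```python
def unexpected_sightings(observations, allowed):
    """Return sorted (monitor, instance) pairs that should trigger a warning.

    observations: iterable of (monitor_id, hostname) from hostname.bind data.
    allowed: dict mapping each local-only instance name to the set of
             monitors that are expected to reach it (hypothetical policy).
    """
    alerts = set()
    for monitor, hostname in observations:
        instance = hostname.split(".")[0]
        # Only instances listed in the policy are treated as local-only.
        if instance in allowed and monitor not in allowed[instance]:
            alerts.add((monitor, instance))
    return sorted(alerts)
```

For example, with `allowed = {"pek2a": set(), "pek2b": set()}`, meaning no DNSMON monitor is expected to reach the Beijing nodes, any observation of pek2a or pek2b would be reported together with the monitor that saw it.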

If anybody has any corroborating data, please comment below. We're also still looking into this, so watch this space.

Comments 4


Anonymous •

...The RIPE NCC could implement a monitoring system in DNSMON to warn both DNSMON hosts and root-server operators if anycast nodes that should be local show up in places where people don't want or expect them. If you think this is a good idea, please let us know....

Yes, additional points of view and triggers will always be a help in keeping this sort of service stable for everyone.

Anonymous •

It is a good idea to use the existing DNSMON infrastructure. I think that would be excellent use of RIPE NCC's resources.

I am very surprised that organizations are wildly deploying pieces of critical internet infrastructure in multiple locations around the world, but are not simultaneously monitoring how their anycasted service instances are visible around the world. I am happy to see that RIPE NCC actually had some historical data on this and was able to spot that the DNS queries had been diverted to Beijing already before.

It was just a funny coincidence that I happened to notice this and alerted the people this time (and this was more than 24 hours after it started).

This was not the first time this has happened. Shouldn't we already learn something? Combined with things like what happened to DigiNotar, this becomes scary. DNS hijack makes using fraudulent certificates for massive or targeted MITM attacks trivial.

Anonymous •

From my personal vantage point, this is/was just another incident with this beast, be that v4 or v6 is just a minor variation imho (ignoring the GFC stuff for a moment).

As I reported to the DNS-WG in Rome last year ("The dancing F-Root" presentation) my Atlas probe in Vienna was seeing an anycast instance of F in Venezuela (at a few hundred ms RTT) instead of a close one (at a couple of 10 ms RTT).

This is not picking on the operators of F, but rather wondering whether the 'net has or had similar issues with other anycast clouds that use similar approaches to "manage" (or rather prevent) visibility of their nodes, and that went unnoticed.

Anonymous •

We are in the process of upgrading RIPE Atlas probes to a firmware that can do many more measurements, both more simultaneous measurements and more kinds of measurements. Some of the probes that were upgraded early did record answers from F in Beijing over IPv6. One of them is at my house and uses a DSL connection from xs4all with native IPv6, where this started at Sat Oct 1 18:01:08 UTC and ended at Sun Oct 2 19:01:11 UTC.

Here are also two traceroutes from that probe. Before:

    RESULT 6004 ongoing 1317491366 traceroute to 2001:500:2f::f (2001:500:2f::f), 32 hops max, 16 byte packets
     1 2001:980:3500:1:224:feff:fe19:b61c 1.526 ms 1.614 ms 1.669 ms
     2 2001:888:0:4601::1 10.604 ms 10.119 ms 10.064 ms
     3 2001:888:0:4603::2 9.436 ms 9.405 ms 9.325 ms
     4 2001:888:1:4005::1 9.723 ms 10.076 ms 10.270 ms
     5 2001:7f8:1::a500:6939:1 10.061 ms 18.985 ms 9.942 ms
     6 2001:470:0:3f::1 18.148 ms 18.081 ms 20.498 ms
     7 2001:470:0:3e::1 88.401 ms 94.233 ms 85.504 ms
     8 2001:470:0:1c6::2 103.191 ms 109.736 ms 102.862 ms
     9 2001:470:1:34::2 123.513 ms 123.706 ms 123.376 ms
    10 2001:500:2f::f 123.175 ms 123.076 ms 123.218 ms

and during:

    RESULT 6004 ongoing 1317493151 traceroute to 2001:500:2f::f (2001:500:2f::f), 32 hops max, 16 byte packets
     1 2001:980:3500:1:224:feff:fe19:b61c 1.517 ms 1.442 ms 1.679 ms
     2 2001:888:0:4601::1 10.495 ms 10.041 ms 14.487 ms
     3 2001:888:0:4603::2 10.117 ms 9.625 ms 9.507 ms
     4 2001:888:1:4005::1 10.919 ms 10.569 ms 9.855 ms
     5 2001:7f8:1::a500:6939:1 10.429 ms 10.547 ms 18.925 ms
     6 2001:470:0:3f::1 28.205 ms 19.843 ms 23.405 ms
     7 2001:470:0:3e::1 91.845 ms 94.497 ms 96.812 ms
     8 2001:470:0:10e::1 147.166 ms 146.861 ms 146.905 ms
     9 2001:470:0:16b::2 306.184 ms 305.656 ms 305.636 ms
    10 2001:7fa:0:1::ca28:a1be 314.605 ms 307.503 ms 309.400 ms
    11 2001:252:0:101::1 356.118 ms 366.003 ms 356.810 ms
    12 2001:252:0:1::4 352.575 ms 353.977 ms 352.047 ms
    13 2001:e18:10cc:101:102::1 358.226 ms 353.353 ms 361.451 ms
    14 2001:e18:10cc:101:106::2 359.442 ms 361.659 ms 361.094 ms
    15 2001:cc0:1fff::2 358.191 ms 362.187 ms 367.455 ms
    16 2001:dc7:ff:1005::1:6 361.919 ms 355.630 ms 394.090 ms
    17 2001:500:2f::f 356.187 ms 358.515 ms 352.517 ms

So once all RIPE Atlas probes are upgraded to the new firmware (4.260) we will have even more vantage points to monitor this from. You can follow the firmware upgrade process here: http://atlas.ripe.net/dynamic/stats/stats.probe_versions.png