In Part 1 and Part 2 of this article we showed various statistics on IPv6 measurements of web clients and caching resolvers. In this part we explain how the measurements were done.

It is relatively easy to measure the IPv6 capabilities of end users' browsers: you make them fetch content from an IPv4-only, an IPv6-only and a dual-stack web server, and look at the web server log files to see what was fetched and, in the dual-stack case, whether IPv4 or IPv6 was used. In our measurements these are called the h-tests (for HTTP), specifically h4, h6, and hb ('b' for both IPv4 and IPv6). This idea is also the basis of the client IPv6 capability measurements done by, amongst others, Google, Sander Steffann (http://ipv6test.max.nl), TNO, and Tore Anderson (Redpill Linpro).

To measure the caching DNS resolver used by an end user, we generate a unique hostname for the content to be fetched. Because a unique name cannot already be cached, the caching resolver is forced to do a lookup at the authoritative name server for that specific hostname. By using different types of delegation paths for the unique hostname, one can measure the IPv6 capabilities of the DNS resolver(s) used by a specific end user. We tested delegation paths with an IPv4-only component, an IPv6-only component, and a dual-stack component. We call these the d-tests (for DNS): d4, d6 and db.

We control both the authoritative name server (AuthDNS) and the name server that delegates to it (DelDNS). For all tests, a caching resolver iteratively follows the delegation path until it reaches the DelDNS server. In the d4 case, this server responds to the caching resolver with an IPv4-only referral to the AuthDNS server (more specifically, the hostname returned in the name server record only has an A record). In the d6 case, it responds with an IPv6-only referral to the AuthDNS server. If the caching resolver is not IPv6 capable, it cannot follow that referral, so the hostname the web client asked for fails to resolve.
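The referral behaviour described above can be sketched as a small lookup keyed on the d-label. This is an illustrative model, not the configuration of our name servers: the function names are hypothetical, and the `db` entry assumes that "dual stack" means the AuthDNS name has both an A and an AAAA record.

```python
# Hypothetical model of the DelDNS referral: which address record types exist
# for the AuthDNS name that the referral points to, chosen by the d-label.
REFERRAL_BY_D_LABEL = {
    "d4": ("A",),         # IPv4-only referral (as described in the article)
    "d6": ("AAAA",),      # IPv6-only referral (as described in the article)
    "db": ("A", "AAAA"),  # assumption: dual stack offers both record types
}


def referral_record_types(qname):
    """Return the address record types offered for the AuthDNS name."""
    labels = qname.lower().rstrip(".").split(".")
    # Skip the first label: it is the unique ID, not a test label.
    for label in labels[1:]:
        if label in REFERRAL_BY_D_LABEL:
            return REFERRAL_BY_D_LABEL[label]
    raise ValueError("no d-label found in %r" % qname)


def resolver_can_follow(qname, resolver_has_ipv6, resolver_has_ipv4=True):
    """True if the caching resolver can reach AuthDNS over some offered family."""
    types = referral_record_types(qname)
    return ("A" in types and resolver_has_ipv4) or (
        "AAAA" in types and resolver_has_ipv6
    )
```

For example, `resolver_can_follow("abc123.h4.d6.test.example.com", resolver_has_ipv6=False)` is false, which models the resolution failure an IPv4-only resolver produces in the d6 case.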

In our measurement, we combined the HTTP and DNS tests described above. We used four of the possible nine (3x3) combinations of HTTP and DNS tests:

* h4.d4: This is a baseline measurement, and should succeed for all clients and resolvers.

* h4.d6: This measures the capability of the caching resolver to reach the IPv6 Internet.

* h6.d4: This measures the capability of the end-user system to reach the IPv6 Internet.

* hb.db: This measures protocol preference for both the end-user system and the caching resolver, since both IPv4 and IPv6 are offered.
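How the four combinations translate into conclusions can be sketched as follows. The function and field names are hypothetical; the protocol preference for hb.db is assumed to be read from the server logs and passed in, since success alone does not reveal which protocol was used.

```python
def interpret(succeeded, hb_http_family=None):
    """Map the set of succeeded sub-tests to capability flags.

    succeeded      -- set of test names, e.g. {"h4.d4", "h6.d4"}
    hb_http_family -- "IPv4" or "IPv6" as seen in the web log for hb.db
                      (assumed to be extracted elsewhere)
    """
    if "h4.d4" not in succeeded:
        # Without the baseline, nothing can be concluded about this client.
        return None
    return {
        # h4.d6 only works if the resolver can reach AuthDNS over IPv6.
        "resolver_ipv6": "h4.d6" in succeeded,
        # h6.d4 only works if the client can reach the web server over IPv6.
        "client_ipv6": "h6.d4" in succeeded,
        # hb.db offers both protocols, so the logged family is a preference.
        "client_prefers": hb_http_family if "hb.db" in succeeded else None,
    }
```

A client that fetched h4.d4, h4.d6 and hb.db (the latter over IPv4) would be reported as having an IPv6-capable resolver, no IPv6 connectivity of its own, and a preference for IPv4.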

The measurement is implemented as a piece of JavaScript served from a volunteering website. If somebody visits this website with a JavaScript-enabled browser, the JavaScript is fetched and executed. When it executes, it generates a unique ID and uses it to insert four image objects into the DOM of the HTML page, with URLs of the form:

<uniqueID>.h4.d6.test.example.com/image.png?<uniqueID>.h4.d6

<uniqueID>.h6.d4.test.example.com/image.png?<uniqueID>.h6.d4

<uniqueID>.hb.db.test.example.com/image.png?<uniqueID>.hb.db

<uniqueID>.h4.d4.test.example.com/image.png?<uniqueID>.h4.d4

which the web browser will then try to fetch. We collect web logs and DNS query logs on the web server and the authoritative name server that are part of our measurement infrastructure. This allows us to correlate the sub-measurements that share a unique ID into a single measurement of the IPv4 and IPv6 capabilities of the end-user system and the caching resolver(s) it used. The JavaScript only triggers the measurements once per day per site it is served from, so it does not over-measure web clients that visit the same page multiple times per day.

Since 5 March 2010, the baseline measurement (h4.d4) is executed five seconds after the other measurements. This makes sure that users who navigate away from the page serving the JavaScript don't skew the measurement results. All measurements where the h4.d4 test doesn't succeed are discarded.

We see that about 1% of dual-stack sub-measurements (hb.db) fail. Others have seen failure rates of 0.11% (Tore Anderson) to 0.13% (Sander Steffann / ipv6test.max.nl). This discrepancy led us to conclude that this setup is not suitable for testing dual-stack failures. We therefore decided to discard measurements with failed dual-stack sub-measurements too, after making sure that this didn't skew our measurement results significantly (see footnote [1] for details). What causes this loss is still an open question (if you have ideas on this, let us know).

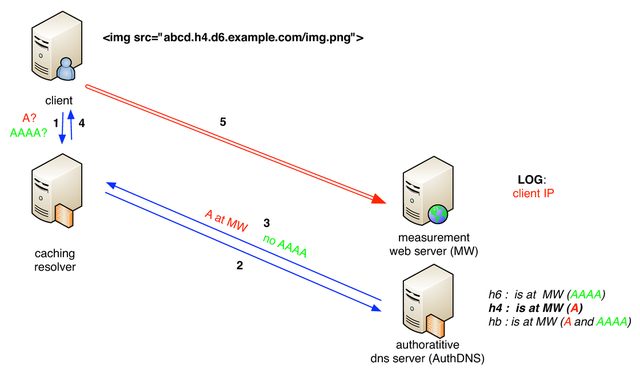

Figure 1 shows an example of the HTTP measurement that tests web client capabilities. In this case an IPv4-only measurement is done (h4). The web client asks its resolver for A and AAAA records (1). After following the delegation chain, the resolver queries the authoritative DNS server (2). In this case the hostname contains the h4 label, so the authoritative DNS server returns an A record and no AAAA record (3). The client receives the result of the DNS resolution process (4) and connects via HTTP to the measurement web server over IPv4 (5). The measurement web server logs the client connection.

Figure 1: HTTP IPv4 test
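Steps (3) to (5) of Figure 1 can be modelled in a few lines. This is a sketch with hypothetical function names. Only the h4 behaviour (an A record and no AAAA record) is stated in the text; the h6 and hb entries are assumed by analogy with the IPv6-only and dual-stack web servers.

```python
# Hypothetical model of the AuthDNS answer, chosen by the h-label.
ANSWER_BY_H_LABEL = {
    "h4": ("A",),         # stated in the article: A record, no AAAA record
    "h6": ("AAAA",),      # assumption: IPv6-only web server
    "hb": ("A", "AAAA"),  # assumption: dual-stack web server
}


def answer_record_types(qname):
    """Return the record types AuthDNS answers with for this hostname."""
    labels = qname.lower().rstrip(".").split(".")
    for label in labels[1:]:  # the first label is the unique ID
        if label in ANSWER_BY_H_LABEL:
            return ANSWER_BY_H_LABEL[label]
    raise ValueError("no h-label found in %r" % qname)


def usable_families(qname, client_has_ipv6, client_has_ipv4=True):
    """Address families over which the client could fetch the image."""
    types = answer_record_types(qname)
    families = []
    if "A" in types and client_has_ipv4:
        families.append("IPv4")
    if "AAAA" in types and client_has_ipv6:
        families.append("IPv6")
    return families
```

An empty result corresponds to a fetch that never shows up in the web log. For hb, two usable families mean the logged protocol reflects the client's preference.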

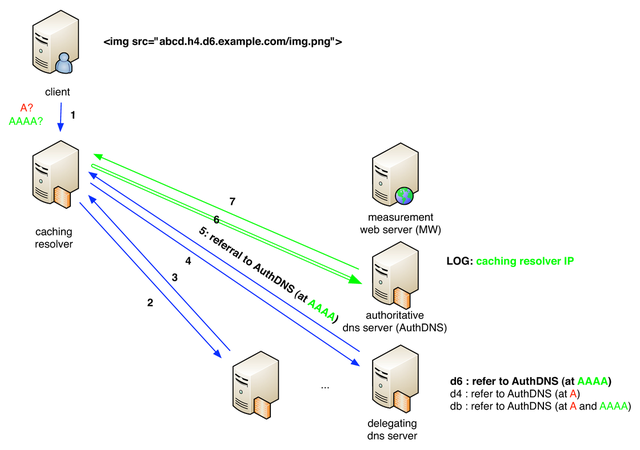

Figure 2 shows an example of measuring the caching DNS resolver, in this case measuring its IPv6 capability (d6). The web client asks its resolver for A and AAAA records (1). After following the delegation chain (2, 3), the caching resolver queries the last delegating DNS server (4). In this case the hostname being requested contains the d6 label, so the delegating DNS server responds with a referral to the authoritative DNS server, using a name for which it only provides an IPv6 address (5). Only if the caching DNS resolver is IPv6 capable will it be able to query the authoritative DNS server over IPv6 (6) and get a response (7). Step (7) corresponds to step (4) in Figure 1, and the response in this step depends on the value of the h-test (h4, h6, or hb).

Figure 2: DNS IPv6 test

The JavaScript we used is here, with code heavily inspired by Sander Steffann's excellent ipv6test.

Footnotes:

[1] Since the 1% loss on dual-stack measurements did not agree with the aforementioned measurements and could skew our results significantly, we took a closer look at these measurements. The group of measurements that had this deficiency was not significantly different from the total group of measurements when looking at the OS or browser used, as identified by the user-agent strings in the logs we collect. This builds confidence that the population in which we observe the dual-stack measurement losses is not different from the total population we measure. On top of that, these measurements were done using a script hosted on www.ripe.net, which is also dual-stacked, so the chances of us measuring loss due to dual-stacking should be significantly lower than the 0.1% that other studies reported. We looked at 525 cases where we had multiple measurements on multiple days from the same IPv4 address and user-agent, and where the dual-stack sub-measurement failed at least once. In 85% of these cases, successful dual-stack sub-measurements were also observed. The other 15% may be indicative of persistent dual-stack connectivity problems. Applied to the roughly 1% of measurements with a failed dual-stack sub-measurement, that would mean about 0.15% of our total measurement population has dual-stack connectivity problems, which is in the same ballpark as the two other measurement studies mentioned before. We have not been able to identify the exact source of the intermittent loss of measurements (HTTP proxies?), but decided this measurement was not well suited for identifying loss of function and discarded all measurements where the dual-stack sub-measurement didn't succeed. (If you have suggestions on what could cause this loss, again please let us know.)
