The prefix 128.0/16 is filtered by default on Juniper devices running JUNOS software versions up to and including 11.1. We looked at three ways to get a rough estimate of how much filtering of 128.0/16 is taking place on the Internet.

Some IP address blocks are more special than others; RFCs contain a lot of exception cases, and worse, these exceptions sometimes come and go. The address block 128.0/16 is one of these cases. It was initially reserved by the IANA, but in RFC 3330 (September 2002) this block was released to the free pool. From RFC 3330:

128.0.0.0/16 - This block, corresponding to the numerically lowest of the former Class B addresses, was initially and is still reserved by the IANA. Given the present classless nature of the IP address space, the basis for the reservation no longer applies and addresses in this block are subject to future allocation to a Regional Internet Registry for assignment in the normal manner.

RFC 3330 was in turn obsoleted by RFC 5735 (January 2010), which doesn't mention 128.0/16 at all.

As became apparent recently in this thread on the nsp-juniper mailing list, Juniper devices running JUNOS versions up to 11.1 still list 128.0/16 as a martian by default. This means that any device running JUNOS with this default will ignore routing information about this address block. The thread also contains pointers on how to work around or fix the problem.
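To illustrate what a martian entry does to incoming routes, here is a minimal Python sketch. It is not JUNOS code and the martian list is a hypothetical one-entry example, not the actual JUNOS default list. The function names `is_martian` and `accepted_routes` are made up for this illustration.

```python
import ipaddress

# Hypothetical martian list for illustration only; NOT the real JUNOS defaults.
MARTIANS = [ipaddress.ip_network("128.0.0.0/16")]


def is_martian(prefix, martians=MARTIANS):
    """Return True if prefix equals, or is more specific than, a martian entry."""
    net = ipaddress.ip_network(prefix)
    return any(net.subnet_of(m) for m in martians)


def accepted_routes(prefixes, martians=MARTIANS):
    """Keep only the prefixes that are not covered by a martian entry."""
    return [p for p in prefixes if not is_martian(p, martians)]


if __name__ == "__main__":
    received = ["128.0.24.0/24", "193.0.0.0/21"]
    # The more specific out of 128.0/16 is silently dropped.
    print(accepted_routes(received))
```

A router that behaves like this never installs a route for anything inside 128.0/16, so traffic towards that block has nowhere to go even though the announcement was received.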

We wanted to know the scale of the problem, so we assessed it in three different ways.

1. BGP debogon

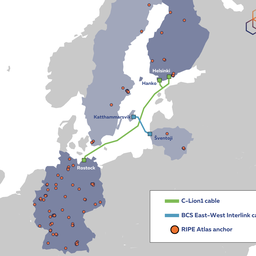

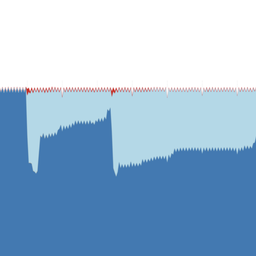

Our BGP debogon effort shows that roughly 20% of the ASes we measured were filtering a more specific prefix that we announced out of 128.0/16. The exact measurement methodology is described at the bottom of our debogon daily report.

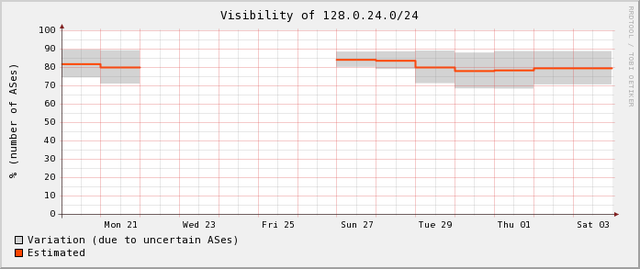

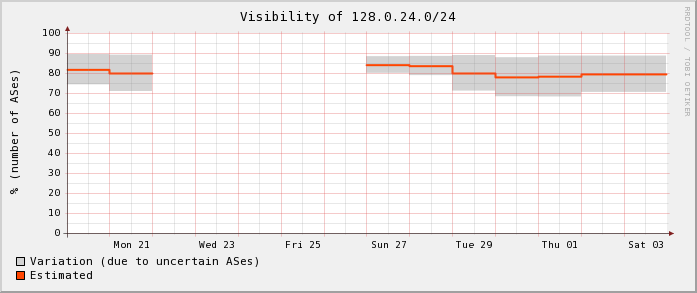

Figure 1: Debogon results for 128.0.24.0/24. Roughly 20% of ASes were suspected of filtering this prefix.

If we look at RIS peers, we see that 80 out of 97 see the /21 we announce out of the 128.0/16 block, so 18% of RIS peers don't see this prefix at the moment.

2. Active measurement

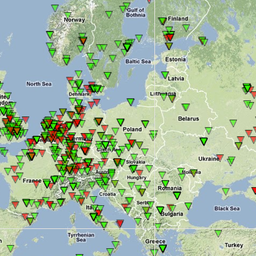

Since we have User Defined Measurements for RIPE Atlas in beta-testing now, we started a measurement to ping the target 128.0.0.1 from 30 randomly selected probes, of which 12 (40%) could not reach this address. We will do measurements from a larger set of probes, and expect to publish more on that soon.

3. Passive measurement

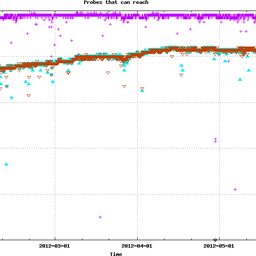

With the advent of the Conficker worm, which scans random IP addresses at relatively high frequency from a relatively large population of end-hosts, the Internet has become a place with a lot of background radiation. By looking at the amount of scanning that is collected on a darknet (the UCSD network telescope) and comparing that to what we receive on parts of 128.0/16, it is possible to infer which source IP addresses on the Internet are able to reach the darknet, but not 128.0/16. In this case, devices running JUNOS 11.1 or earlier are a likely cause, if they are on the critical path between the source of scanning and 128.0/16.

To make sure that the false positive rate is as low as possible, we only considered the top 100 prefixes (by number of packets per hour) that were seen on the network telescope. For the address space we announce from 128.0/16 we calculated the expected packet rate to be at least 13 packets per hour (see footnote 1) for the prefix that received the least traffic.

We took data for four hours. In each of these hours, 35% to 50% of the top 100 prefixes that sent scanning traffic to the larger darknet did not send a single packet to the address space we announce out of 128.0/16, which hints at 128.0/16 being unreachable from these networks.

We spot-checked some large prefixes out of the ones that we didn't receive scanning traffic from, and for all of these we found that traceroutes sourced from 128.0.0.1 stop right before entering the ASes announcing these prefixes.
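The comparison described in this section can be sketched roughly as follows. This is an illustrative Python sketch, not the code we ran: the function names and the input format (one source prefix per observed packet) are assumptions made for the example.

```python
from collections import Counter


def top_prefixes(packet_sources, n=100):
    """Return the n source prefixes that sent the most packets.

    packet_sources: iterable with one source prefix per observed packet
    (an assumed input format for this sketch).
    """
    return [prefix for prefix, _ in Counter(packet_sources).most_common(n)]


def suspected_unreachable(telescope_sources, test_sources, n=100):
    """Find top telescope sources that never show up on the test prefixes.

    Returns (missing_prefixes, fraction_missing).
    """
    top = top_prefixes(telescope_sources, n)
    seen = set(test_sources)
    missing = [p for p in top if p not in seen]
    fraction = len(missing) / len(top) if top else 0.0
    return missing, fraction


if __name__ == "__main__":
    # Toy data using documentation prefixes; real input would be one hour
    # of packet captures from both vantage points.
    telescope = ["192.0.2.0/24"] * 5 + ["198.51.100.0/24"] * 3 + ["203.0.113.0/24"]
    in_128 = ["192.0.2.0/24"]
    print(suspected_unreachable(telescope, in_128, n=2))
```

Restricting the comparison to the busiest sources is what keeps the false positive rate low: a prefix that sends tens of thousands of packets per hour to the large darknet should, by chance alone, also hit the much smaller address space in 128.0/16 several times per hour.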

Conclusion

Our current rough estimates, based on small sample sizes, show that 20% to 50% of ASes/prefixes have 128.0/16 filtered. While we expect 50% to be on the high side, our estimates show the scale of the problem. Prefixes from 128.0/16 are currently not usable if one wants to have global Internet visibility in BGP. The level of filtering needs to come down for this address block to be usable on the global Internet.

DIY (Do It Yourself)

It's easy to see if the network you are currently in is affected (assuming no firewall is blocking all ICMP traffic):

ping 128.0.0.1

If you want to see the reverse (i.e. ping from 128.0.0.1), the RIS debogon project has an interface that lets you traceroute/ping from prefixes that are being debogonised:

http://www.ris.ripe.net/cgi-bin/debogon.cgi

If you see differences between traceroutes from 128.0.0.1 and (for instance) 193.0.0.0, these are likely caused by Juniper's obsolete martian filtering.

Footnote

[1] For the top 100 prefixes we looked at, packets per hour ranged from 54k to 422k. The address space in 128.0/16 we captured traffic for is a factor 8k smaller than the large darknet. We know that the scanning is in fact not random, since it only uses the first half of the value range of octets two and four. Because of the bias in the second octet, only half of the darknet will receive this scanning traffic, while all of the 128.0/16 block will receive it. The bias in the fourth octet affects both in the same way. This makes the effective difference between the sizes of the large darknet and the announced parts of 128.0/16 a factor 4k. Taking the smallest amount of traffic per hour from the top 100 prefixes we saw in the large darknet (54k packets), the expected value on the address space we announce from 128.0/16 is 13 packets per hour.
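The arithmetic in this footnote can be reproduced with a few lines of Python. The function and variable names are made up for this sketch, and "k" is taken to mean 1,000. The calculation yields 13.5, which is the "at least 13" packets per hour quoted in the text.

```python
def expected_packets_per_hour(darknet_rate, size_ratio, bias_factor):
    """Scale a per-hour packet rate seen on the large darknet down to the
    smaller address space announced out of 128.0/16.

    size_ratio:  how many times larger the darknet is (8k in the footnote).
    bias_factor: correction for the second-octet scanning bias; only half of
                 the darknet is hit, all of 128.0/16 is, so the factor is 2.
    """
    return darknet_rate * bias_factor / size_ratio


if __name__ == "__main__":
    lowest_rate = 54_000  # packets/hour from the quietest of the top 100 prefixes
    print(expected_packets_per_hour(lowest_rate, size_ratio=8_000, bias_factor=2))
```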

Comments

Nick Hilliard •

It would be really interesting to see what sort of visibility the Atlas probes had into the 128.0/16 filtering problem. Certainly the data would be skewed because the probe sites are self-selecting rather than truly random. However, a bunch of traceroutes would turn out useful data.

Emile Aben •

Hi Nick, We're working on Atlas and 128.0/16. Please watch this space :) We do collect traceroute outputs from the debogon-cgi, so we'll also likely have some data to analyze from that.

Nick Hilliard •

Incidentally, I recently saw a graphviz visualisation of lots of separate traceroutes to a single point. This might be an interesting way of visualising bogon sinks, and it was certainly a lot easier to see connectivity loss via a graph like this rather than depending on the output of 50 traceroutes, one on top of the next. Graphing the connectivity tree by IP address created quite a lot of clutter, but perhaps it would be possible to graph the traceroute connectivity by intermediate ASN?

Emile Aben •

Hi Nick, This is indeed interesting stuff, and visualising traceroutes to a single point is something that is definitely on the agenda for Atlas. Your comment about IP vs AS-level traceroute is very true; multiple IP traceroutes in the same graph become a total mess very quickly. For AS-level traceroutes one could think about something BGPlay-like (http://bgplay.routeviews.org/). For traceroutes to 128.0/16 we're looking into last-reachable AS at the moment. Again: watch this space! :)