Managing ~600 infrastructure devices with a three-person team demands careful design choices. Based on experience in Central Asia, this article examines how standardisation, observability, and operational discipline support reliability at scale.

Running Internet infrastructure is rarely about building a perfect architecture, but rather about making the existing one work reliably. In many regions, especially outside traditional tech hubs, engineers operate complex production environments with surprisingly small teams and infrastructure that evolves as it grows. The data centre I manage is located in Central Asia, a place where this combination of infrastructure scale and small teams is not uncommon.

In this article, I share operational lessons from running a data centre environment that supports around 600 infrastructure devices across multiple network domains - Internet edge services, corporate infrastructure, VoIP interconnections, and service platforms - all managed by a small engineering team.

My goal isn't to present a textbook design, but to share practical, field-tested decisions that help maintain reliability and security even as the infrastructure continues to scale.

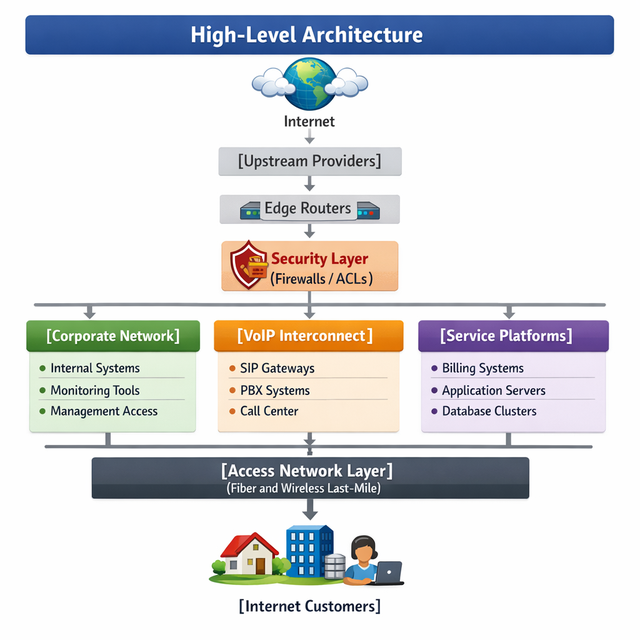

Infrastructure

The data centre environment I manage acts as a critical integration point between multiple service layers. It supports several distinct operational domains:

- Internet edge infrastructure connecting the network to upstream providers and partners

- Corporate IT infrastructure supporting internal systems

- Service platforms including billing systems and other internal services

- VoIP interconnections used for SIP connectivity with partners and enterprise customers

- Access networks providing connectivity to non-mobile customers via fibre and wireless last-mile technologies

While the mobile core network is managed by a separate infrastructure team, these domains all converge in the data centre, making its reliability critical to the entire operation.

Behind these services is a physical environment that has grown organically over time. Today, it includes:

- Virtualisation clusters running production workloads

- Fibre Channel storage systems for shared storage

- Network segmentation across multiple security domains

- Firewalls, monitoring tools, and supporting systems

In total, the environment comprises approximately 600 infrastructure devices - servers, network equipment, and storage systems - that all support both customer-facing services and internal infrastructure.

This infrastructure is operated by a team of three full-time engineers. However, this number understates the real operational challenge. In practice, the team experiences constant turnover. Junior engineers join, train for a year, and often move on to other opportunities. By the time an engineer becomes fully productive, they are frequently recruited by larger organisations. This means we're always operating with a mix of experience levels, and our infrastructure must be resilient not only to hardware failures, but also to the loss of institutional knowledge.

This reality - 600 devices, three FTEs, and continuous staff changes - has shaped our approach more than any technology choice. We design for consistency because we cannot afford systems that require deep, undocumented expertise to maintain.

Operational challenges

Running a data centre with three engineers means that every unplanned task has an immediate cost - if one person stops to fix a server, 33% of the team's capacity disappears. This reality shapes everything we do.

Scale vs team size

With 600 devices, traditional "hands-on" management is impossible. We learned early that without automation and careful process design, routine maintenance alone would consume the entire team.
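A back-of-the-envelope calculation shows why. The sketch below is only an illustration: the 600 devices and three engineers come from this article, while the 45 minutes of hands-on work per device per month and the 160 working hours per engineer are assumed figures, not measurements from our environment.

```python
def maintenance_load(devices: int,
                     minutes_per_device_per_month: float,
                     engineers: int,
                     hours_per_engineer_per_month: float = 160.0) -> float:
    """Return the fraction of total team time spent on hands-on maintenance."""
    needed_hours = devices * minutes_per_device_per_month / 60.0
    available_hours = engineers * hours_per_engineer_per_month
    return needed_hours / available_hours


# 600 devices and 3 engineers are from the article.
# 45 minutes per device per month is an assumed, illustrative figure.
share = maintenance_load(devices=600, minutes_per_device_per_month=45, engineers=3)
print(f"Hands-on maintenance would take {share:.0%} of the team's time")
```

Under those assumptions, manual upkeep alone needs 450 of the team's 480 monthly hours, which leaves almost nothing for incidents or improvements.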

Infrastructure growth

Like most environments, ours grew organically. New services were added as customers requested them. Over time, this created architectural fragmentation - different configurations, different vendors, different operational procedures for similar tasks.

Reliability expectations

Despite the team size, customer expectations haven't changed. The infrastructure must maintain the same availability as larger operations. This forces us to design for failure, not just for normal operation.

Security requirements

Security controls that make sense for a large security team can become operational burdens for a small one. We need segmentation and access control that works without requiring a dedicated security engineer to manage it.

Engineering strategies

Over time, we developed several approaches that help us maintain stability with limited staff.

Infrastructure standardisation

Early on, we had three different server models with three different management interfaces. Troubleshooting meant remembering which vendor did what. We standardised on a single hardware vendor and consistent OS images. Now, a problem in one server is likely the same in another, making the fix more predictable.

Lesson: Standardisation isn't about vendor preference, but rather about making the infrastructure predictable enough that one engineer can handle any device.

Segmentation and access control

We separated management traffic from production traffic completely. Management interfaces are only accessible from specific jump hosts, and changes require logged sessions. Instead of adding work, this reduced mistakes. When something breaks, we can rule out a configuration change made from the wrong network, and the session logs show what was changed.

Lesson: Good security for a small team is security that prevents accidents, not just attacks.
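The jump-host rule can also be checked after the fact against session logs. The following is a minimal sketch, not our actual tooling: the address range, the log record layout, and the function names are all hypothetical, chosen only to show the idea.

```python
import ipaddress

# Hypothetical range: the real jump-host addresses are not published here.
JUMP_HOST_NETWORKS = [ipaddress.ip_network("10.255.0.0/28")]


def is_from_jump_host(source_ip: str) -> bool:
    """True if the source address belongs to one of the jump-host networks."""
    addr = ipaddress.ip_address(source_ip)
    return any(addr in net for net in JUMP_HOST_NETWORKS)


def audit_sessions(sessions):
    """Return management sessions that did not originate from a jump host.

    Each session is a dict with at least a 'source_ip' key (assumed layout).
    """
    return [s for s in sessions if not is_from_jump_host(s["source_ip"])]


sessions = [
    {"device": "core-sw-01", "source_ip": "10.255.0.5"},   # via jump host
    {"device": "core-sw-01", "source_ip": "192.0.2.44"},   # should not happen
]
for violation in audit_sessions(sessions):
    print("Unexpected management access:", violation)
```

In practice the firewall enforces the rule; a check like this only confirms that the enforcement still matches the intent.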

High availability design

We assume hardware will fail. Every critical service runs on at least two hosts. Shared storage is dual-controller. Power is dual-fed. This isn't about achieving five nines - it's about making sure that when something fails at 3 AM, it doesn't require an engineer to drive to the data centre.

Lesson: HA is a staffing strategy, not just a reliability feature.
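A rule such as "every critical service runs on at least two hosts" is easy to state and easy to lose as an environment grows. Here is a small sketch of how it could be audited, assuming a placement list exported from an inventory or virtualisation platform; the service and host names are invented for the example.

```python
from collections import defaultdict


def under_replicated(placements, minimum_hosts=2):
    """Return services that run on fewer than `minimum_hosts` distinct hosts.

    `placements` is an iterable of (service, host) pairs.
    """
    hosts_by_service = defaultdict(set)
    for service, host in placements:
        hosts_by_service[service].add(host)
    return sorted(
        service
        for service, hosts in hosts_by_service.items()
        if len(hosts) < minimum_hosts
    )


# Invented example data: two instances on the same host do not count as HA.
placements = [
    ("billing", "hv01"),
    ("billing", "hv02"),
    ("sip-proxy", "hv01"),
    ("sip-proxy", "hv01"),
]
print(under_replicated(placements))
```

Counting distinct hosts rather than instances is the point: two copies of a service on one hypervisor still mean a 3 AM drive when that host fails.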

Monitoring and observability

We built monitoring that alerts on symptoms, not causes. We don't want to know that "CPU is high" - we want to know that "response time is slow" so we can investigate. This reduces false alarms and ensures the three of us focus on real problems.

Lesson: With a small team, monitoring must filter noise aggressively.

Operational incident: When monitoring saved the day

It was a Tuesday afternoon when our storage latency graphs started climbing. Nothing dramatic - just a steady upward trend that crossed a threshold we'd set months earlier.

The alert didn't say "storage is down" or "server unreachable." It simply said: "average latency for VM disk operations exceeds 50ms for 15 minutes."
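One way to read that rule is "every per-minute average in the last 15 minutes is above 50 ms". The sketch below implements that interpretation; it is an assumption about the semantics, not the configuration of our monitoring platform, and the function name is made up.

```python
from typing import Sequence


def sustained_breach(latencies_ms: Sequence[float],
                     threshold_ms: float = 50.0,
                     window: int = 15) -> bool:
    """True if each of the last `window` samples exceeds the threshold.

    `latencies_ms` holds per-minute average latencies, oldest first.
    A short spike does not fire; only a sustained breach does.
    """
    if len(latencies_ms) < window:
        return False  # not enough history to call it sustained
    return all(value > threshold_ms for value in latencies_ms[-window:])


steady_climb = [30.0] * 10 + [52.0] * 15   # above 50 ms for 15 minutes
short_spike = [30.0] * 24 + [90.0]         # one bad minute
print(sustained_breach(steady_climb), sustained_breach(short_spike))
```

Requiring a full window is what keeps a rule like this quiet during brief bursts while still catching a slow upward trend.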

With three engineers, we don't have the luxury of ignoring gradual degradation, so we investigated immediately.

What we found was unexpected. Several application workloads had shifted their behaviour: more database writes, more logging, more I/O contention than we'd ever seen. The storage system itself was healthy, but it was working harder than it ever had in production.

Because we caught it early, we had time. We identified the noisy workloads, adjusted their resource allocations, and added more capacity to the affected cluster. No users noticed. No tickets were opened.

But if we hadn't had that alert? The next threshold was "storage offline."

What changed after this incident:

- We added more granular storage performance metrics to our monitoring

- We created weekly capacity reviews for storage and compute

- We adjusted alert thresholds based on real workload patterns, not vendor defaults
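Deriving a threshold from observed behaviour can be as simple as taking a high percentile of historical latency and adding headroom. The sketch below shows the idea using Python's standard library; the 99th percentile and the 20% headroom are illustrative assumptions, not the values we use.

```python
import statistics
from typing import Sequence


def suggest_threshold(history_ms: Sequence[float],
                      percentile: int = 99,
                      headroom: float = 1.2) -> float:
    """Suggest an alert threshold from historical latency samples.

    Takes the given percentile (1-99) of the history and multiplies it by
    a headroom factor so that normal peaks do not trigger alerts.
    """
    if not 1 <= percentile <= 99:
        raise ValueError("percentile must be between 1 and 99")
    # quantiles(n=100) returns 99 cut points; index p-1 is the p-th percentile.
    cut_points = statistics.quantiles(history_ms, n=100, method="inclusive")
    return cut_points[percentile - 1] * headroom


# Synthetic history for illustration only: latencies from 1 ms to 100 ms.
history = [float(ms) for ms in range(1, 101)]
print(f"Suggested threshold: {suggest_threshold(history):.1f} ms")
```

A threshold computed this way should be recalculated periodically, since the incident above happened precisely because workload behaviour changed.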

This experience reinforced a lesson we now apply everywhere: monitoring isn't about knowing when something breaks, but knowing before it breaks.

VoIP interconnection: A different kind of Internet traffic

Among the domains our data centre supports, the VoIP platform is perhaps the most demanding, not because it's complex, but because it's unforgiving. Web traffic can retransmit. Email can wait. But when an RTP packet carrying the audio of a SIP call is delayed or dropped, someone on the call notices immediately.

For a team of three engineers, this creates a specific challenge: VoIP problems are real-time, and they're customer-facing. A storage issue might affect internal systems; a VoIP issue affects active phone calls.

How we maintain stability:

- Logical separation: VoIP infrastructure runs in its own network segment, isolated from bursty traffic like backups or large file transfers

- Dedicated monitoring: we track jitter, latency, and packet loss on VoIP paths, not just device availability

- Traffic prioritisation: QoS markings ensure that when the network is congested, voice traffic is forwarded first

- Simple SIP interconnects: we keep peering configurations as minimal as possible; complexity in VoIP is the enemy of stability
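For the dedicated monitoring mentioned above, jitter is commonly estimated with the running interarrival formula from RFC 3550, where each new packet moves the estimate by one sixteenth of the difference. The sketch below applies that formula to timestamps in milliseconds; real RTP implementations work in RTP timestamp units, and the function names and sample values here are illustrative, not taken from our probes.

```python
from typing import Sequence


def interarrival_jitter(send_ms: Sequence[float], recv_ms: Sequence[float]) -> float:
    """Running jitter estimate in the style of RFC 3550.

    For consecutive packets, D is the change in transit time:
        D = (R_i - R_{i-1}) - (S_i - S_{i-1})
    and the estimate is updated as J = J + (|D| - J) / 16.
    """
    jitter = 0.0
    for i in range(1, min(len(send_ms), len(recv_ms))):
        d = (recv_ms[i] - recv_ms[i - 1]) - (send_ms[i] - send_ms[i - 1])
        jitter += (abs(d) - jitter) / 16.0
    return jitter


def loss_ratio(expected: int, received: int) -> float:
    """Fraction of expected packets that never arrived."""
    if expected <= 0:
        return 0.0
    return max(0, expected - received) / expected


# Packets sent every 20 ms; the third one arrives 16 ms late.
print(interarrival_jitter([0, 20, 40], [5, 25, 61]))
print(loss_ratio(expected=1000, received=990))
```

Tracking these values per interconnect, rather than only per device, is what makes it possible to say which path is degrading a call.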

Lesson learned: With a small team, you can't afford to chase intermittent voice quality issues. You must design the network so that VoIP works predictably - and when it doesn't, you know exactly where to look.

Scaling infrastructure with a small team

When we had 200 devices, three engineers felt manageable. When we passed 400, we felt the strain. At 600 devices, we knew we couldn't operate the same way.

The infrastructure didn't grow because we planned it, but because the business grew. New services, new VoIP partners, expanded access networks. Each addition made sense in isolation. Together, they created complexity.

Here's what saved us from feeling overwhelmed.

Standardisation

We stopped treating each new server as a unique snowflake. Every new system now follows a template: same OS version, same management interface, same logging configuration. When something breaks, we don't have to learn how that particular server was built three years ago.
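A template only helps if systems stay on it. As a hedged illustration, conformance could be checked by comparing facts collected from each host against the template; the keys and values below are hypothetical placeholders, not our real build standard.

```python
# Hypothetical template values, for illustration only.
TEMPLATE = {
    "os_image": "base-2024.1",
    "mgmt_interface": "bmc-v2",
    "log_target": "syslog.example.internal",
}


def drift(host_facts: dict, template: dict = TEMPLATE) -> dict:
    """Return {setting: (expected, actual)} for every setting off-template.

    A missing setting is reported with an actual value of None.
    """
    return {
        key: (expected, host_facts.get(key))
        for key, expected in template.items()
        if host_facts.get(key) != expected
    }


host = {
    "os_image": "base-2022.3",          # built before the template changed
    "mgmt_interface": "bmc-v2",
    "log_target": "syslog.example.internal",
}
print(drift(host))
```

An empty result means the host matches the template, so a new engineer can treat it like any other server.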

Clear service boundaries

We enforced separation between corporate systems, service platforms, and VoIP infrastructure. This means that when the billing system has a problem, the VoIP platform isn't affected. Incident isolation became faster because we knew which domain to look in.

Monitoring-driven operations

We don't check on systems - they check on themselves. Our monitoring platform is the first line of defence. If it doesn't alert, we assume everything is working. This frees us to work on improvements instead of manual health checks.

The result

Six hundred devices still feel like a lot of work, but no longer an impossible amount. The infrastructure scales so the team's effort doesn't have to.

If we designed it again

If we designed the infrastructure today, with the benefit of hindsight, we would likely make several changes:

- Deeper automation of infrastructure management - particularly for OS patching and configuration drift detection

- Improved observability with more granular telemetry and longer metric retention for capacity planning

- Increased separation between operational domains to further reduce blast radius during incidents

- Earlier investment in documentation - what seems obvious today becomes obscure in two years, especially with staff turnover
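Longer metric retention matters for capacity planning because it makes trend projection possible. The sketch below fits a straight line to weekly utilisation samples and estimates how many weeks remain before a limit is reached. The linear growth model, the 80% limit, and the sample values are assumptions for illustration; real workloads rarely grow this neatly.

```python
from typing import Optional, Sequence


def weeks_until_limit(usage_pct: Sequence[float],
                      limit_pct: float = 80.0) -> Optional[float]:
    """Estimate weeks from the latest sample until usage reaches the limit.

    Uses an ordinary least-squares line through weekly samples (oldest
    first). Returns None if there is too little data or no upward trend.
    """
    n = len(usage_pct)
    if n < 2:
        return None
    xs = range(n)
    mean_x = sum(xs) / n
    mean_y = sum(usage_pct) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, usage_pct))
    slope = sxy / sxx
    if slope <= 0:
        return None  # flat or shrinking usage: no projected exhaustion
    intercept = mean_y - slope * mean_x
    weeks = (limit_pct - intercept) / slope - (n - 1)
    return max(0.0, weeks)


# Illustrative samples: storage usage growing two points per week.
print(weeks_until_limit([50.0, 52.0, 54.0, 56.0]))
```

Even a rough projection like this gives a weekly capacity review something concrete to discuss.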

These aren't criticisms of past decisions. Every choice made sense at the time. But reflecting on what we'd do differently is part of how small teams improve.

Why this matters

Infrastructure stories from large technology companies are widely shared. However, much of the global Internet is operated in environments with very different constraints.

In many regions, small engineering teams manage large and complex infrastructure environments with limited resources and continuous operational pressure.

Sharing these experiences helps highlight practical approaches that may be useful for other operators facing similar challenges.

Conclusion

Operating a data centre with 600 devices, three engineers, and the constant reality of staff turnover calls for discipline.

Every decision - from hardware selection to monitoring configuration - has to be tested against the same question: Does this make the team more efficient, or does it create more work?

I suspect our situation is not unique. Across emerging Internet regions, small teams are keeping large networks running. They don't have the luxury of dedicated automation engineers or 24/7 shift rotations. They have the same constraints we do, and they find solutions.

This article shared some of ours. Not because they're perfect, but because sharing operational experience makes the entire Internet more resilient.

If you're operating infrastructure with a small team - in an emerging region or anywhere else - your lessons matter too. The community needs them.

Editorial note: This article was written by a non-native English speaker with the help of AI tools to polish the language and improve readability. All insights and opinions presented are the author’s own.
