A step-by-step guide to using the RIPE Atlas measurement infrastructure to characterize the worldwide performance of anycast DNS services.

As part of founding a new Internet Exchange recently (Fremont Cabal Internet Exchange, Fremont, CA, USA), our team was interested in identifying possible networks to bring into the exchange. The obvious candidates are networks already in the same facility as the exchange, but anycast DNS servers are another attractive option for growing internet exchange membership since they often only need a 1U server or VM to be able to deploy into the facility, so hosting them for free as part of the exchange is viable.

Anycast DNS is where you pick one global IP address that all your users query against, and instead of tolerating the nuisance of the quite limited speed of light (lol) back to a single server, you create multiple identical copies of your server (even down to using the same IP address) and distribute them across the entire Internet. They are so identical that it really doesn't matter which server the query goes to; they all come back with the same answer anyway. This ability to have the same IP address in multiple places is made possible by dedicating a whole /24 IPv4 block and a /48 IPv6 block to the server's public-facing address (these subnet sizes are driven by those being the smallest blocks of IP addresses generally accepted in the global BGP routing table). You then have each server advertise those two IP blocks into BGP, and as networks see the same advertisement from each of the distributed servers, they use the typical BGP best path selection process to select the "closest" server (where closest is unfortunately in the sense of network topology and business policy, not necessarily linear distance or latency like you'd hope).

So this is great; anycast is used to make it seem like a single server is beating the speed of light with how fast it's able to answer queries from across the globe, and is a popular technique used by practically all of the root DNS servers, as well as many other DNS servers, be they authoritative DNS servers for specific zones or public recursive DNS resolvers like those provided by Google or CloudFlare.

So my challenge to myself has been to find any of these anycast networks who would plausibly be interested in tying into our IXP, and would benefit from an anycast node added in California.

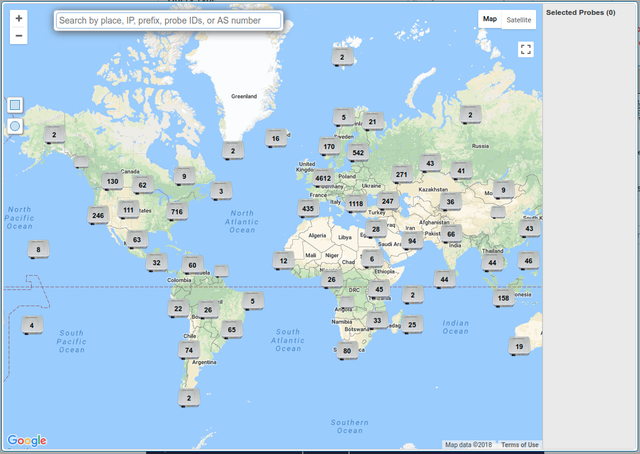

This. This is where the RIPE Atlas can come in super handy. I have the entire global network of RIPE Atlas probes at my disposal to poke at anycast servers to measure their performance and see how well their global deployment is performing.

The process consists of these major steps:

- Trawl the Internet for anycast DNS services and "Top [Number] DNS Servers You Should Use Instead of Whatever You're Using" articles listing popular DNS services (oddly, often in a very similar order to the other "Top [Number] DNS Servers" articles...) and collate all of these into a list of potential DNS networks to measure.

- Craft a DNS query which can be sent to each instance of an anycast server and its response time measured to give a good indication of how well the anycast network is performing.

- Roll this query out to 500 RIPE Atlas probes and spend some super exciting evenings analyzing the data to try and discover what each DNS network's current topology is, and if they'd seemingly benefit from a node in Fremont.

So that first step isn't too bad; that's just some Google-Fu and a willingness to really confuse ad networks with my sudden willingness to read click-bait articles.

The second step is where it starts to get interesting, because we need to somehow measure how fast the closest anycast node can answer a DNS query. There are DNS benchmarking tools which run through a few hundred of the most popular websites and try and resolve those to see how quickly the DNS resolver responds, but those measurements are a little problematic for what I'm looking for, because they don't only measure the network round-trip-time to the anycast node, but also how hot its cache is and how well connected it is to the actual authoritative DNS servers. If I was an end-user looking to pick the best DNS server, how quickly it can answer actual real DNS queries is important, but that's not really what I'm interested in. I want to know how quickly a random place on the Internet can get a query to this server, and after the DNS server has gone off and done all the work of resolving the query into an answer, how long it takes to get that answer back to the client. (In the case of authoritative DNS servers which don't provide open recursive resolution, these "top 500 websites" benchmarks are worthless, since the authoritative servers will only answer queries for zone files they're hosting themselves)

So really, I need a DNS query which the anycast servers can definitely answer themselves locally, without (maybe) having to send off queries to other DNS servers and walk the DNS hierarchy trying to find the answer. And hang up the rotary phone guys, cause most servers actually support a query that meets these criteria, and it even usually returns a TXT answer which identifies which specific instance of the anycast network you queried, which is much easier than running a traceroute from each probe to the DNS server and trying to analyze 500 traceroutes to figure out which end-node they happen to be getting routed to.

The id.server domain name (as documented in RFC4892) is a handy TXT record, which (for presumably very historical reasons) is a CHAOS class domain instead of an IN class domain (like every other DNS query you're used to) and tells you a unique name for each instance of a DNS server. By convention, global things like backbone routers and anycast DNS servers identify themselves by the nearest IATA three letter airport code, so knowing that, you can often be quite successful figuring out generally where a DNS server's id.server answer is coming from geographically.
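To make the CHAOS-class query a bit more concrete, here is a minimal sketch in Python that builds the raw DNS packet for a TXT lookup of id.server and pulls the text out of a reply. The function names (`build_id_server_query`, `parse_first_txt`, `query_id_server`) are made up for this illustration, it only speaks IPv4 over UDP, and it skips the error handling a real tool would need.

```python
import socket
import struct

QTYPE_TXT = 16    # TXT record type
QCLASS_CHAOS = 3  # CH (CHAOS) class, instead of the usual IN = 1


def build_id_server_query(query_id: int = 0x1234, name: str = "id.server") -> bytes:
    """Build a DNS query packet asking for <name> as CHAOS/TXT."""
    # Header: ID, flags (0 = standard query, no recursion), 1 question, 0 other records
    header = struct.pack("!HHHHHH", query_id, 0x0000, 1, 0, 0, 0)
    # QNAME: each label prefixed by its length, terminated by a zero byte
    qname = b"".join(
        bytes([len(label)]) + label.encode("ascii") for label in name.split(".")
    ) + b"\x00"
    return header + qname + struct.pack("!HH", QTYPE_TXT, QCLASS_CHAOS)


def _skip_name(msg: bytes, offset: int) -> int:
    """Return the offset just past a (possibly compressed) domain name."""
    while True:
        length = msg[offset]
        if length & 0xC0 == 0xC0:  # compression pointer is two bytes long
            return offset + 2
        if length == 0:            # root label ends the name
            return offset + 1
        offset += 1 + length


def parse_first_txt(response: bytes) -> str:
    """Extract the text of the first answer record, assuming it is a TXT record."""
    qdcount, ancount = struct.unpack("!HH", response[4:8])
    if ancount == 0:
        raise ValueError("no answer records in response")
    offset = 12
    for _ in range(qdcount):
        offset = _skip_name(response, offset) + 4  # skip QTYPE and QCLASS
    offset = _skip_name(response, offset)
    _rtype, _rclass, _ttl, rdlength = struct.unpack("!HHIH", response[offset:offset + 10])
    rdata = response[offset + 10:offset + 10 + rdlength]
    # TXT RDATA is one or more length-prefixed character strings
    parts, i = [], 0
    while i < len(rdata):
        n = rdata[i]
        parts.append(rdata[i + 1:i + 1 + n])
        i += 1 + n
    return b"".join(parts).decode("ascii", errors="replace")


def query_id_server(server: str, timeout: float = 3.0) -> str:
    """Send the query to an IPv4 DNS server over UDP and return the TXT answer."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.settimeout(timeout)
        sock.sendto(build_id_server_query(), (server, 53))
        response, _ = sock.recvfrom(4096)
    return parse_first_txt(response)
```

Calling `query_id_server()` with the address of a server that implements id.server should return that instance's name; servers that don't implement it will typically answer with an error or no answer records, which this sketch surfaces as a `ValueError`.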

A Specific Example

So as an example, let us study one of the anycast DNS resolvers out there; namely, UncensoredDNS, an open resolver based out of Denmark. They happen to have both a unicast node hosted in Denmark, as well as an anycast IP address (91.239.100.100, 2001:67c:28a4::) hosted from multiple locations.

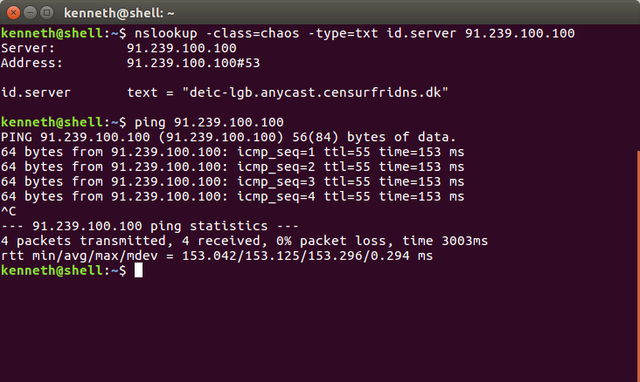

The first thing to do is confirm that this DNS admin has happened to implement the id.server query, so we can use a local tool like nslookup to query CHAOS: id.server to see if it even works.

Running: nslookup -class=chaos -type=txt id.server 91.239.100.100

Gives us an answer of: deic-lgb.anycast.censurfridns.dk

Excellent! So this tells us two things:

- They answer to id.server, so it's possible for us to run that query using the RIPE Atlas network to get a good perspective on where they are.

- They seemingly put something useful in their id.server string, namely perhaps a hosting network name and a city designator (although LGB is the IATA code for Long Beach, California, and based on the 153ms ping time from Fremont, California, I doubt it's really in the same state as me). So maybe not entirely IATA city designators, but something unique at least...
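Picking an id.server answer apart by hand gets tedious once you have a few hundred of them, so here is a small sketch of how it could be automated. It assumes the "network-site" naming pattern seen in this one answer, which other operators are under no obligation to follow, and the tiny `IATA_HINTS` table and both function names are purely illustrative. As the LGB answer shows, a matching airport code is only a hint, not proof of location.

```python
# Tiny illustrative lookup table; a real one would cover far more airport codes.
IATA_HINTS = {
    "iad": "Washington Dulles, Virginia, USA",
    "lgb": "Long Beach, California, USA",
}


def split_instance_name(id_server: str):
    """Split 'deic-lgb.anycast.example' into ('deic', 'lgb').

    Assumes the first DNS label looks like '<network>-<site>'; if there is
    no dash, the network part comes back empty.
    """
    first_label = id_server.split(".", 1)[0]
    network, _, site = first_label.rpartition("-")
    return network, site


def guess_location(id_server: str):
    """Return a location hint if the site token happens to be a known airport code."""
    _, site = split_instance_name(id_server)
    return IATA_HINTS.get(site.lower())


print(split_instance_name("deic-lgb.anycast.censurfridns.dk"))
print(guess_location("deic-lgb.anycast.censurfridns.dk"))
```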

So now we need to create a custom RIPE Atlas measurement to run this query from everywhere and see what comes back and how quickly.

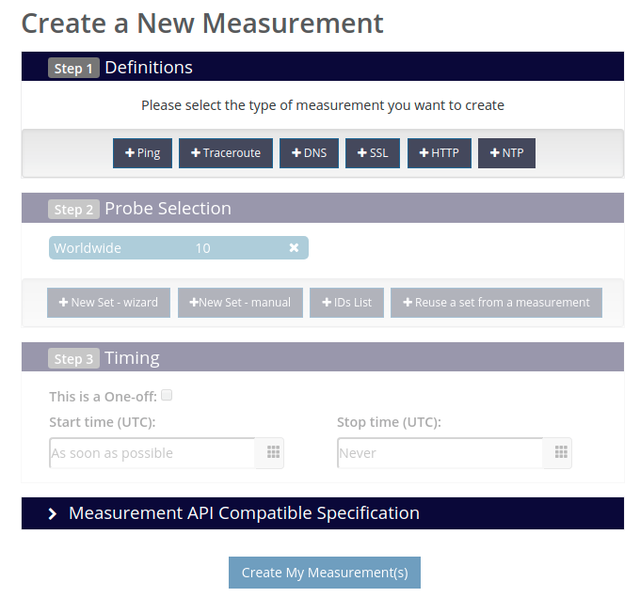

From the "My Atlas" dashboard, I select "Create a New Measurement". The measurement creation wizard has three parts:

- Measurement definition: This is where we specify to query CHAOS: id.server against the DNS server's IP address.

- Probe selection: This is where you decide how many probes you're willing to spend credits on to take this measurement. Since these measurements are relatively cheap, I max this out at the allowed 500 probes.

- Timing: Since I'm not trying to monitor these networks but just characterize them, I just make absolutely sure that I check the This is a one-off box so the measurement doesn't get re-run on a schedule.

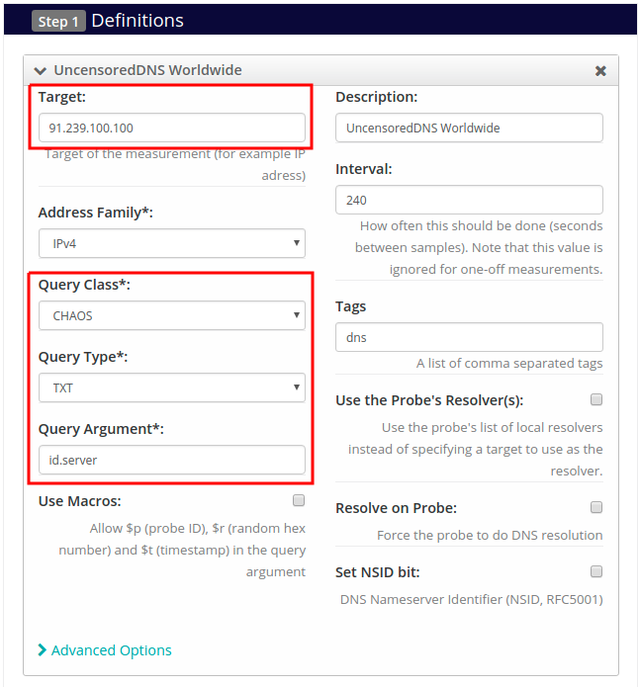

For the test definition, add a DNS measurement for each DNS server address you're interested in querying (I kind of wish there was a "clone this other measurement definition" button, but regardless you can create multiple measurements to run against the same probe set). When filling out the test definition, the important fields are to set the target to the DNS server of interest, change the query class to CHAOS, the query type to TXT, and the query argument to "id.server". I also give the test a meaningful description so I stand a chance of finding it later, and tagged it "dns" so my DNS measurements are all generally grouped together.

If you're scheduling a long-running measurement, the interval field would be important, but since I'll be checking the one-off measurement box in the timing options, this interval field will eventually disappear.

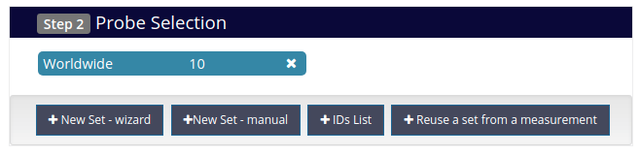

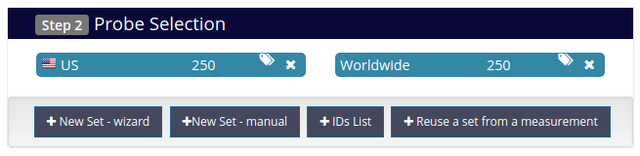

By default, measurements are run on ten probes randomly selected from anywhere in the world, but we want a larger test group than that, so press the X on the Worldwide 10 selection and + New Set - wizard to open the map to add more probes.

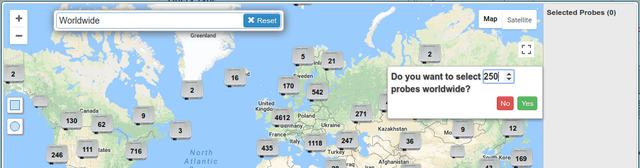

Ideally, you would be able to just type in Worldwide, select it, say you want 500 probes, and be done with it, but the problem is since the RIPE NCC is the European RIR, the concentration of probes in Europe is unusually high, so an unspecified "worldwide" selection tends to have the majority of its probes in Europe and a thin selection everywhere else. Since I'm particularly interested in the behavior across the continental US, but also curious what each anycast server's behavior looks like worldwide, I've settled on a bit of a compromise of selecting 250 probes from worldwide and 250 probes from United States. This ensures a good density in the US where I need it, but still gives me a global perspective in the same measurement.

Type in worldwide, click on it in the auto-completion drop-down, and it should pop up a window on the right asking you how many probes you'd like to select. I choose 250, and press yes.

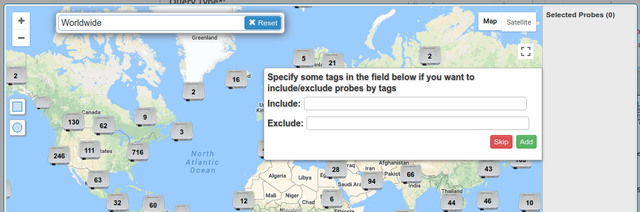

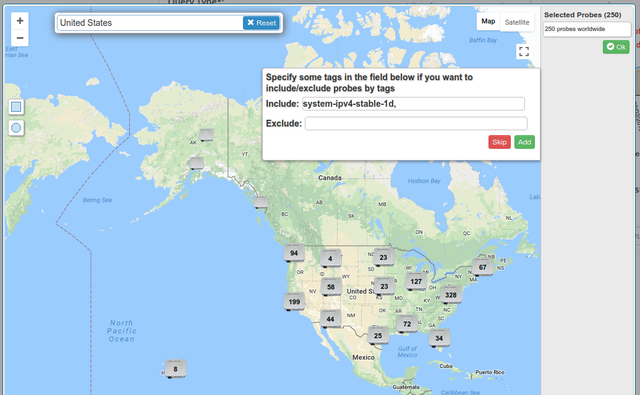

It will then ask you which tags you'd like to include or exclude when selecting probes. The system automatically tags RIPE Atlas probes with various categories like what hardware version they are, or how stable their IP addresses are, or if they're able to resolve AAAA records correctly, etc. I'm unsure if RIPE Atlas is smart enough to do this already, but particularly when measuring an IPv6 DNS server, I make sure to specify that probes tagged IPv6 Works or IPv6 Stable 1d should be used. For an IPv4 measurement, filtering on tags is probably not critical, but I usually select IPv4 Stable 1d just for good measure.

Click add and the 250 worldwide probes get added to the sidebar, and repeat the same process for the United States to get up to the maximum 500 probes. Once that's done, press OK and it returns to the measurement form.

The probe selection box should then be filled in with the 250 + 250 selection. In the Timing section, simply check the This is a one-off box, and the measurement(s) are ready to launch.
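If you have a long list of DNS servers to work through, the same form can in principle be filled in through the RIPE Atlas REST API rather than by clicking. The sketch below only builds the JSON body that would be POSTed to the measurement creation endpoint along with an API key. The field names and the `system-...` probe tag slugs are written from my understanding of the v2 API and should be treated as assumptions to verify against the API reference; `build_measurement_request` is just a name invented for this example.

```python
import json


def build_measurement_request(target: str, af: int = 4, description: str = "") -> dict:
    """Build a one-off CHAOS TXT id.server measurement using 250 + 250 probes.

    Field names are assumed from the RIPE Atlas v2 API; check them before use.
    """
    # Assumed slugs for the "IPv4 Stable 1d" / "IPv6 Stable 1d" system tags
    stable_tag = "system-ipv4-stable-1d" if af == 4 else "system-ipv6-stable-1d"

    definition = {
        "type": "dns",
        "af": af,                      # 4 or 6, matching the target address family
        "target": target,              # the anycast DNS server to query
        "use_probe_resolver": False,   # query the target, not the probe's own resolver
        "query_class": "CHAOS",
        "query_type": "TXT",
        "query_argument": "id.server",
        "description": description or f"CHAOS TXT id.server against {target}",
        "tags": ["dns"],
    }
    probes = [
        # 250 probes from anywhere in the world...
        {"type": "area", "value": "WW", "requested": 250,
         "tags": {"include": [stable_tag]}},
        # ...plus 250 from the United States for extra density there
        {"type": "country", "value": "US", "requested": 250,
         "tags": {"include": [stable_tag]}},
    ]
    return {"definitions": [definition], "probes": probes, "is_oneoff": True}


print(json.dumps(build_measurement_request("91.239.100.100"), indent=2))
```

Keeping the payload construction separate from the HTTP call makes it easy to eyeball the request for each server before spending any credits on it.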

Press the big grey Create My Measurement(s) button!

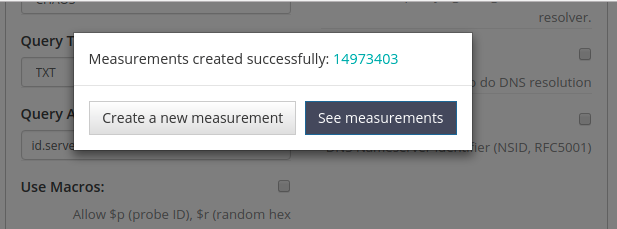

At this point, if all goes well, it should pop up with a window giving you a hyperlink to your measurement results. The results won't be immediately available, but for one-off DNS measurements it should only take a minute or two before most of the results start populating on the results page. Link to the results of this example measurement.

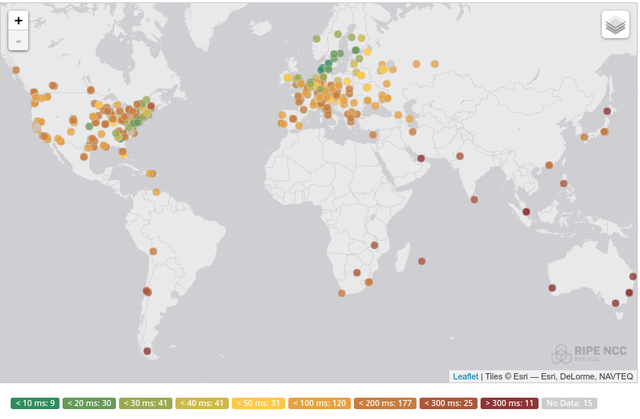

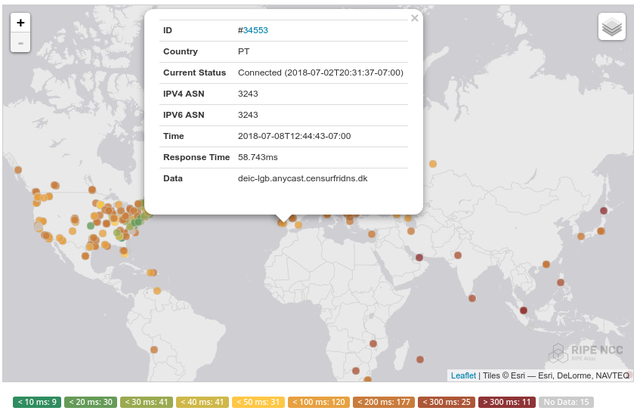

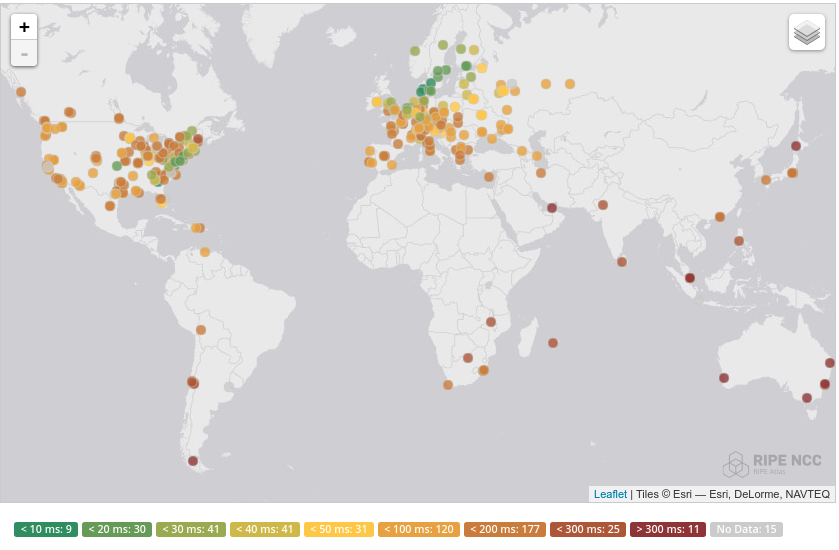

For the measurements I'm doing, the most useful results page is the map, where it color codes the results by latency, and you can then click on any single dot to see which probe that is and what result it got for the TXT query we requested.

Looking at the map, you can see some green hotspots in Europe, so their coverage in Europe seems to be pretty good, and there's a green gradient across the US east to west, so there's seemingly a node on the east coast. Clicking on any of the green east coast probes tells us that their TXT result was rgnet-iad.anycast.censurfridns.dk, where IAD is the IATA code for Washington Dulles, just outside Washington DC, so chances are the east coast node is there.

Browsing around on the west coast, there are zero green measurements, and most of them are in the ~150ms range coming from servers located in Europe, so this result actually points to this being a reasonably good candidate anycast network to try and bring into our west coast exchange...
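Clicking dots on a map works for one network, but the same reading can be roughed out in code from the downloaded results. The sketch below groups results by the instance name each probe saw and reports how many probes hit each instance and their median response time. It assumes each result entry looks roughly like the raw RIPE Atlas DNS result format as I understand it (a `result` object holding `rt` in milliseconds and a decoded `answers` list with `RDATA`), so check the field names against a real download. The sample entries and their response times are invented purely to exercise the function.

```python
from collections import defaultdict
from statistics import median


def summarize_by_instance(results):
    """Map each id.server answer to (probe count, median response time in ms)."""
    by_instance = defaultdict(list)
    for entry in results:
        result = entry.get("result")
        if not result or not result.get("answers"):
            continue  # probe timed out, errored, or got no TXT answer
        rdata = result["answers"][0]["RDATA"]
        # RDATA may be a list of strings or a single string depending on the source
        instance = "".join(rdata) if isinstance(rdata, list) else rdata
        by_instance[instance].append(result["rt"])
    return {name: (len(rts), median(rts)) for name, rts in by_instance.items()}


# Invented sample data, NOT real measurement results
sample = [
    {"prb_id": 1, "result": {"rt": 12.0, "answers": [{"TYPE": "TXT", "RDATA": ["rgnet-iad.anycast.censurfridns.dk"]}]}},
    {"prb_id": 2, "result": {"rt": 30.0, "answers": [{"TYPE": "TXT", "RDATA": ["rgnet-iad.anycast.censurfridns.dk"]}]}},
    {"prb_id": 3, "result": {"rt": 150.0, "answers": [{"TYPE": "TXT", "RDATA": ["deic-lgb.anycast.censurfridns.dk"]}]}},
    {"prb_id": 4, "error": {"timeout": 5000}},
]

for name, (count, med) in sorted(summarize_by_instance(sample).items()):
    print(f"{name}: {count} probes, median {med:.1f} ms")
```

A region where every probe lands on a far-away instance with a high median is exactly the kind of gap the map shows for the west coast here.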

Comments 1

Bjørn Mork •

It might be worth noting that the example service supports NSID too, and so does Atlas. You can check the "set nsid bit" and get the server name on ANY query:

    bjorn@canardo:~$ bjorn@miraculix:~$ dig +nsid ripe.net @91.239.100.100
    ; <<>> DiG 9.11.4-P2-3-Debian <<>> +nsid ripe.net @91.239.100.100
    ;; global options: +cmd
    ;; Got answer:
    ;; ->>HEADER<<- opcode: QUERY, status: NOERROR, id: 51363
    ;; flags: qr rd ra ad; QUERY: 1, ANSWER: 1, AUTHORITY: 7, ADDITIONAL: 12
    ;; OPT PSEUDOSECTION:
    ; EDNS: version: 0, flags:; udp: 4096
    ; NSID: 64 65 69 63 2d 6f 72 65 2e 61 6e 79 63 61 73 74 2e 63 65 6e 73 75 72 66 72 69 64 6e 73 2e 64 6b ("deic-ore.anycast.censurfridns.dk")
    ; COOKIE: a87fa541e222a27ea3cc09685bb231f3be543eae0fd34206 (good)
    ;; QUESTION SECTION:
    ;ripe.net. IN A
    ;; ANSWER SECTION:
    ripe.net. 21600 IN A 193.0.6.139
    ;; AUTHORITY SECTION:
    ripe.net. 619 IN NS a2.verisigndns.com.
    ripe.net. 619 IN NS a3.verisigndns.com.
    ripe.net. 619 IN NS ns4.apnic.nET.
    ripe.net. 619 IN NS manus.authdns.rIPe.nET.
    ripe.net. 619 IN NS sns-pb.isc.org.
    ripe.net. 619 IN NS a1.verisigndns.com.
    ripe.net. 619 IN NS tinnie.arin.nET.
    ;; ADDITIONAL SECTION:
    a1.verisigndns.com. 2850 IN A 209.112.113.33
    a2.verisigndns.com. 1752 IN A 209.112.114.33
    a3.verisigndns.com. 2952 IN A 69.36.145.33
    manus.authdns.ripe.net. 722 IN A 193.0.9.7
    sns-pb.isc.org. 2754 IN A 192.5.4.1
    tinnie.arin.net. 14668 IN A 199.212.0.53
    a1.verisigndns.com. 2850 IN AAAA 2001:500:7967::2:33
    a2.verisigndns.com. 1752 IN AAAA 2620:74:19::33
    a3.verisigndns.com. 2952 IN AAAA 2001:502:cbe4::33
    manus.authdns.ripe.net. 1370 IN AAAA 2001:67c:e0::7
    tinnie.arin.net. 14668 IN AAAA 2001:500:13::c7d4:35
    ;; Query time: 172 msec
    ;; SERVER: 91.239.100.100#53(91.239.100.100)
    ;; WHEN: Mon Oct 01 16:40:42 CEST 2018
    ;; MSG SIZE rcvd: 559
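The NSID value in the dig output quoted in this comment is shown as raw hex bytes next to dig's own decoding. If a tool only hands you the hex, turning it back into the server name is a one-liner; the helper name `decode_nsid` below is made up for this sketch, and it assumes the operator put plain ASCII in the option, which NSID does not require.

```python
def decode_nsid(hex_bytes: str) -> str:
    """Decode space-separated NSID hex bytes into text, assuming ASCII content."""
    return bytes.fromhex(hex_bytes).decode("ascii", errors="replace")


nsid_hex = (
    "64 65 69 63 2d 6f 72 65 2e 61 6e 79 63 61 73 74 2e "
    "63 65 6e 73 75 72 66 72 69 64 6e 73 2e 64 6b"
)
print(decode_nsid(nsid_hex))  # deic-ore.anycast.censurfridns.dk
```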