Impact

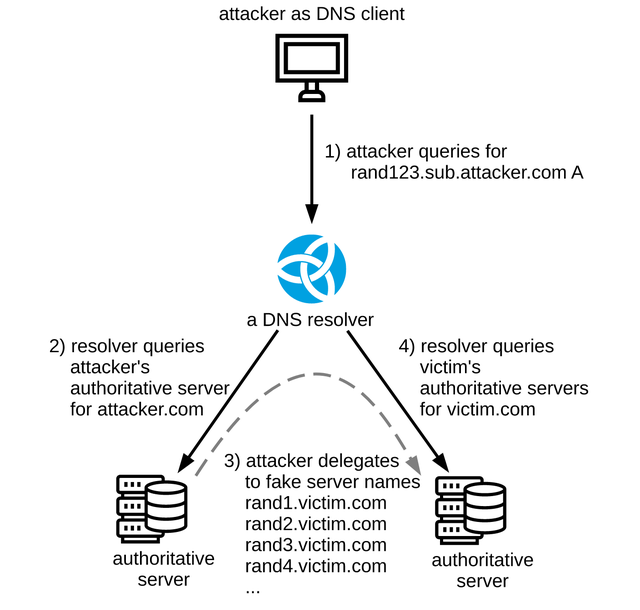

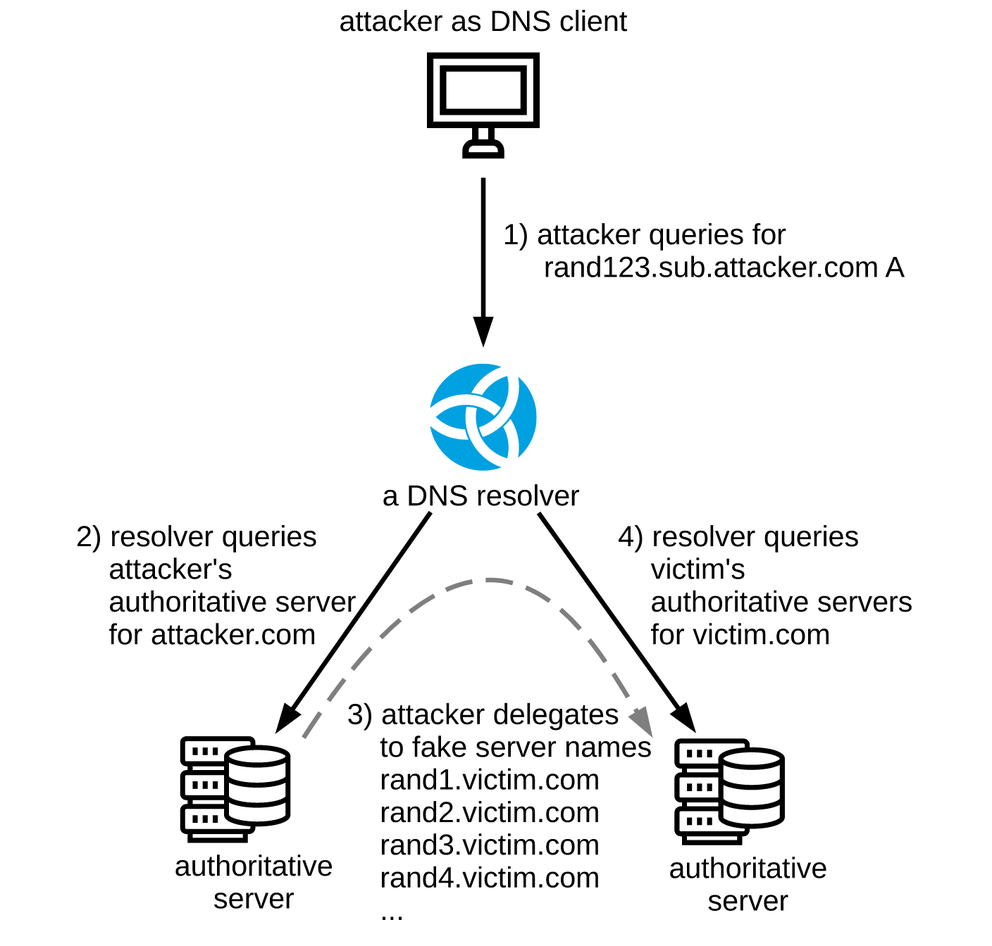

The main discovery in the NXNSAttack paper is that an attacker can turn just two packets (a single DNS query sent to a DNS resolver, plus a single DNS answer containing fake delegations) into many random queries fired at a victim's authoritative servers, effectively using a standards-compliant DNS resolver as an amplifier for random subdomain attacks. In practice, the packet amplification factor (PAF) depends heavily on the strategy employed by the DNS resolver implementation used in the attack. For example:

- The BIND 9.12.3 resolver resolves IPv4 and IPv6 addresses for all NS names obtained from a delegation in parallel, leading to a packet amplification factor of 1000x.

- The Knot Resolver 5.1.0 resolves NS names one at a time and places other limits on the number of resolution steps generated by a single client query, limiting the PAF to the order of tens. In fact, half of its packet amplification factor of 48x is caused by workarounds for non-compliant authoritative servers. Without the workarounds for RFC 8020 and RFC 7816 non-compliance, the PAF of the Knot Resolver would be only 24x (yet another example that workarounds are bad, but that’s another story).

None of these strategies is inherently wrong; they simply represent different trade-offs between the resources invested into a single client query and the ability to process multiple client queries in parallel.
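To make the trade-off concrete, here is a deliberately simplified toy model of the amplification arithmetic. It is not the methodology of the NXNSAttack paper and does not try to reproduce the measured figures quoted above: it assumes that the attacker spends two packets, that the resolver sends one A and one AAAA query toward the victim for every NS name it chooses to resolve, and it ignores responses, retries and any implementation-specific workarounds. The function name and parameters are made up for illustration.

```python
from typing import Optional


def packet_amplification_factor(
    ns_names: int,
    names_resolved: Optional[int] = None,
    queries_per_name: int = 2,   # assumption: one A + one AAAA query per NS name
    attacker_packets: int = 2,   # one query + one answer with fake delegations
) -> float:
    """Toy PAF: queries sent toward the victim per packet spent by the attacker."""
    if names_resolved is None:
        # "Resolve everything" strategy: every NS name in the referral is chased.
        names_resolved = ns_names
    resolved = min(ns_names, names_resolved)
    return resolved * queries_per_name / attacker_packets


# A referral with 100 fake NS names, all of them resolved:
print(packet_amplification_factor(ns_names=100))                     # 100.0
# The same referral when the resolver chases only 5 of the names:
print(packet_amplification_factor(ns_names=100, names_resolved=5))   # 5.0
```

In this model the amplification factor is set entirely by how many NS names a resolver is willing to chase for a single client query, which is the knob the two strategies above turn in opposite directions.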

In the end, spare capacity on the resolver and on the authoritative servers determines which party will be the “victim” of NXNSAttack, because one of them gets overloaded first. As long as the capacity is sufficient, servers will continue to operate just fine, possibly leaving one of the parties virtually unaffected and, in the absence of appropriate monitoring, oblivious to the attack.

Unfortunately, NXNSAttack abuses a basic principle of the DNS protocol, which in practice means there is no fix, only mitigation. Luckily, the researchers followed the responsible disclosure process and allowed vendors to implement and release mitigations before making the attack public.

NXNSAttack is a special case of the well-known random subdomain attack, so mitigation approaches fall into two categories: those specific to NXNSAttack and those generic to random subdomain attacks.

NXNSAttack mitigation

Unlike traditional random subdomain attacks, in the case of NXNSAttack the queries are generated by the resolver itself. This difference allows vendors to implement simple mitigation techniques, such as limiting the number of names resolved when processing a single delegation.

An obvious advantage of this approach is its simplicity, at least in theory.

A disadvantage of mitigation based on counters is that it requires vendors to invent arbitrary limits that are not grounded in the DNS protocol specification; in effect, these limits determine the maximum packet amplification factor. At the same time, these arbitrary limits might break resolution for some domains because they put additional constraints on the resolution process.
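A counter-based limit of this kind could look roughly like the sketch below. It is not taken from any vendor's code: the class, the function and the default limit of 5 are hypothetical, chosen only to show where the "magic number" lives and how it caps the number of NS names chased for one delegation.

```python
import random
from dataclasses import dataclass
from typing import Iterable, List


@dataclass
class DelegationBudget:
    """Per-delegation counter; the default limit is an arbitrary illustrative value."""
    max_ns_lookups: int = 5
    used: int = 0

    def try_spend(self) -> bool:
        """Consume one lookup if any budget is left; report whether it was granted."""
        if self.used >= self.max_ns_lookups:
            return False
        self.used += 1
        return True


def select_ns_to_resolve(glueless_ns: Iterable[str],
                         budget: DelegationBudget,
                         rng=random) -> List[str]:
    """Pick at most `budget.max_ns_lookups` NS names to resolve, in random order."""
    candidates = list(glueless_ns)
    rng.shuffle(candidates)  # avoid always favouring the names listed first
    chosen = []
    for name in candidates:
        if not budget.try_spend():
            break  # budget exhausted: remaining NS names are simply not chased
        chosen.append(name)
    return chosen


# A malicious referral listing 135 fake name servers under the victim's zone:
fake_referral = [f"ns{i}.victim.example." for i in range(135)]
print(len(select_ns_to_resolve(fake_referral, DelegationBudget())))  # 5
```

The same cap applies to legitimate delegations: if the only working name servers of a badly configured domain are among the names that were skipped, resolution fails, which is the breakage risk described above.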

This is a very practical problem because recently published research estimates that 4% of second-level domains (e.g. example.com.) have a problem in their delegation from the top level (e.g. com.), so any change which adds arbitrary limits to retries during the resolution process has to be weighed very carefully.

In the upcoming days we will see how successful vendors were in determining their magic numbers and whether they get away without breaking any major domains.

Generic Random Subdomain Attack mitigation

Any random subdomain attack, NXNSAttack included, generates random query names to bypass DNS caches. It follows that generic mitigation has to prevent attackers from bypassing the cache – and luckily we already have technology to do that!

Aggressive Use of DNSSEC-Validated Cache (RFC 8198) uses DNSSEC “metadata” in the form of NSEC(3) and RRSIG records to generate negative answers without the need to contact authoritative servers. How does this work? First, let’s have a look at some example NSEC records:

    $ kdig +dnssec @l.root-servers.net example.
    ;; ->>HEADER<<- opcode: QUERY; status: NXDOMAIN; id: 55933
    ;; Flags: qr aa rd; QUERY: 1; ANSWER: 0; AUTHORITY: 6; ADDITIONAL: 1

    ;; EDNS PSEUDOSECTION:
    ;; Version: 0; flags: do; UDP size: 4096 B; ext-rcode: NOERROR

    ;; QUESTION SECTION:
    ;; example. IN A

    ;; AUTHORITY SECTION:
    events. 86400 IN NSEC exchange. NS DS RRSIG NSEC
    events. 86400 IN RRSIG NSEC 8 1 86400 20200531170000 20200518160000 48903 . bWcSkQHURJGO...
    . 86400 IN NSEC aaa. NS SOA RRSIG NSEC DNSKEY
    . 86400 IN RRSIG NSEC 8 0 86400 20200531170000 20200518160000 48903 . Ru23msHh23...
    . 86400 IN SOA a.root-servers.net. nstld.verisign-grs.com. 2020051801 1800 900 604800 86400
    . 86400 IN RRSIG SOA 8 0 86400 20200531170000 20200518160000 48903 . pIolh2KxjZbgtwuePLA4...

We sent the DNS query example. A to one of the root DNS servers, and it answered with an NXDOMAIN answer, indicating that the name does not exist. At the same time, we received two proofs of nonexistence in the form of NSEC records (and their DNSSEC signatures in RRSIG records).

The first NSEC record events. 86400 IN NSEC exchange. NS DS RRSIG NSEC means that the root zone contains the domain events. with record types NS DS RRSIG NSEC and, more importantly, that there are no domains between the names events. and exchange.

The second NSEC record . 86400 IN NSEC aaa. NS SOA RRSIG NSEC DNSKEY means that the root zone contains the DNS root . (surprise!) with record types NS SOA RRSIG NSEC DNSKEY, and also that there are no domains between the names . and aaa.. This proves there is no wildcard record *. and thus NXDOMAIN really is the correct answer to the query example. A.

Each of the records has a time-to-live (TTL) of 86,400 seconds. This allows resolvers to synthesise NXDOMAIN answers for any queries falling into the indicated ranges (. – aaa., events. – exchange.) for one full day, effectively cutting traffic towards authoritative servers.
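The range check a resolver performs against cached NSEC records can be sketched as follows. This is a minimal illustration, not code from any resolver: it assumes plain ASCII names without escaped dots, compares names in DNSSEC canonical order (labels compared right to left as lowercase byte strings, as defined in RFC 4034), and leaves out signature validation, TTL expiry and NSEC3 entirely. The function names and the CACHED_NSEC list are made up for this example.

```python
from typing import List, Tuple


def canonical_key(name: str) -> List[bytes]:
    """Sort key for DNSSEC canonical ordering: lowercase labels, rightmost first."""
    labels = [label for label in name.lower().rstrip(".").split(".") if label]
    return [label.encode("ascii") for label in reversed(labels)]


def nsec_covers(owner: str, next_name: str, qname: str) -> bool:
    """True if qname falls strictly between the NSEC owner and its next name."""
    o, n, q = canonical_key(owner), canonical_key(next_name), canonical_key(qname)
    if o < n:
        return o < q < n
    # The last NSEC in a zone points back to the apex, so the range wraps around.
    # (This sketch does not check that qname is actually inside the zone.)
    return q > o


# (owner, next name) pairs taken from the two NSEC records in the answer above.
CACHED_NSEC: List[Tuple[str, str]] = [("events.", "exchange."), (".", "aaa.")]


def proven_nonexistent(qname: str) -> bool:
    """True if some cached NSEC range proves that qname does not exist."""
    return any(nsec_covers(owner, nxt, qname) for owner, nxt in CACHED_NSEC)


print(proven_nonexistent("example."))  # True: between events. and exchange.
print(proven_nonexistent("*."))        # True: between . and aaa., so no wildcard
print(proven_nonexistent("events."))   # False: the owner name itself exists
print(proven_nonexistent("com."))      # False: not covered by these two records
```

Any other query name that sorts between events. and exchange. gets the same result without a single packet being sent to the root servers, for as long as the cached records remain valid.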

As a consequence, querying a DNS zone which contains N names with random query names will fully populate the resolver’s cache after roughly O(N) answers. In other words, the cost of eliminating random subdomain attacks between DNSSEC-validating resolvers and authoritative servers for the duration of the TTL is linear in the number of names in the target DNS zone. It works surprisingly well even for large zones with one million domains in them; pretty charts about this setup can be found in my older presentation (from 2018).
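The linear bound can be illustrated with a small simulation. This is a sketch under simplifying assumptions, not a model of any real resolver: zone names are random single labels, every query happens within one TTL, each upstream answer is assumed to carry exactly one NSEC record covering the gap the query fell into, and wildcard proofs are ignored. The `simulate` function and its parameters are invented for this example.

```python
import bisect
import random
import string
from typing import Tuple


def simulate(zone_size: int, queries: int, seed: int = 0) -> Tuple[int, int]:
    """Return (names in zone, queries that had to go to the authoritative server)."""
    rng = random.Random(seed)

    def random_label() -> str:
        return "".join(rng.choices(string.ascii_lowercase, k=8))

    # Sorted list of existing names; gap i lies between zone[i-1] and zone[i].
    zone = sorted({random_label() for _ in range(zone_size)})
    cached_gaps = set()  # gaps for which the resolver already holds an NSEC record
    upstream = 0

    for _ in range(queries):
        qname = random_label()
        gap = bisect.bisect_left(zone, qname)
        if gap < len(zone) and zone[gap] == qname:
            continue  # name exists; positive answers are outside this sketch
        if gap not in cached_gaps:
            upstream += 1         # cache miss: ask the authoritative server once
            cached_gaps.add(gap)  # the NSEC in the answer now covers the whole gap
        # otherwise NXDOMAIN is synthesised locally from the cached range

    return len(zone), upstream


names, upstream_queries = simulate(zone_size=1000, queries=50_000)
# A zone with N names has at most N + 1 gaps, so upstream traffic is capped there.
print(names, upstream_queries, upstream_queries <= names + 1)
```

However many random names the attacker generates, the number of queries reaching the authoritative server in this model never exceeds the number of gaps between existing names, which is the O(N) cost described above.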

What next?

First of all, upgrade your DNS resolvers to get at least some NXNSAttack mitigation.

Once the dust settles, please consider deploying DNSSEC on authoritative servers, and also on DNS resolvers.

Aggressive Use of DNSSEC-Validated Cache limits the impact of random subdomain attacks. It is already implemented in the Knot Resolver. Unbound also has partial support (NSEC only) and BIND has a prototype as well. If your DNS resolver vendor does not offer it at the moment, ask for the feature and stop random subdomain attacks once and for all!

If you are not used to speaking to your DNS software vendor, please fill in this cross-vendor survey.

This article has been adapted from the original post which appeared on the CZ.NIC Blog.

Comments 4

Geoff Huston •

This is not a "newly discovered vulnerability." Florian Maury of ANSSI identified a potential query amplification issue with glueless delegations back in 2015 and presented it to the DNS OARC 21 meeting in May of that year. His presentation can be found at https://indico.dns-oarc.net/event/21/contributions/301/attachments/272/492/slides.pdf. The presentation notes that patches for the BIND, Unbound and PowerDNS recursive resolvers were released back in December 2014, while the generic advice for resolver implementations to limit the amount of work performed to respond to a query was contained in RFC 1034, published in 1987. So credit where credit is due -- we should acknowledge Florian Maury for his work on this over five years ago, and also acknowledge Paul Mockapetris for alerting DNS resolver implementers to the possibility of encountering unbounded work flows back in 1987 when he wrote RFC 1034!

Florian Maury •

Hi, Thank you, Geoff, for your kind words and for acknowledging my work and Paul's. This is very much appreciated! In truth, I first thought this was basically the same attack that I published and I have to admit I was somewhat pissed about it. But the authors of the NXNSAttack mention mine in their paper and explain how they differ, and I believe their analysis is fair. At any rate, even if it was the same attack, rediscovering an attack that is still applicable is still a valid finding. I did something quite similar with the attack leveraging RRL to make Kaminsky's attack still somewhat practical again, in 2013, and I don't think I was stealing Dan's work either. Thus, I would like to thank the authors of the NXNSAttack for their original research, and for citing my work too.

petr_spacek •

There are certainly similarities, and the authors have acknowledged previous work by Florian Maury in the NXNSAttack paper. Allow me to quote the NXNSAttack paper https://cyber-security-group.cs.tau.ac.il/dns-ns-paper.pdf here:

"Maury [18] presents a different attack that also exploits the delegations of name-servers in a referral response. However, the attack (called iDNS attack) PAF is at most 10x. In iDNS the attacker’s name-server sends self-delegations (back and forth to the attacker’s name-server) up to an infinite depth. A major difference from our work is that the glueless name-servers in the iDNS attack are never used against an external server such as a victim name-server. Some measures have been taken by different DNS vendors such as BIND and UNBOUND following the disclosure of iDNS described in [18], however these measures do not affect and do not weaken the NXNSAttack."

Unbounded work in any implementation is surely a bad idea and Paul Mockapetris was surely right; there are no doubts about this. Having said that, I do not agree that NXNSAttack can be dismissed as nothing new. Researchers found an exploitable flaw in several DNS resolver implementations, and several vendors released software with mitigation for NXNSAttack, so it is not just a theoretical problem, and surely not the same as in 2015, because the mitigations introduced back then (see CVE-2014-8500, CVE-2014-8601, CVE-2014-8602) did not save us in 2020.

On a more generic note, attempting to categorize all "unbounded work problems" as "the same flaw" is equivalent to declaring all these flaws equivalent to the halting problem from computability theory. That is technically correct but really not helpful for anyone except computability theory researchers. This view is reinforced by the fact that the MITRE CVE classification has special categories for variants of this problem (CWE-405, CWE-406, CWE-1050 are the first three I found right now). That very strongly suggests that the security community cares enough to distinguish individual "insufficiently bounded work" problems, no matter what protocol or software they affect.

To conclude: no matter whether you consider this a novel attack or not, please upgrade if your software is affected.

Stéphane Bortzmeyer •

Regarding Geoff Huston's comment, and after discussion with Florian Maury, and with his authorization, I translate here his analysis: "The attack [NXNSattack] is quite different, and it has a significant impact. It was not detected at the time of iDNS. Moreover, the article [about NXNSattack] is well written, mentions the related work and explains the differences. To summarize, this is a new and serious contribution."