Usability testing helps us understand how RIPE NCC members interact with our online platforms and identify possible improvements. In this article, we take you through our usability testing process.

What people say, what people do, and what they say they do are entirely different things. - Margaret Mead

This quote forms the basis of our work in usability research. How can we be sure that our users will use a service or feature properly? How can we know which features are needed and which are not? Without testing, we can't. This is why we conduct interviews, surveys, and usability testing on our services.

During one of our usability testing sessions, we found that users were misunderstanding the Addressee field in our application forms. Instead of entering a contact person's name, participants were entering the same address again, or a secondary address, in this field. This illustrates the kind of insight that usability testing can provide.

How we collect user feedback

We use three feedback methods: surveys, interviews and usability testing. These are complementary and help us to build a clearer picture of our users’ needs and expectations.

Usability survey

Surveys are useful when we want quick feedback on a specific service. For example, we recently surveyed RIPEstat users to better understand who uses RIPEstat, what their favourite features are, and which features they would find useful in the future.

User interviews

User interviews give us the most in-depth qualitative data, as we get first-hand feedback from our members on our services. We usually interview people at RIPE Meetings between sessions or during Member Lunches.

Usability testing

Unlike the other methods, usability testing combines qualitative and quantitative analysis. We recruit volunteers and ask them to perform specific tasks while we record the session. This allows us to directly analyse how a user interacts with a feature or service and to measure their perceptions.

Question what matters

Every year, we review our usability roadmap to determine which services need to be tested. As part of this process, we invite colleagues from different departments to discuss which tasks to test and to write them down, categorised by discoverability, findability, usability, and usefulness. When this is done, we shortlist and finalise the tasks.

Choosing a testing location

To choose the testing location, we team up with our Learning and Development team. They help us find the right location, as we often combine our on-site user testing with face-to-face training courses.

When choosing the testing location, we look at:

- Where the highest traffic for the specific services we want to test comes from

- Whether we have already covered this country or region in the past, during a Member Lunch or other meetings

- Whether the geopolitical and logistical conditions allow us to organise usability testing sessions

With that in mind, we have successfully organised usability testing sessions in Dublin (2016), Skopje (2017), Kyiv (2018), and Bucharest (2019). As we already receive a lot of feedback from users in Western Europe, we try to prioritise other parts of our service region when organising our sessions.

Recruiting participants

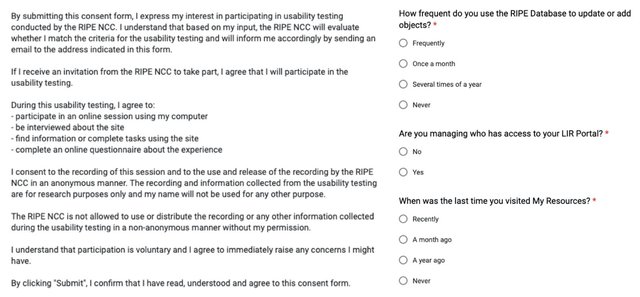

Recruiting participants is not an easy task, as they have to fit specific criteria and be willing to spend time on the testing. However, with the help of the Learning and Development team, who regularly deliver face-to-face training courses (110 in 2019), we can easily approach members and ask them to take part in usability testing. If they accept, we send them an invitation with a consent form to fill in.

Snapshot from the usability testing consent form.

"Testing with 5 users uncovers 85% of the issues" - widely cited industry rule of thumb (Jakob Nielsen)

As good practice, we recruit around ten participants who fit our criteria for each session. Depending on the training course and the location, we usually test five users per day at the end of the course.
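The five-user rule of thumb is usually traced back to a model by Jakob Nielsen and Tom Landauer, in which the expected share of problems found by n testers is 1 - (1 - L)^n, where L is the probability that a single tester runs into a given problem. The sketch below assumes Nielsen's commonly quoted average of L = 0.31; the real value for any particular service may differ, so the numbers are illustrative only.

```python
def share_of_problems_found(n_users: int, detection_rate: float = 0.31) -> float:
    """Expected share of usability problems found by n_users testers.

    Implements the Nielsen-Landauer model 1 - (1 - L)^n. The default
    L = 0.31 is an assumption taken from Nielsen's published average,
    not a measured RIPE NCC figure.
    """
    if n_users < 0:
        raise ValueError("n_users must be non-negative")
    if not 0.0 <= detection_rate <= 1.0:
        raise ValueError("detection_rate must be between 0 and 1")
    # Probability a given problem is missed by every tester is (1 - L)^n.
    return 1.0 - (1.0 - detection_rate) ** n_users


if __name__ == "__main__":
    for n in (1, 3, 5, 10):
        print(f"{n:>2} users -> {share_of_problems_found(n):.0%}")
```

With L = 0.31, five users give roughly 84%, which is where the rounded 85% figure comes from. The curve also flattens quickly: each additional tester beyond five adds progressively fewer new findings.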

Data analysis and reporting

After gathering feedback during the testing session, we analyse all recordings and eliminate possible outliers. These could be participants who took much longer, or were much quicker, to complete tasks than the average.
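The outlier check described above can be sketched as follows. This is a hypothetical illustration rather than our actual procedure: the function name, the input format (task time in seconds per participant ID) and the 0.5x / 2x thresholds are all assumptions. Each participant's time is compared with the average of the *other* participants, so that a single extreme value does not inflate the average it is being judged against.

```python
from statistics import mean


def flag_time_outliers(
    times: dict[str, float], lower: float = 0.5, upper: float = 2.0
) -> list[str]:
    """Return IDs of participants whose task time is unusually short or long.

    Uses a leave-one-out comparison: each time is divided by the mean of
    all other participants' times. The 0.5x / 2x thresholds are hypothetical.
    """
    if len(times) < 2:
        return []  # nothing to compare against
    flagged = []
    for participant, seconds in times.items():
        others = [t for p, t in times.items() if p != participant]
        baseline = mean(others)
        if baseline <= 0:
            continue  # cannot form a meaningful ratio
        ratio = seconds / baseline
        if ratio < lower or ratio > upper:
            flagged.append(participant)
    return flagged


if __name__ == "__main__":
    task_times = {"P1": 120, "P2": 130, "P3": 110, "P4": 400, "P5": 125}
    print(flag_time_outliers(task_times))
```

A ratio rule is used here instead of the more familiar "mean plus or minus two standard deviations" test because, with only five participants, no single value can lie more than about 1.8 sample standard deviations from the mean, so that test would never flag anyone.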

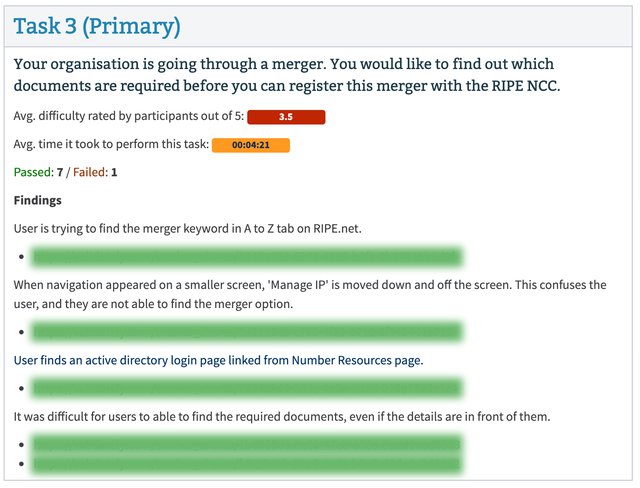

We then compile the data according to the following criteria:

- Average difficulty grade

- Average time on task

- Number of passed and failed participants

- Findings with the recording evidence

- Suggestions for improvement

Compiled data of recordings and findings. Links are blurred for data privacy.
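The quantitative part of this compilation (the first three criteria in the list above) can be sketched per task as below. The `TaskResult` fields, the 1 to 5 difficulty scale and the meaning of "passed" are hypothetical; the findings and suggestions remain qualitative and are not covered by this sketch.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class TaskResult:
    """One participant's attempt at one task (field names are hypothetical)."""
    participant: str
    difficulty: int  # self-reported grade, assumed 1 (easy) to 5 (hard)
    seconds: float   # time on task
    passed: bool     # assumed: completed the task without help


def summarise_task(results: list[TaskResult]) -> dict[str, float]:
    """Average difficulty, average time on task, and pass/fail counts."""
    if not results:
        raise ValueError("no results for this task")
    passed = sum(1 for r in results if r.passed)
    return {
        "avg_difficulty": mean(r.difficulty for r in results),
        "avg_seconds": mean(r.seconds for r in results),
        "passed": passed,
        "failed": len(results) - passed,
    }


if __name__ == "__main__":
    sample = [
        TaskResult("P1", 2, 90, True),
        TaskResult("P2", 4, 150, False),
        TaskResult("P3", 3, 120, True),
    ]
    print(summarise_task(sample))
```

In practice this would run on the data left after outliers have been removed, once per task.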

Improvements based on data

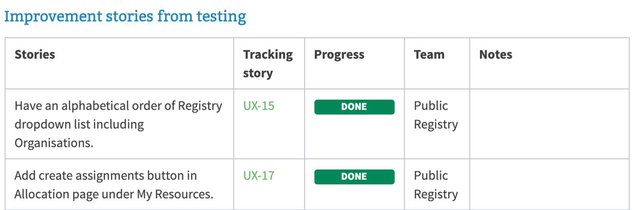

The analysed data is published on our intranet, where it is available to colleagues across the organisation. We then discuss possible solutions with the product owners, and these are finally translated into user stories for our Scrum teams to implement.

Once we finish implementing all of the improvement stories that came out of the usability testing sessions, we send a note to everyone who participated to thank them for their help and let them know about the changes we made as a result.

A snapshot of improvement stories.

Conclusion

We are constantly looking for ways to make our services better, and usability testing is one way to do this. If you use one of our services, we invite you to give us feedback by participating in our user testing sessions or by contacting us directly via our website. As we are not planning to hold face-to-face sessions any time soon due to COVID-19, we are now conducting all of our user testing sessions virtually.

If you are interested in user experience research, keep an eye on RIPE Labs as we will be publishing usability case studies in the coming months.

Image credits:

- Feature icon by monikk

- Stakeholder icon by DesignBite

- Tasks icon by Larea
