Last Thursday, 1 June, RIPEstat suffered the longest service outage in years. Although the root cause was, in hindsight, trivial, it took more than three hours to fully recover. As unfortunate as this outage was, it yielded vital takeaways that will hopefully help prevent future outages.

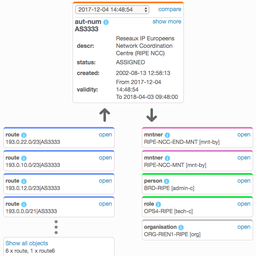

The place at the front of the line is not always the easiest to occupy. This certainly holds true for RIPEstat, which serves as the interface to a plethora of data sources. With more than 20 backends to connect to and more than 30 million requests per day, RIPEstat is the RIPE NCC's workhorse when it comes to information delivery.

From a probabilistic point of view, every additional backend and every increase in request volume raises the chance that something fails, breaking whatever link in the chain happens to be the weakest. And indeed, after a long period of smooth operation, we experienced, somewhat against the odds, two outages in the past seven days. The first one was last Thursday, 1 June, between 13:04 and 16:52 UTC. The second one was yesterday, 7 June, between 03:30 and 06:40 UTC.

In both cases, the outages were primarily caused by backend-related issues. In the first case, recent changes made to a backend triggered a bug in the interface logic. In the second, a backend was forced into a limbo state, most probably by load (we are still investigating).

As bad as these outages were, I see them as an opportunity to grow and learn, with the goal of building an information system that satisfies the data needs of our community in a trusted and reliable manner.

In the aftermath, we can say that some things worked very well and others did not. We are working to improve the latter as soon as possible.

Among the immediate and most visible changes for users were:

- Disabling bypassing of the result cache (see here)

  This was enforced after we discovered that some users misused this feature and applied it to basically every API request. Given that we serve 30% of our results from cache, bypassing it on a large scale pushes that load onto the backends and can threaten the stability of the system if many users do it at once.

- Blocking users creating high load

  As a first measure to stabilise the system and make it accessible to everyone, we blocked some users who were creating heavy load. All of these blocks were lifted as soon as we could guarantee safe operation.
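To put the 30% figure in perspective, here is a rough back-of-the-envelope estimate. It assumes, for illustration only, that the cache hit rate $h$ applies uniformly to the roughly 30 million daily requests mentioned above, and it considers the worst case in which every request bypasses the cache:

$$
\begin{aligned}
N &\approx 30 \times 10^{6}\ \text{requests/day}, \qquad h = 0.3 \\
N_{\text{backend}} &= (1 - h)\,N \approx 21 \times 10^{6}\ \text{requests/day} \\
N_{\text{backend}}^{\text{bypass}} &= N \approx 30 \times 10^{6}\ \text{requests/day} \\
\frac{N_{\text{backend}}^{\text{bypass}}}{N_{\text{backend}}} &= \frac{1}{1 - h} = \frac{1}{0.7} \approx 1.43
\end{aligned}
$$

Under these assumptions, universal cache bypassing would raise backend load by roughly 43%, without any increase in the number of users.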

Some of the other issues that we discovered require long-term changes. These will include:

- Improving monitoring to ensure we are alerted before users are affected

- Improving emergency protocols

- Separating management of data calls from their execution

- Isolating system responsibilities

- Conducting regular disaster drills

Aside from the points we will improve on in the near future, I'd like to mention something that worked out exceptionally well. The "sourceapp" parameter, a unique identifier per user, allowed us to inform users about any immediate changes we applied to the system. With that in mind, I'd like to encourage all regular users who have not done so already to share a contact email address with us and to use the sourceapp parameter on every data call (again, see more information on using data calls).
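As an illustration of attaching the sourceapp parameter to every data call, here is a minimal Python sketch. It assumes the public RIPEstat Data API URL pattern `https://stat.ripe.net/data/<call>/data.json` with `resource` and `sourceapp` query parameters; the helper names `data_call_url` and `fetch_data_call` and the identifier `example-monitoring-tool` are hypothetical.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

# Assumed base URL of the RIPEstat Data API; data calls follow the pattern
# https://stat.ripe.net/data/<call-name>/data.json
RIPESTAT_BASE = "https://stat.ripe.net/data"


def data_call_url(call: str, resource: str, sourceapp: str, **params: str) -> str:
    """Build a data call URL that always carries a sourceapp identifier.

    Hypothetical helper: refusing an empty sourceapp makes it impossible
    to forget the identifier on any call built through this function.
    """
    if not sourceapp:
        raise ValueError("sourceapp must be a non-empty identifier")
    query = {"resource": resource, "sourceapp": sourceapp, **params}
    # urlencode percent-encodes values, e.g. "/" in a prefix becomes "%2F"
    return f"{RIPESTAT_BASE}/{call}/data.json?{urlencode(query)}"


def fetch_data_call(call: str, resource: str, sourceapp: str, **params: str) -> dict:
    """Perform the request and decode the JSON response (requires network access)."""
    with urlopen(data_call_url(call, resource, sourceapp, **params), timeout=30) as resp:
        return json.load(resp)


if __name__ == "__main__":
    # "example-monitoring-tool" is a placeholder; use your own registered identifier.
    print(data_call_url("as-overview", "AS3333", "example-monitoring-tool"))
```

Routing all calls through one helper like this is a simple way to make sure the identifier is never omitted, which is what lets the operators reach you when something changes.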

Last but not least, I would like to thank everyone who was involved in the recovery process:

- Our Information Technology department

- Our Global Information Infrastructure department

- Iñigo Ortiz de Urbina for providing me with insight into 10 GB of log data

- My colleagues at Research and Development

Special thanks go to Michael Sonntag and Rudolf Hörmanseder, both from the Institute of Networks and Security at the Johannes Kepler University in Linz, for providing me with Internet access when my meeting with representatives of the university was interrupted by the first outage.
