We’ve been brainstorming on a process to determine the criticality level of our services, and we’d now like to ask the community for input on how we should approach this effort moving forward.

Over the past few months, we’ve been working on a cloud strategy framework. The final version was published in October, but there was one piece still outstanding - a methodology for determining the criticality level for our services. We would now like to hear what you think about how we are approaching this. We appreciate that some of the ideas presented here may seem a little complicated. If parts of the article are unclear, please let us know by dropping a comment below or asking us at the RIPE 83 Meeting this week.

Our Plans

For some time, we’ve been looking at different standards and brainstorming with our internal teams, and we’d now like to share a proposal to get this discussion started. The process we have in mind will follow these steps:

- Publish this first proposed straw man article on the draft criticality level framework

- Gather the community’s feedback on the article and the model

- Apply the feedback, publish a new draft that includes a list of our services classified according to the framework, and ask for further comments and suggestions

- Integrate the outcome as a complement to the Cloud Strategy Framework

To determine the criticality level of our existing services, we’ll start with this proposed model framework and work with the relevant service owners and Working Groups to ensure we are capturing all elements and criteria. We deliberately haven’t offered an example of how this framework applies to one of our existing services - e.g. the RIPE Database - as this is only a proposed model at this stage. We need to have more discussions and make adjustments based on the feedback we receive before we move ahead and start properly categorising our services in this criticality framework.

The Idea

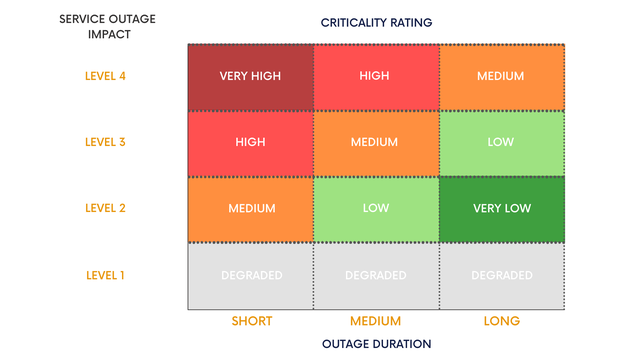

We want to start by describing the basic idea. Each service has two criticality levels: one reflecting the effect that an outage has on internal (RIPE NCC) impact areas, and one reflecting its effect on external (the global Internet) impact areas. These are measured on a six-point scale from ‘Degraded’ to ‘Very High’.

Intuitively, the longer an outage persists, the higher its impact. This also depends on what is affected. For example, if a service goes down for only a little while but this causes a significant impact to the regular operation of a specific area (whether external or internal), then it should be assigned a higher criticality level.

Before we continue any further, let’s explain what we mean by ‘impact areas’ and define the impact levels of outages within these areas.

Determining the Impact Areas

External Impact Areas

The Internet Society’s Internet Impact Assessment Toolkit identifies the critical properties of the Internet. We used this to help us define the external impact areas of our services. These areas capture the impact that any service outage will have on the global operation of the Internet (e.g. DNS or Routing). Based on the toolkit, our services relate to the following domains:

- The Single Distributed Routing System

- Common Global Identifiers (IP addresses and the Internet’s Domain Name System (DNS))

A service disruption can affect each domain differently, for example a global versus a local routing disruption. We categorised the level of impact from 1 to 4 (L1 being the lowest and L4 the highest) and summarised the levels for each area in the table below. Estimating the effect a service outage has on the three external impact areas in the table (Routing, IP addresses and DNS) yields the external criticality level.

| Impact area | Impact Level 4 | Impact Level 3 | Impact Level 2 | Impact Level 1 |
|---|---|---|---|---|
| Routing | Global Internet routing disruption | Regional routing disruption | Local routing disruption | Degraded routing performance |
| IP addresses | Global disruption | Regional disruption | Local disruption | Degraded / read-only |
| DNS | Global DNS disruption | Regional DNS disruption | Local DNS disruption | Degraded / slow |
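
To make the table easier to reason about, here is a minimal Python sketch that encodes it as a lookup structure. The area names and level descriptions come from the table. The function names `describe_impact` and `worst_impact` are hypothetical, and picking the highest level across areas is only one possible way to summarise an assessment, not a rule defined in this proposal.

```python
# External impact levels per area, copied from the table above.
EXTERNAL_IMPACT = {
    "Routing": {
        4: "Global Internet routing disruption",
        3: "Regional routing disruption",
        2: "Local routing disruption",
        1: "Degraded routing performance",
    },
    "IP addresses": {
        4: "Global disruption",
        3: "Regional disruption",
        2: "Local disruption",
        1: "Degraded / read-only",
    },
    "DNS": {
        4: "Global DNS disruption",
        3: "Regional DNS disruption",
        2: "Local DNS disruption",
        1: "Degraded / slow",
    },
}


def describe_impact(area, level):
    """Return the table's description for an impact area and level (1-4)."""
    return EXTERNAL_IMPACT[area][level]


def worst_impact(assessment):
    """Return the (area, level) pair with the highest level.

    `assessment` maps each impact area to an estimated level.
    ASSUMPTION: using the maximum is an illustrative choice only;
    the proposal does not say how areas are combined.
    """
    area = max(assessment, key=assessment.get)
    return area, assessment[area]


if __name__ == "__main__":
    # Hypothetical assessment of a single outage, for illustration.
    example = {"Routing": 2, "IP addresses": 1, "DNS": 3}
    area, level = worst_impact(example)
    print(area, level, describe_impact(area, level))
```

Keeping the table as data rather than hard-coding it into logic means the levels can be adjusted as feedback comes in without changing any surrounding code.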

Internal Impact Areas

We consider these areas critical within our organisation, and we used ISO/IEC 27005:2011 and our internal risk assessment to define them. The areas range from financial challenges to deterioration of service quality, legal concerns, and so on. As with the external impact areas, the effect an outage may have on these internal impact areas will give the internal criticality level. For these internal impact areas, we will also categorise the levels of impact from 1 to 4.

Determining the Service Criticality Level

We’ve used industry-standard “classes of nines” to define the various service outage types:

- Short outage: maximum outage duration of approximately 15 minutes per quarter (equivalent to a minimum of 99.99% availability).

- Medium outage: maximum outage duration of about 2 hours per quarter (equivalent to a minimum of 99.9% availability).

- Long outage: maximum outage duration of about 22 hours per quarter (equivalent to a minimum of 99% availability).
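
As a rough check on these figures, the Python sketch below converts each availability percentage into a downtime budget per quarter and classifies a given amount of downtime. It assumes an average quarter of 91.25 days (365 / 4), and the function names are hypothetical. Under that assumption the budgets come out at roughly 13 minutes, 2.2 hours and 21.9 hours, which is in line with the rounded durations in the list above.

```python
# ASSUMPTION: an average quarter of 365 / 4 = 91.25 days.
QUARTER_MINUTES = (365 / 4) * 24 * 60

# Outage classes and minimum availability, from the list above,
# ordered from the strictest class to the most lenient.
OUTAGE_CLASSES = [("short", 99.99), ("medium", 99.9), ("long", 99.0)]


def downtime_budget_minutes(availability_percent):
    """Maximum downtime per quarter, in minutes, for a given availability."""
    return QUARTER_MINUTES * (100 - availability_percent) / 100


def classify_outage(downtime_minutes):
    """Return the first outage class whose budget covers the downtime.

    Returns None when the downtime exceeds even the 'long' budget.
    """
    for name, availability in OUTAGE_CLASSES:
        if downtime_minutes <= downtime_budget_minutes(availability):
            return name
    return None


if __name__ == "__main__":
    for name, availability in OUTAGE_CLASSES:
        budget = downtime_budget_minutes(availability)
        print(f"{name}: {availability}% -> {budget:.1f} minutes per quarter")
```

Note that this sketch classifies against the computed budgets rather than the rounded durations, so a 14-minute outage would fall into the medium class here even though it is under "approximately 15 minutes".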

Based on all the parameters mentioned earlier, we’ll follow this process to assign two criticality levels to each one of our services (one will be related to the impact of a service outage on the external areas and the other on the internal ones):

- Determine the impact areas

- Classify the duration of service outages

- Define the impact levels for each impact area, and

- Propose the criteria for determining the criticality level of any service.

The heat map below is an example of the process we recommend to assign the criticality level to each RIPE NCC service.

The criticality level of a service, among other parameters, will also help us define the service architecture based on the strictness level for each service, as specified in the cloud strategy framework. For more information, please refer to Table 2: “Strictness According to Criticality” of our recent RIPE Labs article.

We Need Your Input

These are the plans that we have in mind, and we’d like to hear your opinions on the model and any proposed changes you may have. Please leave your comments below or join the RIPE NCC Services WG session at RIPE 83 where we’ll be presenting this proposal.

We’ll soon share another update based on the feedback we have gathered from the community, our internal stakeholders and service owners, and the relevant Working Groups for each service.
