The pilot we ran to assess the feasibility of involving virtual machines in the pool of RIPE Atlas anchors is complete and the results are good.

The pilot showed, first and foremost, that RIPE Atlas anchor VMs work as well as we’d hoped. In fact, based on all the data we've been able to analyse so far, there's no discernible difference in the performance of anchor VMs and their hardware counterparts.

Comparison on this front has been made possible through the participation of DigitalOcean, who were able to set up an anchor VM in close proximity to a physical anchor they connected some time ago. For a full analysis of differences in performance between the two machines, look out for a follow-up article in the coming week.

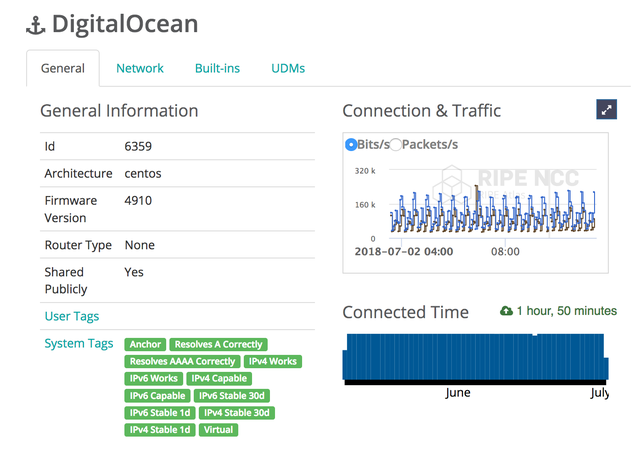

Figure 1: Overview of RIPE Atlas anchor at DigitalOcean

All in all, this is a successful outcome, and one that gives us every reason to move on to the production phase for RIPE Atlas anchor VMs. With that in mind, we'd like to start out by thanking everyone who helped along the way, especially those who volunteered to take part in the pilot itself.

Now, with a number of questions still open on how best to move forward, we thought we’d go through some of the possibilities and invite the community to lend their input.

Approaching Installation

The pilot allowed us to get a feel for some of the logistical and technical challenges that are apt to crop up in the process of anchor VM installation. Based on what we encountered there, here are the possibilities we're entertaining:

- Option 1: We make our requirements known to the host and the host delivers a VM that meets those requirements. This would let us pursue the VM option without committing more resources than those already allocated to the deployment and maintenance of physical anchors.

- Option 2: We set up VMs ourselves in locations where the presence of virtual anchors would bring added benefits to RIPE Atlas. This would, of course, require extra resources on our side. But having virtual anchors hosted by, say, big cloud providers could justify this.

In the context of the pilot, we dealt mostly with 'option one' cases, none of which raised any serious difficulties. We did face some challenges during installation of the Amazon VM - some of which were reported in the previous update, some of which have occurred since then - but every hurdle has helped us get a better handle on how to move forward.

The options listed are not, of course, mutually exclusive. At present, our plan is to move on to the production phase with the first, resource-light, option. In doing so, we would be keen to work with big providers who are in a position to offer VMs that meet our requirements right out of the box. We're also very much willing to listen to anyone out there in the community who might want to make a case for some particular instance of option two.

As always, if you have comments or suggestions regarding potential pros or cons for the proposed scheme, we'd like to hear them.

Installation Dos and Don'ts

During the course of the pilot, we got a good chance to get clear on what we do and don't want to see happening as we go into production with the anchor VMs. Here are some of the main points.

Location

As with any RIPE Atlas probe, there are restrictions on where physical anchors can be connected. For instance, if a host plans on connecting a probe to a network that is not theirs, they have to obtain clear prior permission for the connection and use of said probe in that network (see the terms and conditions).

Given the inherent differences between virtual and physical anchors, we need to consider our stance on where we allow anchor VMs to be set up. What if company A wants to set up an anchor VM in network X? Should this be allowed? Should we make them ask permission? Or should we restrict hosts to setting up anchors only in their own networks? There are also a number of legal implications here that we'll continue to investigate before we make any decisions.

Quantity

It will very likely be a good idea to restrict the number of anchor VMs that can actually be added. After all, each anchor brings additional costs, due to storage and processing requirements. So we won't necessarily want to add as many anchor VMs as are offered to us. This will be made clear to potential hosts in a disclaimer stating that we reserve the right to decide how many anchors can be set up.

As with physical anchors, we'll also be keeping an eye on the number of anchors added per year, per host/provider. This will help mitigate all sorts of risks. For instance, in the extreme case, if we were to let people install as many VMs as they like, some parties might exploit this to run up a high number of RIPE Atlas credits. What's more, making it possible to flood the system with large numbers of anchors would also expose us to certain operational risks.

Ultimately, we will define and apply limits if and when we see that overuse of the service would not serve the interests of the community or the measurement ecosystem.

Future Risk

We do want anchor VMs to stay connected after installation. One potential risk we anticipate is that, since hosts won't be required to invest money in hardware, there may be less commitment to maintaining virtual anchors. If this were the case, anchors would be more likely to go offline out of the blue, which would significantly detract from the stability of the anchor network.

To mitigate such unwanted effects, our intention is to monitor anchor VMs closely and to report back to the community if we see too many hosts coming and going. Of course, as per the current RIPE Atlas anchor MoU, the continued upkeep of RIPE Atlas anchors, whether physical or virtual, depends first and foremost on the efforts of hosts.

Next Steps

We want your input. The pilot went well, and we're all set to move to production, but before we go there, we want to hear what you think about the issues and proposals outlined here. For this reason, we're giving the community a month to share their thoughts before we take any further steps.

Let us know whether, for example, you object to the RIPE NCC committing extra resources to installing anchor VMs in certain beneficial locations. If you don't, maybe let us know where exactly you'd like to see those anchors. We'd also like to hear feedback on how we should go about restricting where and how many virtual anchors get set up. We invite all input, both general and specific.

If you do have something you'd like to add, please contact us between now and the end of July, either in the comments section below, or at atlas@ripe.net.

----------

Addendum (added 5 July)

Requirements for RIPE Atlas anchor VMs:

Network-wise, the anchor VMs:

- must have public IPv4 and IPv6

- must have native IPv4 and IPv6

- require static IPv4 and IPv6 addresses, which need to be unfiltered (not firewalled)

- require up to 10 Mbit/s of bandwidth (they currently use much less)

Hardware-wise, the anchor VMs:

- need 50GB of storage

- need 2GB of RAM

- need 2 vCPUs

- need 1 virtual NIC

OS-wise, the anchor VMs:

- need to have a minimal CentOS 7 installation

- need to have the VM-anchor bootstrapping pubkey added to the root account's authorized_keys

- need the NIC to be presented as 'eth0'

- need the storage to be presented as a single block device

- need the following partition layout:

  - partition 1: 256MB for /boot, ext4

  - partition 2: remaining space, LVM Physical Volume

    - 4GB for the / logical volume, ext4

    - 20GB for the /var logical volume, ext4

    - 4GB for the /tmp logical volume, ext4

    - 20GB for the /home logical volume, ext4

    - 2GB for swap logical volume
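As a rough illustration, the network and hardware requirements above could be expressed as a simple pre-flight check that a host runs before offering a VM. This is a minimal sketch, not a RIPE NCC tool: the `VMSpec` class, its field names and the `unmet_requirements` function are hypothetical, and treating the storage, RAM and vCPU figures as minimums is an assumption. The "native" connectivity, OS and partition layout requirements are left out for brevity.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class VMSpec:
    """Hypothetical description of a candidate anchor VM (illustrative fields)."""
    storage_gb: int
    ram_gb: int
    vcpus: int
    nic_names: List[str]
    public_ipv4: bool
    public_ipv6: bool
    static_addresses: bool
    unfiltered: bool


def unmet_requirements(vm: VMSpec) -> List[str]:
    """Return the addendum requirements that this VM does not satisfy."""
    checks = [
        (vm.public_ipv4 and vm.public_ipv6, "public IPv4 and IPv6"),
        (vm.static_addresses, "static IPv4 and IPv6 addresses"),
        (vm.unfiltered, "unfiltered (not firewalled) addresses"),
        # Assumption: the listed sizes are treated as minimums.
        (vm.storage_gb >= 50, "50GB of storage"),
        (vm.ram_gb >= 2, "2GB of RAM"),
        (vm.vcpus >= 2, "2 vCPUs"),
        # Exactly one NIC, and it must be presented as 'eth0'.
        (vm.nic_names == ["eth0"], "1 virtual NIC presented as 'eth0'"),
    ]
    return [label for ok, label in checks if not ok]


if __name__ == "__main__":
    candidate = VMSpec(storage_gb=50, ram_gb=1, vcpus=2, nic_names=["ens3"],
                       public_ipv4=True, public_ipv6=True,
                       static_addresses=True, unfiltered=True)
    for item in unmet_requirements(candidate):
        print("missing:", item)
```

An empty list means the VM meets every requirement covered by the sketch. Keeping the checks as a plain list of (condition, label) pairs makes it easy to adjust if the requirements change.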

Comments 6


Daniel Karrenberg •

Thank you for this report. I have a couple of questions: Have you tested the impact of VM performance on measurements? In particular have you implemented performance reporting that would allow us to detect if measurements are impacted by VM performance problems? Are there draft requirements for anchor VMs and do they consider VM performance? As you mention scaling the back-end and support infrastructure may become a problem if the investment of the host becomes very low. Have you considered asking the hosts of VM anchors to contribute to these costs? Daniel

Michela Galante •

Hi Daniel, I’ll respond to each of your questions in turn. No, we haven't tested the impact of VM performance on measurements and have no plans to do any performance reporting. However, if more people are interested in it we might include this in our planning. What we did do was compare the measurement results between a physical and a virtual anchor, both hosted in the same location. We didn’t see any significant differences. You can read more in this article on RIPE Labs: https://labs.ripe.net/Members/stephen_strowes/comparing-virtual-and-metal-ripe-atlas-anchors

There are draft requirements that were given to the volunteers in the pilot. As it might be interesting for everyone to see these, we have added them to the end of this article as an addendum. As you can see, they do not consider VM performance but they are based on the requirements of the hardware anchors.

Yes, we are talking about possible contributions. Asking RIPE Atlas users for contributions when they become more involved in the usage of our system is not new (but this is independent of whether the anchor is a VM or the hardware version). Thank you for your questions!

Richard Havern •

GEANT has two long-term VM environments available, in Frankfurt and Paris. We would happily host an anchor in either or both locations in addition to the physical anchor in London.

Jared Mauch •

The steps I used to create my anchor went roughly like this:

virt-install --name us-chi-as2914.anchors.atlas.ripe.net --ram 2048 --disk path=/ssd/us-chi-as2914.anchors.atlas.ripe.net.qcow2,size=50 --vcpus 2 --os-type linux --graphics none --network type=direct,source=p4p1,source_mode=bridge,model=virtio --console pty,target_type=serial -x 'console=ttyS0 noverifyssl ks=https://x.x.x.x/ks/anchor.ks ksdevice=eth0 ip=a.b.c.d netmask=255.255.255.192 gateway=a.b.c.d' --location CentOS-7-x86_64-Minimal-1708.iso

I don't know to what extent the anchor.ks file is private that allows Ansible to take over the VM, but this did work well for getting the VM working.

Kristian Klausen •

That is a rather powerful VM (the TL-MR3020 probes have 32MB of RAM and an Atheros AR9331@400MHz). Does the VM need to be so powerful? I would love to participate with a probe if I could run a probe on my (weak) Intel Atom server, either as a VM or in some sort of container (systemd-nspawn/docker?). I will be more than happy to experiment if I could get access to the anchor.ks file (feel free to mail me at firstname@lastname.dk)

Robert Kisteleki •

An anchor needs more resources than a probe. It has services on it, for example. About probe VMs: we're looking into the possibility of introducing "software probes" deployable as a software package or such. We'll report back on this activity when we have more information.