Here are the first results of our DNS transfer size measurements. We want to identify possible problems for users of K-root once it starts giving DNSSEC responses to resolvers that request them. DNSSEC responses are significantly larger than current responses, and we want to learn whether these larger responses will reach the resolvers.

Introduction

The original DNS protocols limited DNS messages sent over UDP to 512 bytes, so that they would fit within the 576-byte datagram that every IPv4 host must be able to accept. Later the protocols were extended to allow the client, typically a resolver, to signal that it is willing to reassemble fragmented UDP responses up to a certain size; this extension is commonly called EDNS.

With the advent of DNSSEC, clients can use the DO bit to signal to the server that they want responses that include DNSSEC information. Responses with DNSSEC information typically do not fit within a 576-byte IP packet. If the client has not signalled a sufficiently large buffer via EDNS, the server has to omit additional data or even truncate the response itself. This may slow down responses to the client and cause more fallback to TCP, which puts additional load on the server. In some cases, middleware may prevent large responses from reaching the clients altogether.
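As a concrete illustration of this signalling, the sketch below builds a query packet by hand in Python using only the standard library. It assumes the wire formats of RFC 1035 (DNS messages), RFC 2671 (the EDNS0 OPT pseudo-record, whose CLASS field carries the advertised UDP buffer size) and RFC 3225 (the DO bit in the OPT record's flags). The helper names `encode_name` and `build_query` are hypothetical; a real resolver constructs these packets itself.

```python
import struct
from typing import Optional

RD_FLAG = 0x0100   # "recursion desired" bit in the header flags (RFC 1035)
TYPE_OPT = 41      # OPT pseudo-RR type (RFC 2671)
CLASS_IN = 1
DO_FLAG = 0x8000   # DO bit: top bit of the OPT record's 16-bit flags (RFC 3225)


def encode_name(name: str) -> bytes:
    """Encode a domain name as length-prefixed ASCII labels ending in a zero byte."""
    out = b""
    for label in name.rstrip(".").split("."):
        if label:
            raw = label.encode("ascii")
            out += struct.pack("!B", len(raw)) + raw
    return out + b"\x00"


def build_query(qname: str, qtype: int, txid: int = 0,
                edns_bufsize: Optional[int] = None, do: bool = False) -> bytes:
    """Build a DNS query, optionally with an EDNS0 OPT record.

    edns_bufsize=None produces a classic non-EDNS query (512-byte limit).
    The DO bit lives inside the OPT record, so do=True requires EDNS.
    """
    if do and edns_bufsize is None:
        raise ValueError("DO bit requires an EDNS OPT record")
    arcount = 0 if edns_bufsize is None else 1
    # Header: ID, flags, QDCOUNT, ANCOUNT, NSCOUNT, ARCOUNT
    header = struct.pack("!6H", txid, RD_FLAG, 1, 0, 0, arcount)
    question = encode_name(qname) + struct.pack("!HH", qtype, CLASS_IN)
    if edns_bufsize is None:
        return header + question
    # OPT RR: empty (root) owner name, TYPE=41, CLASS=advertised UDP payload size,
    # TTL split into extended RCODE (8 bits), version (8 bits), flags (16 bits),
    # then RDLENGTH=0 (no options).
    opt = b"\x00" + struct.pack("!HHBBHH", TYPE_OPT, edns_bufsize, 0, 0,
                                DO_FLAG if do else 0, 0)
    return header + question + opt
```

For example, `build_query(".", 2, edns_bufsize=4096, do=True)` would ask for the root NS set while announcing a 4096-byte buffer and requesting DNSSEC records; leaving out `edns_bufsize` gives a classic query whose responses are limited to 512 bytes.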

The aim of our measurements is to gain insight into the real ability of currently deployed resolvers to receive large responses, and into how that ability relates to whether the resolvers signal EDNS capability and/or request the larger DNSSEC responses.

Methodology

As explained in "Preparing K-root for a Signed Root Zone", we have deployed the OARC reply-size tester, with some extensions, on machines co-located with the five global instances of K-root (Amsterdam, London, Frankfurt, Tokyo, Miami). We asked you to test your resolvers against it using either a Java tool or simple DNS queries. We also arranged for some visitors to the RIPE NCC website to run this test automatically. We can now report the first results, based on 685,547 measurements taken between 12 and 31 January 2010. These measurements came from resolvers at 43,060 distinct source addresses. We report these first results in terms of the number of measurements because we do not yet fully understand how source addresses relate to distinct resolvers.
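A reply-size test has to find the largest response that still reaches the resolver. One generic way to do that is a binary search over response sizes that stops at a coarse resolution. The sketch below is only an illustration of that idea, not the OARC tester's actual implementation: the probe function `arrives`, the 4096-byte upper bound and the 32-byte resolution are all assumptions.

```python
from typing import Callable


def largest_deliverable_size(arrives: Callable[[int], bool],
                             low: int = 0, high: int = 4096,
                             resolution: int = 32) -> int:
    """Binary-search the largest response size (bytes) that reaches the client.

    `arrives(n)` is a hypothetical probe returning True if an n-byte response
    got through. Assumes arrives(low) is True and that arrival is monotone in
    size; `high` itself is never probed. The search stops once the interval is
    no wider than `resolution`, so the result is a lower bound, not exact.
    """
    while high - low > resolution:
        mid = (low + high) // 2
        if arrives(mid):
            low = mid   # mid fits: the limit is at least mid
        else:
            high = mid  # mid was lost: the limit is below mid
    return low
```

The coarse stopping rule is one reason a tool of this kind does not report sizes exactly to the byte.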

Measured Transfer Sizes

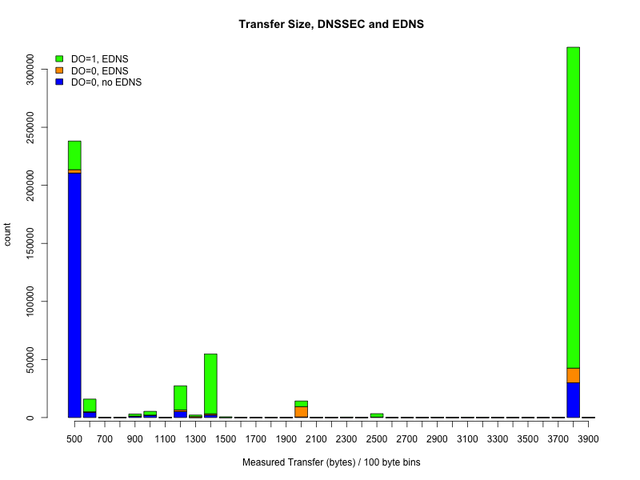

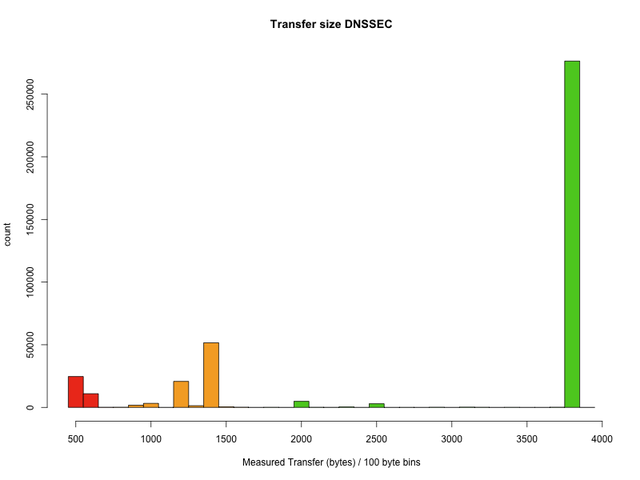

The graph below shows the number of measurements for 100-byte bins of transfer sizes we measured:

As expected, there is a peak around 400-500 bytes, representing mostly classic non-EDNS-capable resolvers. Note that our tool does not measure transfer sizes exactly to the byte, and that dropped packets also influence the measurements; this explains why no bin is completely empty. The vast majority of the 400-500 byte measurements neither announce EDNS capability nor request DNSSEC responses; these resolvers will not cause any problems for a DNSSEC-enabled K-root. A small number of these resolvers do request DNSSEC responses; these may try to fall back to TCP for longer responses. [ UPDATE: we updated this graph from the original article; it incorrectly had a 'DO=1,noEDNS' label for 'DO=0,EDNS' ]
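The histogram itself is a simple aggregation. As a sketch, assuming each measurement is available as a hypothetical `(transfer_size, edns, do)` tuple, the 100-byte bins and the DO/EDNS categories used in the graph's labels could be computed like this:

```python
from collections import Counter, defaultdict


def category(edns: bool, do: bool) -> str:
    """Label a measurement in the style of the graph legend, e.g. 'DO=0,EDNS'."""
    return f"DO={int(do)},{'EDNS' if edns else 'noEDNS'}"


def histogram(measurements, width=100):
    """Count measurements per width-byte bin and per category.

    `measurements` is an iterable of (transfer_size, edns, do) tuples, a
    hypothetical record layout. Returns {bin_lower_edge: Counter({label: n})}.
    """
    bins = defaultdict(Counter)
    for size, edns, do in measurements:
        bins[(size // width) * width][category(edns, do)] += 1
    return dict(bins)
```

Each bin is keyed by its lower edge, so a 450-byte measurement falls into the 400 bin and a 3850-byte one into the 3800 bin.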

The really good news is that there is another, even higher peak at the 3800-byte bin; this represents mostly EDNS-capable resolvers with a 4096-byte transfer size. In this measurement, these resolvers are already in the majority, and in general they will not cause problems for a DNSSEC-enabled K-root. Interestingly, some of the resolvers that are capable of receiving large responses do not announce EDNS capability, which causes DNS servers to truncate responses to them; we will see whether it is possible to characterise those resolvers further so that they can be configured correctly.

The third noticeable feature of the distribution is a significant number of observed transfer sizes between 1000 and 1400 bytes. These values are close to frequently used layer 2 frame sizes, which suggests that in some places the real transfer size is limited to around these values, either by configuration or possibly by middleware that prevents UDP fragmentation. These measurements are far fewer than those in the other two peaks. Almost all of them show EDNS capability. If the EDNS buffer size is configured correctly, these resolvers should not cause any problems for a DNSSEC-enabled K-root.
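The frame-size hypothesis comes down to simple arithmetic: a DNS response larger than the link MTU minus the IP and UDP headers must be fragmented, so a middlebox that drops fragments caps the transfer size just below the MTU. The sketch below assumes minimal headers (no IPv4 options, no IPv6 extension headers); it only illustrates the arithmetic and does not by itself confirm the cause of the peaks.

```python
# Header sizes in bytes, assuming no IPv4 options or IPv6 extension headers.
IPV4_HEADER = 20
IPV6_HEADER = 40
UDP_HEADER = 8


def max_unfragmented_dns_payload(link_mtu: int, ipv6: bool = False) -> int:
    """Largest DNS message that fits in a single UDP datagram without IP fragmentation."""
    ip_header = IPV6_HEADER if ipv6 else IPV4_HEADER
    return link_mtu - ip_header - UDP_HEADER
```

On a 1500-byte Ethernet MTU this gives 1472 bytes over IPv4 and 1452 bytes over IPv6.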

Announced EDNS Buffer Sizes

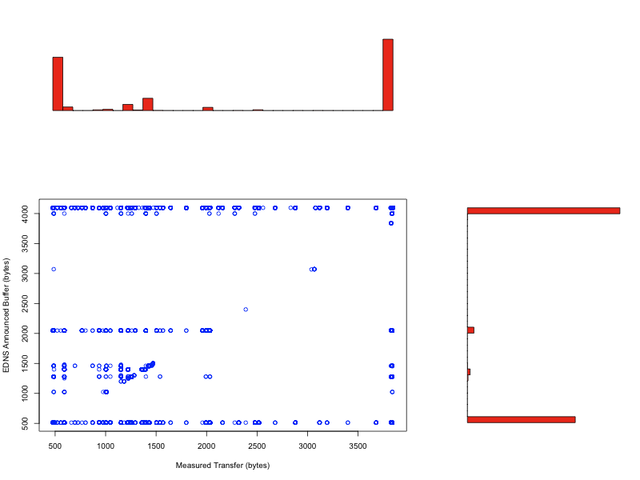

The next graph shows how the measured transfer size relates to the buffer size announced via EDNS. Measurements without EDNS capability are counted as announcing 512 bytes here.

The announced buffer sizes are clearly bimodal at 512 bytes and 4096 bytes, with a small peak at 2048 bytes and only a few measurements at the 1000-1400 byte sizes. Ideally the measured transfer size would closely follow the announced buffer size, producing a diagonal line in the scatter plot. Unfortunately, this is not what we measure in practice: the scatter plot clearly shows that in a significant number of cases the configured buffer sizes do not match the real capabilities.
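Placing a single measurement relative to that diagonal can be sketched as follows, using the same convention as the graph (no EDNS counts as announcing 512 bytes). "Above" means the resolver announces more than can actually reach it; "below" means it announces less than it could receive. The `tolerance` parameter, which absorbs the tool's imprecision, and the function name are assumptions for illustration.

```python
from typing import Optional

NO_EDNS_BUFFER = 512  # as in the graph: no EDNS counts as announcing 512 bytes


def diagonal_position(measured: int, announced: Optional[int], tolerance: int) -> str:
    """Place one measurement relative to the ideal announced == measured line.

    announced=None means the query carried no EDNS OPT record.
    tolerance (bytes) is a hypothetical allowance for measurement imprecision.
    """
    buffer = NO_EDNS_BUFFER if announced is None else announced
    if buffer - measured > tolerance:
        return "above"   # announces more than can arrive: responses may be lost
    if measured - buffer > tolerance:
        return "below"   # announces less than it could receive: needless truncation
    return "on"
```

With a 200-byte tolerance, a resolver announcing 4096 bytes but measured at 1400 bytes is "above", while a non-EDNS resolver measured at 3800 bytes is "below".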

The measurements below the diagonal mean that the buffer size the resolver announces via EDNS is smaller than what it can really receive. This is suboptimal because it causes the server to truncate responses that would not otherwise need truncating, which may lead to retries using TCP. However, the DNS protocol will still function correctly and the resolver will still receive its full answer without time-outs.

The measurements above the diagonal mean that the buffer size the resolver announces via EDNS is larger than what can actually be transferred to it successfully. These cases are critical: as responses get longer, the server will send responses that never actually arrive at the resolver. At best this causes query retransmissions and delays; at worst, time-outs. Eventually it will also cause retries using TCP.
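The two cases can be summarised in a deliberately simplified model of a single query. This is a sketch under stated assumptions rather than the retry logic of any particular resolver: it ignores UDP retransmissions and the option of omitting additional data instead of truncating, and `likely_outcome` is a hypothetical name.

```python
def likely_outcome(response_size: int, announced: int, capability: int,
                   tcp_works: bool = True) -> str:
    """Rough outcome of one query in a simplified model.

    announced:  EDNS buffer size the resolver signals (512 if no EDNS).
    capability: largest UDP response that actually reaches the resolver.
    tcp_works:  whether a TCP fallback can get through (e.g. no blocking firewall).
    """
    if response_size <= announced:
        # The server sends the full response over UDP.
        if response_size <= capability:
            return "answered over UDP"
        # Sent but lost on the way (above the diagonal): the resolver
        # only falls back to TCP after waiting and retrying.
        return "timeout, then TCP" if tcp_works else "no answer"
    # Response exceeds the announced buffer: the server truncates it,
    # and the resolver should retry over TCP (below the diagonal).
    return "truncated, retried over TCP" if tcp_works else "truncated, no full answer"
```

For example, a 1600-byte response to a resolver announcing 4096 bytes but able to receive only 1472 ends in a time-out before any TCP retry, whereas the same response to a resolver announcing 512 bytes is truncated immediately and retried over TCP.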

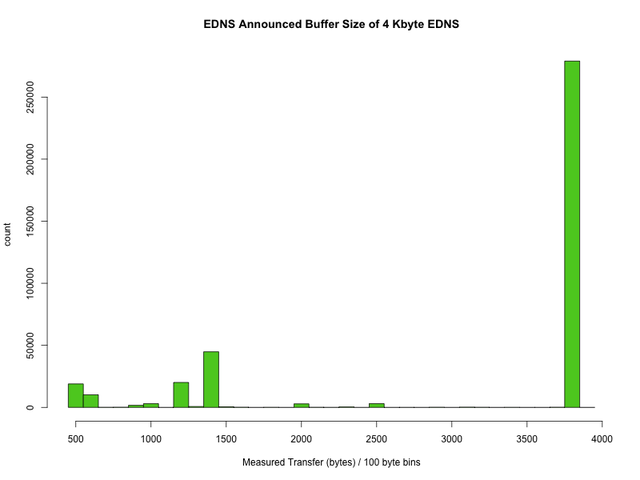

Let us look at these in more detail. The graph below shows the measured transfer sizes for all those queries that announced a 4K buffer size via EDNS:

The vast majority of these are fine. A minor point: ideally the buffer size should be configured slightly lower, to be on the safe side, but in practice this makes no difference unless the response size is very close to the limit. All resolvers below the peak on the right will experience delays and failures when responses get bigger than their real capability, unless they lower their announced buffer size to reflect what can actually be transferred to them. How would operators know to do this? By running the reply-size tester or the DIY query as explained in "Preparing K-root for a Signed Root Zone". We are also looking into publishing a list of resolver addresses and/or notifying the resolver operators - stay tuned!
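An operator who has run the reply-size test could derive a buffer size to announce along the following lines: take the measured transfer size, subtract a small safety margin, and never go below the classic 512 bytes. The safety margin is an assumption for illustration, not a value derived from our data, and `suggested_buffer` is a hypothetical helper.

```python
CLASSIC_UDP_LIMIT = 512  # the pre-EDNS DNS message size limit


def suggested_buffer(measured: int, safety_margin: int) -> int:
    """Suggest an EDNS buffer size slightly below what actually gets through.

    measured:      transfer size reported by the reply-size test (bytes).
    safety_margin: hypothetical operator choice, in bytes.
    """
    return max(CLASSIC_UDP_LIMIT, measured - safety_margin)
```

The resulting value would then go into the resolver's configuration, for example the `edns-udp-size` option in BIND or `edns-buffer-size` in Unbound.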

What causes the real transfer capability to be lower than the announced one? Misconfiguration, of course! My personal guess is that the bumps at 2000 and 2500 bytes may be caused by operating-system buffer limits. The peaks below 1500 bytes may be due to layer 2 frame sizes or other MTU-related effects, and in large part to middleware that prevents UDP fragmentation. But that is just an informed guess and needs verification.

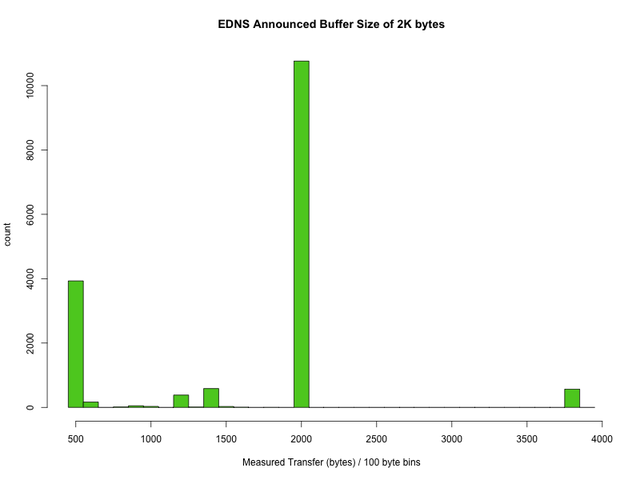

Just to be complete, here is the same graph for those resolvers than announce a buffer size of 2048 via EDNS:

No real surprises here. The bump at 3800 bytes is harmless, but the peak at 500 bytes is much higher. I hope this will give a good clue for profiling these cases.

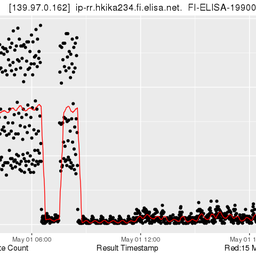

One should note that the measurements around 500 bytes for hosts that do request DNSSEC responses - pun intended - are bound to be the most critical cases. The graph below shows the measured transfer sizes for those requests that had the DO bit set:

The good news is that the vast majority of measurements yield transfer sizes that will fit current DNSSEC answers from root name servers. However, the measurements coloured red indicate transfer sizes that will be too small for at least some of the current responses. Once K-root is DNSSEC-enabled, resolvers with these results will often either receive truncated responses and possibly retry via TCP, or, if they announce too large a buffer via EDNS, receive no response at all. If such a resolver is located behind a firewall that blocks TCP and limits DNS UDP packets, it may cease to function correctly. Measurements coloured orange represent resolvers that may run into problems in key rollover scenarios or later, when key lengths increase.
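The colouring amounts to two thresholds. The exact byte values behind the red and orange categories are not given here, so in this sketch the caller supplies both; `do_risk` and its parameter names are hypothetical.

```python
def do_risk(measured: int, largest_current: int, largest_future: int) -> str:
    """Classify a DO=1 measurement by its measured transfer size (bytes).

    largest_current: size of the largest current DNSSEC response of concern.
    largest_future:  assumed size during key rollovers or with longer keys.
    Both thresholds are caller-supplied; no real values are hard-coded.
    """
    if measured < largest_current:
        return "red"     # too small for at least some current responses
    if measured < largest_future:
        return "orange"  # may break during rollovers or with longer keys
    return "ok"
```

Keeping the thresholds as parameters makes it easy to re-run the classification as the expected response sizes change.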

Further work

We will investigate how we can give concrete warnings and notices to resolver operators whose resolvers continue to show up as critical in the measurements.

We will look into characterising some peaks in the scatter plot with the aim of identifying the concrete software, configuration and firewall setups that cause them. We will shortly publish another article with examples, in order to get your help in characterising common cases. If we are successful we will issue configuration advice based on the results.

We will look into the distribution of the measurements across K-root instances to see if there are interesting differences.

We have observed some source addresses with varying EDNS buffer size announcements within a short time interval and even some source addresses with bi-modal or tri-modal transfer size measurements within a short time interval. We will try to characterise these and find out what causes these unexpected results. Once we understand this better we will try to analyse the data in terms of distinct resolvers rather than measurements.
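A first pass at finding such sources could group measurements by source address and look for differing EDNS announcements close together in time. The record layout, the time window and the function name below are assumptions for illustration only.

```python
from collections import defaultdict


def inconsistent_sources(measurements, window: float):
    """Find source addresses announcing different EDNS buffer sizes within `window` seconds.

    measurements: iterable of (timestamp_seconds, source_address, announced)
    tuples, a hypothetical record layout; announced is None for non-EDNS queries.
    """
    by_source = defaultdict(list)
    for ts, src, announced in measurements:
        by_source[src].append((ts, announced))
    flagged = set()
    for src, records in by_source.items():
        records.sort(key=lambda r: r[0])  # order by time only
        # Checking consecutive pairs suffices: any differing pair within the
        # window implies a differing consecutive pair within the window.
        for (t1, a1), (t2, a2) in zip(records, records[1:]):
            if t2 - t1 <= window and a1 != a2:
                flagged.add(src)
                break
    return flagged
```

The same grouping could be extended to flag sources whose measured transfer sizes cluster around two or three distinct values.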

Conclusions

The vast majority of measurements are from resolvers that are ready and will continue to function when K-root starts providing DNSSEC answers to resolvers that request them. Some resolvers could experience time-outs and delays due to misconfiguration and middleware.

Measurements: Wolfgang Nagele based on DNS OARC tool by Duane Wessels

Analysis: René Wilhelm, Daniel Karrenberg

Text: Daniel Karrenberg
